
May 23, 2026

Wallet Finder

April 8, 2026

An attacker slips past the first layer of controls and starts feeling around your environment. They check for an exposed file share, an overlooked admin panel, a stale SSH service, or credentials left where they should not be. Instead of reaching something valuable, they touch a decoy built to look real. You get the alert at the moment intent becomes visible.

That is the job of honey pot software. It adds a high-confidence signal that firewalls, EDR, SIEM rules, and wallet research tools do not always produce on their own. If someone interacts with a system that has no legitimate users, that activity deserves attention.

A well-placed decoy catches intent early because modern attacks often start with quiet reconnaissance, credential testing, and lateral movement. The trade-off is straightforward. Good deception tooling is believable, low-noise, and easy to maintain. Poorly deployed decoys get fingerprinted fast or create alert fatigue.

That same logic now matters on-chain.

In crypto, the trap often targets the defender. Scammers use token contracts, liquidity tricks, and sell restrictions to lure buyers into positions they cannot exit cleanly. For a DeFi trader, a honeypot is not a sensor you deploy. It is a trap you need to detect before you swap. That is why this list combines classic deception platforms with on-chain analysis tools. The connection is practical: both help you identify malicious intent before the loss happens.

The right choice depends on the job. A security team may need enterprise deception with identity lures, lateral movement detection, and response workflows. A smaller team may want an open-source sensor that can sit unobtrusively on a branch network. A trader may only need a fast contract check before touching a fresh token launch or a memecoin running on hype.

Deception has moved well beyond lab experiments. Used well, it gives defenders and traders the same advantage: a cleaner signal, earlier warning, and fewer bad assumptions.

Honeypot.is is the fastest tool on this list to use, and for DeFi traders it is often the right first stop.

Paste in a token contract. You get a quick read on whether the token behaves like a classic crypto honeypot, meaning buyers can enter but may not be able to exit normally. That simple workflow is exactly why it is useful. When traders are moving fast, friction kills discipline. Honeypot.is reduces friction.

This is not traditional network honey pot software. It is smart-contract risk screening. But the underlying idea is the same: detect a trap before it costs you.

It works well when you are checking:

The clean result format helps newer traders, but it also helps experienced traders who want a fast reject signal.

What works:

What does not:

Practical rule: if a token fails on Honeypot.is, stop. If it passes, keep investigating.
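The rule above can be sketched as a small gate in Python. This is only an illustration: `ScanResult` is a hypothetical, simplified structure and not the real Honeypot.is response format, and the 10% sell-tax ceiling is an assumed threshold, not a recommendation from the tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScanResult:
    """Hypothetical, simplified scanner output (not a real API schema)."""
    is_honeypot: bool
    sell_tax_pct: Optional[float] = None  # None: the sell could not be simulated


def triage(result: ScanResult, max_sell_tax_pct: float = 10.0) -> str:
    """A failed check ends the review; a pass only earns further review."""
    if result.is_honeypot:
        return "reject"
    # Assumption: an unknown or very high sell tax is treated as a failure.
    if result.sell_tax_pct is None or result.sell_tax_pct > max_sell_tax_pct:
        return "reject"
    # Note there is no "buy" outcome: a clean scan is never a buy signal.
    return "investigate"


print(triage(ScanResult(is_honeypot=False, sell_tax_pct=2.5)))  # investigate
```

The function deliberately has no "safe" return value, which mirrors the point that a passing scan is a reason to keep looking, not a verdict.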

For traders who want a plain-English primer on the scam pattern itself, this guide on what a honeypot in crypto means is worth reading before you trust any single scanner.

Token Sniffer helps answer a different question than a basic honeypot checker. Instead of asking only, "Can I sell this token right now?", it helps you examine whether the contract is built in ways that put outside buyers at a disadvantage.

That distinction matters in DeFi. Plenty of bad trades are not classic sell traps. They come from risky ownership controls, copied scam code, ugly liquidity setups, or token mechanics that give the deployer too much room to change the rules after buyers enter.

I use Token Sniffer after the first pass, not as the first pass.

Its scoring model and red-flag layout are useful because they force a more disciplined read of the contract. A token can look clean on social, pick up early volume, and still carry the same structural warning signs that defenders watch for in traditional deception environments. The surface looks safe. The underlying setup says otherwise.

A practical workflow is simple:

That sequence works because each tool answers a different problem. Token Sniffer is strongest in the middle. It filters out tokens that are technically tradable but still shaped like scams.

The trade-off is interpretation. A decent score does not mean a token is safe. It means the scanner did not find enough known warning patterns to rate it worse. Scam teams know that too. They rename functions, copy cleaner contracts, spread holdings across wallets, or delay hostile changes until enough buyers are in.

Traditional honeypots and on-chain scams rely on the same human weakness. People trust what looks normal. DeFi traders who want the crypto version explained clearly should read this guide on what a honeypot in crypto is. The defensive lesson is the same in both worlds. Do not trust appearances without checking how the system is controlled.

Use Token Sniffer to pressure-test three things:

For newer traders, Token Sniffer teaches what bad contract patterns look like. For experienced traders, it is a fast filter that saves time before manual review. It does not replace reading wallet flows or watching the team. It helps you decide which tokens deserve that effort.

An attacker grabs a credential that should never be touched. A curious insider opens a file that nobody should know exists. A leaked cloud key gets used from the wrong place. Those are the moments Thinkst Canary is built for.

What makes Canary stand out is not feature sprawl. It is the speed from deployment to useful signal. Security teams can place believable decoys across endpoints, servers, shares, and cloud services, then add Canarytokens for files, URLs, credentials, and API secrets that should stay dormant unless someone is snooping or already inside.
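To make the "credentials that should stay dormant" idea concrete, here is a minimal Python sketch. It assumes authentication events have already been parsed into dictionaries with a `username` key. The decoy account names and the event shape are hypothetical, and this is not how Thinkst Canary or Canarytokens are implemented.

```python
from typing import Dict, Iterable, List

# Hypothetical decoy accounts that no real user or service should ever use.
DECOY_USERNAMES = {"svc-backup-legacy", "adm-finance-old"}


def find_honeytoken_hits(events: Iterable[Dict[str, str]]) -> List[Dict[str, str]]:
    """Return every auth event that references a decoy account.

    Whether the login succeeded is irrelevant: any attempt is the signal.
    """
    return [e for e in events if e.get("username", "").lower() in DECOY_USERNAMES]


events = [
    {"username": "alice", "src_ip": "10.0.4.17", "result": "success"},
    {"username": "SVC-BACKUP-LEGACY", "src_ip": "10.0.9.200", "result": "failure"},
]
for hit in find_honeytoken_hits(events):
    print("honeytoken touched from", hit["src_ip"])
```

Because the decoy names have no legitimate use, the check needs no baseline or tuning, which is where the low noise comes from.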

That operating model fits lean teams well. In practice, the hardest part of deception is rarely the alert itself. It is getting enough coverage without creating a maintenance project your team cannot sustain. Canary keeps the setup light and the alerts high-confidence, which is why a lot of defenders accept its opinionated design.

There is a useful lesson here for crypto traders too. On-chain honeypot scams hide the exit. Traditional deception flips that idea and plants an interaction that should never happen. The principle is the same. Build around intent, not appearance. In a SOC, that means decoy assets. In DeFi, that means testing whether a token, permission model, or liquidity path behaves the way it claims to before capital is at risk.

Canary trades flexibility for speed. That is usually the right trade if the goal is to catch real activity with minimal tuning. It is less attractive for teams that want to hand-build deceptive services, customize low-level behavior, or run large research-style environments.

Good deception should stay quiet until it matters, then be obvious. Thinkst gets that part right. It is not the cheapest way to blanket a very large environment, but it is one of the few products that security teams regularly keep running instead of abandoning after the pilot.

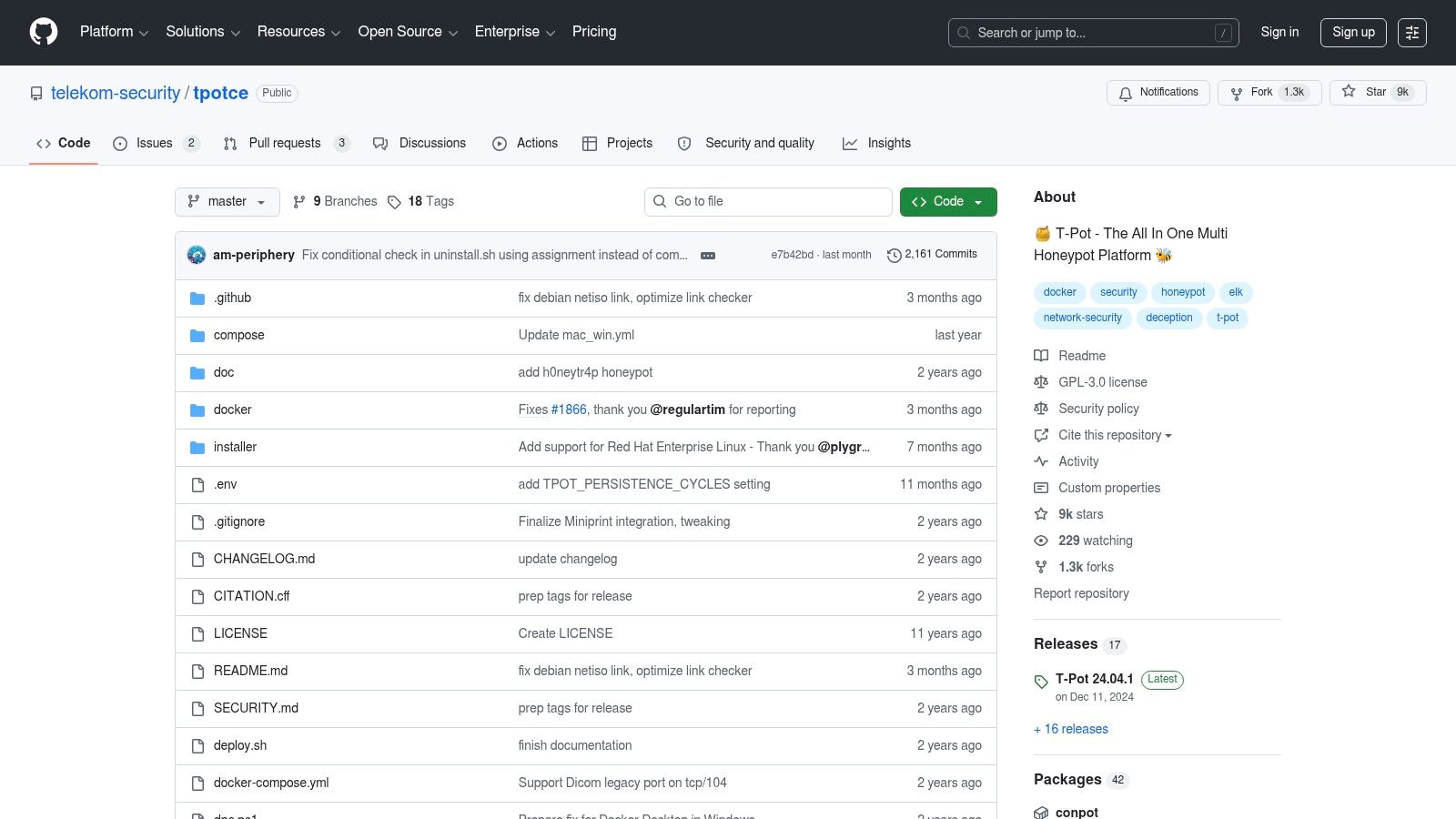

T-Pot is what I recommend to people who want to learn by collecting real attack noise.

It is not a polished enterprise deception platform. It is a bundled open-source environment that lets you stand up multiple honeypots, collect telemetry, and view it with built-in dashboards. For labs, training, and security research, that is a huge advantage.

The easiest mistake with honey pot software is to buy or deploy something you do not understand. T-Pot forces you to understand the moving parts.

You see the protocols, the logs, the dashboards, the traffic patterns, and the operational trade-offs. You also learn quickly that internet background radiation is relentless. Outpost24 ran 20 honeypots across North America, Asia, Europe, and Oceania and captured data from over 42 million attack attempts. That scale is the point. Attackers and botnets do not wait for your team to be ready.

T-Pot is not “install once and forget it” software. It needs care.

You still have to:

There is another hard truth. Advanced attackers often fingerprint low-interaction systems. Tools such as nmap, p0f, httprint, curl, netcat, Shodan, Censys, and custom scripts are commonly used to spot odd banners, stack quirks, and behavior mismatches, as outlined in Palo Alto Networks’ overview of honeypot detection and evasion tactics. T-Pot teaches that lesson quickly.
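One cheap self-check against the banner mismatch problem is to compare what your decoys advertise with what real neighbors on the same segment advertise. The Python sketch below assumes you have already collected service banners per host by some other means. The function name, the hosts, and the banner strings are all illustrative.

```python
from typing import Dict, List


def banner_outliers(banners: Dict[str, str], decoys: List[str]) -> List[str]:
    """Return decoy hosts whose banner matches no real host on the segment.

    A decoy with no recorded banner is also returned, since it cannot blend in.
    """
    real_banners = {b for host, b in banners.items() if host not in decoys}
    return [d for d in decoys if banners.get(d) not in real_banners]


segment = {
    "10.0.0.5": "SSH-2.0-OpenSSH_8.9p1",
    "10.0.0.6": "SSH-2.0-OpenSSH_8.9p1",
    "10.0.0.9": "SSH-2.0-OpenSSH_5.1p1",  # decoy left on an old default banner
}
print(banner_outliers(segment, decoys=["10.0.0.9"]))
```

A banner match is only one of the cues mentioned above. Stack quirks and behavior mismatches need separate checks, so an empty result here does not mean the decoy is convincing.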

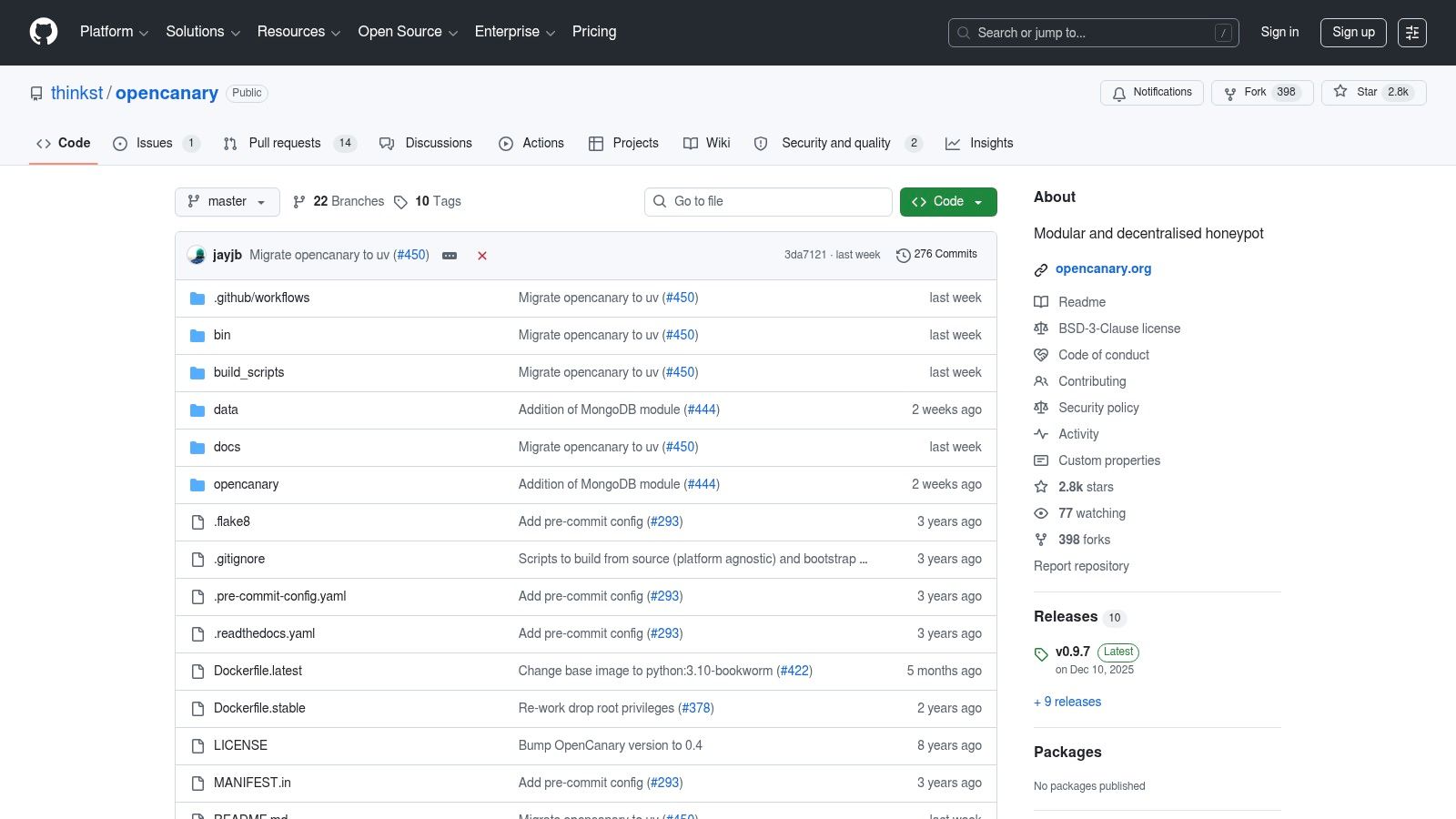

OpenCanary is the open-source answer for teams that want lightweight, useful deception without paying for a full commercial platform on day one.

It emulates multiple services, runs comfortably on small systems, and sends alerts in familiar ways. That combination makes it one of the best “just start somewhere” options in this category.

OpenCanary works best when you place simple decoys in places that should be quiet. A fake SSH service on the wrong subnet. An SMB service no one should touch. A low-cost sensor on a branch segment. The point is not realism at all costs. The point is catching interaction where legitimate interaction should not exist.
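The core idea, alerting on any interaction with a service that should be silent, fits in a few lines of Python. This is a teaching sketch, not OpenCanary's implementation: it only sends a fixed SSH-style banner (an assumed string) and does not speak the SSH protocol. `bind_decoy`, `serve_decoy`, and the alert fields are names invented for this example.

```python
import socket
from datetime import datetime, timezone
from typing import Callable, Dict, Optional


def bind_decoy(host: str, port: int) -> socket.socket:
    """Create a listening TCP socket for the decoy service."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind((host, port))
    sock.listen()
    return sock


def serve_decoy(
    sock: socket.socket,
    on_alert: Callable[[Dict[str, object]], None],
    banner: bytes = b"SSH-2.0-OpenSSH_8.9p1\r\n",
    max_connections: Optional[int] = None,
) -> None:
    """Alert on every connection; no connection to a decoy is legitimate."""
    handled = 0
    while max_connections is None or handled < max_connections:
        conn, (peer_ip, peer_port) = sock.accept()
        with conn:
            # Raise the alert first so a fast disconnect cannot suppress it.
            on_alert({
                "time": datetime.now(timezone.utc).isoformat(),
                "src_ip": peer_ip,
                "src_port": peer_port,
                "dst_port": sock.getsockname()[1],
            })
            try:
                conn.sendall(banner)  # look plausible, then hang up
            except OSError:
                pass  # peer already disconnected; the alert is what matters
        handled += 1
```

Calling `serve_decoy(bind_decoy("0.0.0.0", 2222), print)` would print one alert per connection until the process is stopped. Port 2222 is an arbitrary choice, and in practice `on_alert` would forward to whatever log pipeline or chat webhook the team already watches.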

That is why the low overhead matters. You can deploy it widely enough to be useful.

A few practical fits:

You are trading polish for control.

You do not get the same fleet management, administrative smoothness, or commercial support model you get from the full Canary product. You own the tuning, scaling, and correlation work. For many teams, that is acceptable. For understaffed teams, it can become shelfware.

The old research still matters here. A Naval Postgraduate School study built a 72-metric vector to measure honeypot realism and found a fake system statistically convincing against real ones, with mean standard error of 0.857 and SD 0.280 across 10 systems. The practical takeaway is simple: realism is measurable, and obvious shortcuts can expose your decoys. OpenCanary works best when you place it carefully and make it fit its surroundings.

Deploy fewer sensors well before you deploy many sensors badly.

OpenCanary is excellent for operators who like simple building blocks and do not mind doing the integration work themselves.

A common enterprise failure looks like this: an attacker gets one valid credential, starts probing east-west paths, touches a decoy share or service, and finally gives the SOC a signal that is hard to dismiss. Fortinet FortiDeceptor is built for that moment.

It fits best in organizations that already run a lot of Fortinet infrastructure, and that existing footprint usually decides whether it gets adopted. FortiDeceptor creates decoys and lures across IT, OT, and IoT environments, then ties those detections into the wider Fortinet control stack. If FortiGate, FortiAnalyzer, FortiSOAR, or related tools already anchor your workflow, the operational path is much clearer.

The product earns its keep when deception events trigger action instead of adding one more dashboard alert.

That usually shows up in a few practical ways:

That matters for crypto-connected businesses too. The same deception principle that catches an intruder touching a fake Windows share also helps traders and security teams spot on-chain traps. In both cases, the defender watches for interaction where legitimate behavior should be rare or nonexistent. DeFi users use honeypot checks to avoid scam contracts that let buyers in but block sellers. Enterprise teams use network and credential decoys to catch unauthorized movement. Different environment, same logic.

The trade-off is ecosystem dependence. FortiDeceptor is easier to justify when response, logging, and enforcement already sit inside Fortinet. In a mixed stack, integration work gets heavier and the product can feel more like a platform commitment than a standalone deception purchase.

Pricing also takes extra effort to evaluate because it is typically quote-driven. Teams comparing FortiDeceptor with lighter tools such as T-Pot or OpenCanary should be honest about the goal. If the goal is broad deception with automated containment inside an existing Fortinet estate, the premium may be reasonable. If the goal is to deploy decoys quickly and learn from the alerts, a smaller and less integrated option may be the cleaner choice.

My practical read is simple. FortiDeceptor works best for mature teams that want deception tied directly to enforcement, especially across complex enterprise networks. If you are outside the Fortinet ecosystem, buy it only after you map the response workflow end to end.

An attacker logs in with a real account, uses an approved device, and starts probing internal apps that look ordinary at first glance. Basic alerting can miss that sequence because nothing looks obviously malicious in isolation. Zscaler Deception is built for that kind of intrusion, where the giveaway is interaction with something that should never be touched.

That matters in enterprise networks and on-chain security for the same reason. Deception works when you place believable traps inside normal-looking workflows, then watch for the actor who cannot resist testing them. In DeFi, traders use honeypot analysis to avoid token contracts designed to trap capital. In enterprise environments, defenders use decoys, credentials, and planted services to catch an intruder who is already past the front door.

Zscaler’s advantage is context. A decoy hit means more when it is tied to user identity, device posture, private app access, and cloud workload telemetry inside the same control plane. Teams already running Zscaler can investigate faster because the deception signal arrives with policy and session context, not as a detached alert from a standalone sensor.

The strongest fit is usually straightforward:

There is a real trade-off. Zscaler Deception makes the most sense as part of a broader Zscaler deployment, not as an isolated buy for teams that only want a few decoys. Smaller teams can still benefit from deception, but a platform-centered approach often brings more setup, more vendor dependence, and more budget scrutiny than lighter tools.

My practical read is that Zscaler Deception is a strong option for enterprises that already trust Zscaler as a policy and visibility layer. If that foundation is not in place, test whether the extra context will improve response before you commit.

SentinelOne Singularity Identity inherited a lot of its deception strength from Attivo Networks, and that history still shows in the product’s identity-first approach.

This is less about dropping a fake Linux box on a subnet and more about placing deceptive credentials, AD artifacts, and breadcrumbs where attackers doing lateral movement are likely to touch them.

A lot of attacks become dangerous only after the first foothold. Serious damage often comes during credential harvesting, privilege escalation, and movement between systems. Identity-focused deception is built for that stage.

If you already run SentinelOne XDR, the operational advantage is obvious:

That kind of consolidation matters more than feature checklists in practice.

The product naming and packaging history can still create confusion. Teams evaluating it need to verify exactly which modules they are buying and how the deception capabilities are surfaced in the current platform.

Also, if your goal is broad traditional network emulation, other products may feel more direct. SentinelOne’s strength here is identity-centric detection.

This category is also where AI-adaptive deception gets discussed a lot and demonstrated less. One industry article describes AI-generated honeypots as systems that learn from interactions and adapt in real time, but also notes the lack of public independent benchmarks, despite vendor claims of 50-70% better telemetry. I would treat that as directional, not proven. Practically, clean deployment and strong placement still beat buzzwords.

SentinelOne Deception is worth attention if identity abuse is your main concern and your XDR program already runs there.

Acalvio is built for organizations that want deception at scale, not just a few decoys sprinkled around the network.

Its pitch is autonomous generation and placement of deceptive assets across endpoints, data centers, and cloud environments, with identity lures layered in. That is attractive in large, distributed estates where manual placement does not scale.

Acalvio is strongest when the environment is too large for handcrafted deception to keep up.

That includes:

The larger and more dynamic the environment, the more useful automation becomes.

Products in this tier need a mature operating model behind them. If your team does not have good asset understanding, identity hygiene, and incident handling discipline, an advanced deception platform can become a fancy alert generator.

I would also separate automation from realism. Automatically placing decoys is helpful. Automatically placing convincing decoys is harder. Attackers still test what they touch.

That lesson matters in crypto too. DeFi traders often focus on token-level checks and ignore behavioral context. The same mindset behind Acalvio's ShadowPlex platform applies on-chain: one signal is rarely enough. You want correlated clues. Contract behavior, wallet clustering, liquidity movement, deployer patterns, and timing all matter together. That is how deception thinking becomes trading defense.
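As a rough illustration of "one signal is rarely enough", the Python sketch below escalates only when several independent clues agree. The signal names follow the list in the paragraph above. The two-signal threshold and the three output labels are assumptions made for the example, not a tested scoring model.

```python
from typing import Dict

SIGNALS = (
    "contract_behavior",
    "wallet_clustering",
    "liquidity_movement",
    "deployer_pattern",
    "timing",
)


def correlated_risk(flags: Dict[str, bool], min_signals: int = 2) -> str:
    """Classify a token by how many independent warning signals are raised."""
    unknown = set(flags) - set(SIGNALS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    raised = [name for name in SIGNALS if flags.get(name, False)]
    if len(raised) >= min_signals:
        return "high"  # several independent clues agree
    return "watch" if raised else "no-signal"


print(correlated_risk({"wallet_clustering": True, "timing": True}))  # high
print(correlated_risk({"liquidity_movement": True}))                 # watch
```

Counting signals equally is the simplest possible design. Weighting them, or requiring that they come from different data sources, would be natural refinements.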

Acalvio is not the entry-level choice. It is for teams that already know why they want deception and have enough internal maturity to use the telemetry well.

KFSensor is the old-school practical option on this list.

It is Windows-centric, straightforward, and useful for small deployments where you want a simple honeypot without standing up a full deception platform. That makes it a reasonable fit for SMBs, branch offices, labs, and teams that still operate a lot of Windows infrastructure and want a single-server answer.

Not every environment needs distributed deception, identity breadcrumbs, or cloud-native policy hooks. Sometimes you just want a Windows-friendly decoy service set with central logging and manageable upkeep.

KFSensor works in those cases because it focuses on:

That combination still has value, especially for teams with limited staff.

The product feels more traditional than modern platform competitors. That is not always a flaw. It just means you should buy it for the right reason.

Choose KFSensor if:

Skip it if:

KFSensor is not the most advanced tool here. It is one of the most approachable. In many environments, approachable beats ambitious software that never gets deployed properly.

| Product | Core features | UX / Quality (★) | USP (✨🏆) | Target audience (👥) | Pricing / Value (💰) |
|---|---|---|---|---|---|
| Honeypot.is | Instant static contract scan (ERC-20/BEP-20), buy/sell tax, liquidity link | ★★★★ | ✨ Quick, binary honeypot check, 🏆 first-line defense | 👥 Retail traders & token discoverers | 💰 Free basic checks |
| Token Sniffer | Automated 0–100 audit score, ownership/liquidity checks, scam DB | ★★★★ | ✨ Nuanced risk scoring & red-flag highlights, 🏆 educates users | 👥 Traders & on-chain researchers | 💰 Free web tool |
| Thinkst Canary | Hardware/VM/cloud canaries, Canarytokens, fleet console | ★★★★★ | ✨ High-signal alerts with low noise, 🏆 enterprise-grade deception | 👥 SOCs, lean security teams | 💰 Commercial (paid) |
| T-Pot (Telekom Security) | Multi-honeypot distro, ELK dashboards, Dockerized stack | ★★★★ | ✨ Turnkey research bundle for global telemetry, 🏆 community-driven | 👥 Researchers, labs, hobbyists | 💰 Free (open source; infra costs) |
| OpenCanary | Lightweight multi-protocol honeypot, low footprint, extensible | ★★★★ | ✨ Raspberry Pi/DIY friendly, 🏆 easy to customize | 👥 SMEs, labs, DIY security projects | 💰 Free (open source) |
| Fortinet FortiDeceptor | Deception VMs/lures, Fortinet Fabric integration, playbooks | ★★★★ | ✨ Automated isolation via Fabric, 🏆 strong IR automation | 👥 Fortinet-centric enterprises | 💰 Quote-based enterprise |
| Zscaler Deception | Cloud decoys, AD/cloud/endpoint coverage, Zero Trust tie-in | ★★★★ | ✨ Identity-aware deception in Zero Trust, 🏆 cloud-native approach | 👥 Cloud-first orgs on Zscaler | 💰 Commercial (undisclosed) |
| SentinelOne Deception | Identity lures & AD decoys, Singularity XDR integration | ★★★★ | ✨ Identity-centric detections into XDR, 🏆 consolidated alerts/response | 👥 SentinelOne XDR customers | 💰 Module/quote pricing |
| Acalvio ShadowPlex | AI-driven decoy creation, AD deception, threat intel enrichment | ★★★★ | ✨ Autonomous, scalable deception & intel, 🏆 analyst-validated | 👥 Large enterprises & MSSPs | 💰 Enterprise pricing |
| KFSensor | Windows service emulation, GUI, central logging, lightweight | ★★★ | ✨ Simple Windows honeypot for SMBs, 🏆 low maintenance | 👥 SMBs, branch offices, labs | 💰 Affordable commercial license |

The best honey pot software changes how you think about defense. Instead of asking only how to block attackers, you also ask how to expose them when they move, probe, and test trust boundaries. That shift matters because many attacks are quiet until they are not. By the time traditional alerts become obvious, the attacker may already have credentials, internal visibility, or a path to your high-value systems.

Deception helps earlier in the chain.

For security teams, the practical starting point depends on where you are today. If you want hands-on understanding, T-Pot and OpenCanary are strong first deployments. They teach you placement, logging, and realism fast. If you run a lean team and want high-signal alerts without much engineering overhead, Thinkst Canary is one of the easiest products to justify. If your organization already lives inside Fortinet, Zscaler, or SentinelOne, the right choice is often the deception layer that plugs into the controls and workflows you already trust. And if you are securing a large hybrid environment with enough maturity to operationalize richer telemetry, Acalvio is built for that level of ambition.

The biggest mistake is treating deception like a magic sensor. It is not. Placement matters. Isolation matters. Alert routing matters. So does realism. Low-effort deployments often get touched by noisy scanners, but skilled operators may fingerprint them quickly if they do not fit the environment. Good decoys look boring, local, and plausible.

For DeFi traders, the same core lesson applies in reverse. You are the one trying not to step into someone else’s trap. A token that looks active, liquid, and socially validated can still be engineered to punish exits or mislead buyers. That is why tools like Honeypot.is and Token Sniffer belong in a trading workflow, not as final truth, but as fast filters. They help you reject obvious danger before you spend more time on deeper on-chain analysis.

In this area, traditional cybersecurity thinking and on-chain trading research overlap. In both worlds, attackers exploit trust in appearances. Defenders do better when they test assumptions. On a network, that means planting believable lures. On-chain, it means challenging contract behavior, deployer intent, and wallet activity before committing capital.

If your work touches both domains, keep the workflows connected. A security team can use honey pot software to study attacker behavior across cloud, identity, and internal systems. A trader or analyst can use token scanners and wallet analysis to spot contract traps and suspicious participant behavior before entry. Those are different tools, but the discipline is the same: verify what looks safe.

Wallet Finder.ai can fit naturally into that second layer. Its token and wallet research views help traders inspect on-chain behavior around opportunities that may have already passed a basic honeypot check. That is useful because avoiding scams in DeFi rarely comes down to one signal.

Start simple. Place one decoy well. Run one open-source sensor where traffic should be quiet. Check one token before you ape into it. The tools in this list work best when they support a habit of skepticism, not when they replace it.

If you want a second layer after basic token checks, Wallet Finder.ai helps you research wallets, tokens, and trades in one place, including honeypot risk context for trending tokens, so you can compare contract-level warnings with actual on-chain behavior before acting.