Wallet Finder

March 4, 2026

Token-specific filtering is a way to analyze blockchain tokens using specific criteria like trading volume, wallet activity, and transaction patterns. This helps traders find opportunities faster without manually tracking thousands of tokens. This guide looks at four tools for the job: Wallet Finder.ai, Altfins, Arkham Intelligence, and CoinGecko.

These tools cater to different needs. Wallet Finder.ai is excellent for tracking successful wallets, while Altfins is perfect for chart enthusiasts. Arkham Intelligence is best for whale tracking, and CoinGecko is a good starting point for market data.

Wallet Finder.ai steps in to simplify token-specific filtering by analyzing wallet performance and trading patterns. It helps users uncover profitable opportunities within the blockchain space by focusing on real trading data rather than just market sentiment.

The platform is designed to identify wallets with a track record of consistent profits. Users can filter wallets based on metrics like profit/loss, winning streaks, and overall performance consistency. By sorting through historical data, users can spot effective trading patterns.

Wallet Finder.ai goes a step further by analyzing key trading details like entry and exit points, position sizes, and timing. These insights are paired with token metrics such as trading volume and wallet interactions, giving users a clear picture of how successful trades are executed.

Visual tools like graphs and charts showcase wallet performance trends, and advanced filtering options allow users to fine-tune their searches based on specific metrics.

To keep users informed, Wallet Finder.ai offers real-time alerts through Telegram. These notifications ensure users never miss significant moves made by wallets on their watchlist.

For those who prefer deeper analysis, the platform supports data exports in multiple formats. This feature makes it easy to review information offline or share insights with others. Together, real-time alerts and exportable data create a seamless system for making well-informed decisions.

Users can connect their own wallets to monitor performance and compare results with top traders. A customizable watchlist helps keep track of specific wallets or activities, with tailored alerts to highlight relevant actions.

This feature not only helps users identify areas for improvement but also allows them to track their progress over time.

Wallet Finder.ai operates on a freemium model. The free version offers tools for personal wallet analysis and exploration, while premium access unlocks advanced features like full wallet statistics, enhanced filtering options, and the Discover Trades tool.

In addition to wallet analysis platforms, there are several specialized tools that focus on token-specific filtering. These tools each bring their own strengths, helping traders and analysts spot opportunities using different methods and perspectives. For a data-driven edge, explore Top 7 Models for Crypto Price Prediction Using Sentiment to see how advanced analytics and emotional trends combine to forecast market movements.

Altfins leans heavily on technical analysis, offering over 100 preset filters and custom scans with more than 120 technical indicators, such as SMA, EMA, RSI, and MACD. Its AI-powered chart pattern recognition engine is designed to detect 26 trading patterns across multiple timeframes - ranging from 15 minutes to 1 day - with reported success rates as high as 78%.

The platform also provides curated trade setups for the top 65 cryptocurrencies, focusing on trends, momentum indicators, volume patterns, and support/resistance levels. A "Signals Summary" feature highlights coins that align with bullish or bearish patterns. Users can set alerts for price changes, indicators, and patterns, with notifications sent via mobile, email, or the website. Additionally, Altfins tracks cross-exchange portfolios and on-chain data, including asset movements, user activity, and Total Value Locked (TVL).

Arkham Intelligence takes a different approach by using its ULTRA AI engine to link blockchain addresses to real-world entities like funds, exchanges, or individuals. This entity-based method uncovers hidden transaction patterns and provides deeper insights into token flows. The platform updates data every 10 seconds across Ethereum, BNB Chain, Polygon, and other EVM-compatible networks, ensuring near real-time tracking of significant activity.

Arkham’s Visualizer tool maps wallet relationships and fund flows, while its Alerts feature notifies users of large transactions or movements by specific entities, making it particularly useful for tracking whale activity. A standout feature is the Intel Exchange, which allows users to trade cryptocurrency intelligence. ARKM tokens are used to facilitate bounties, auctions, and data rewards. The platform also includes an AI chatbot, Oracle, which simplifies complex on-chain data with natural-language queries.

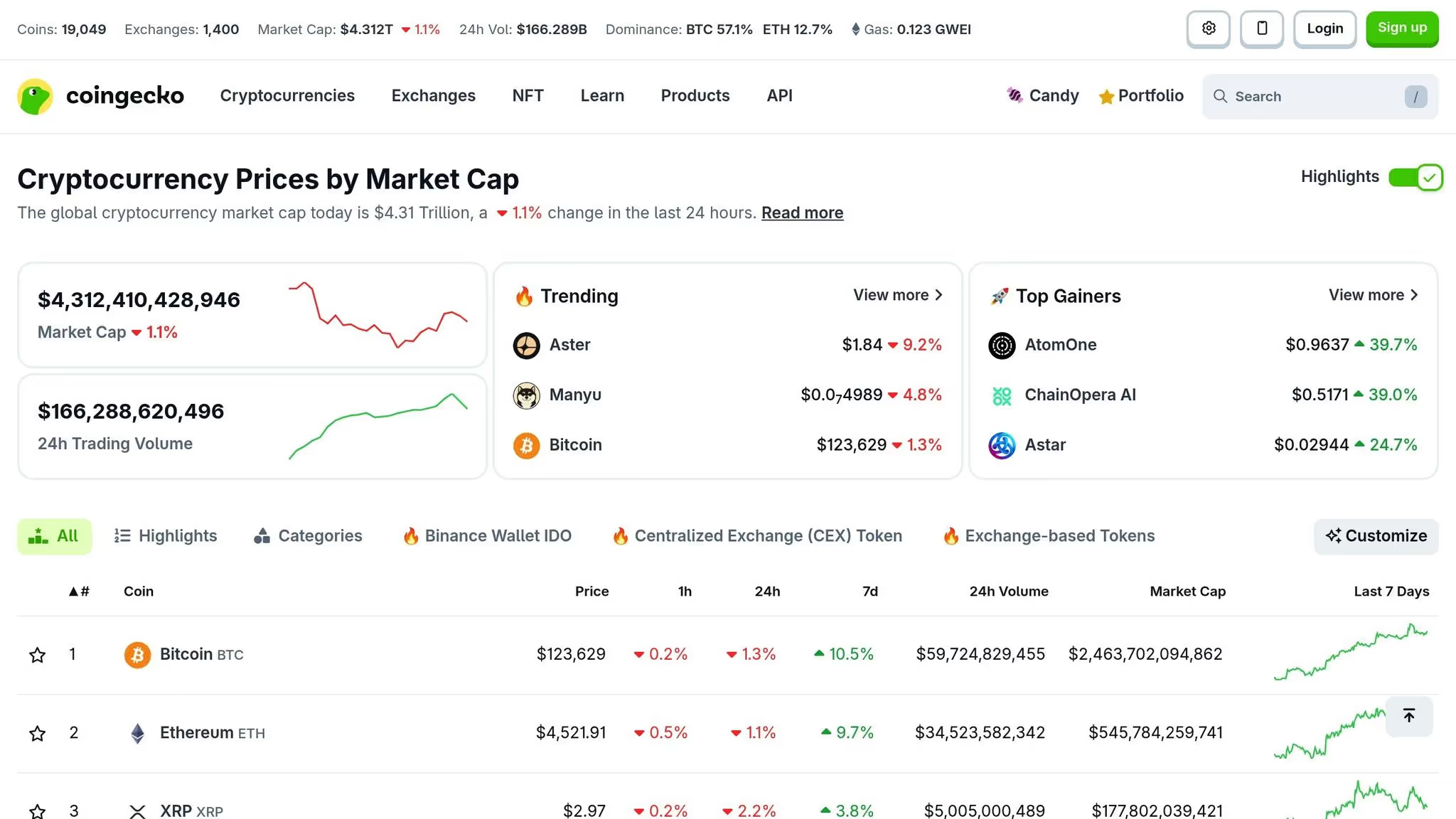

CoinGecko is well-known for its market data tools, including its token screener. This feature allows users to filter tokens based on metrics like market capitalization, trading volume, and price changes. While details about the screener’s full capabilities are limited, its broad market coverage makes it a go-to resource for traders looking for an overview of token performance.

Together, these tools provide a variety of approaches to token filtering, each catering to different needs and strategies. A detailed comparison of their features follows later in this guide, after a look at how filter logic itself is built.

The platform overviews above cover headline capabilities, but not how filtering logic itself is constructed, combined, and optimized for different screening objectives. Filter logic architecture determines whether a screening session surfaces the specific opportunities a trader is hunting or returns an unmanageable universe of false positives, and the difference between well-constructed and poorly constructed filter sets is often more consequential for trading outcomes than the choice of platform itself. Understanding how filtering systems process multi-condition queries, apply threshold logic, and sequence filter operations gives traders the technical foundation to build screening workflows that are reproducible, adjustable, and meaningfully faster than ad hoc searches.

The foundational concept in token filtering logic is the distinction between inclusion filters and exclusion filters, which appear identical in surface-level UI implementations but serve opposite functions in screening architecture. Inclusion filters define the minimum conditions a token must satisfy to appear in results, narrowing the universe downward. Exclusion filters remove tokens that meet specific disqualifying criteria, and they are most valuable when applied early in a multi-step screening sequence to eliminate noise before more computationally intensive inclusion filters run. A screening workflow that first excludes all tokens below a minimum liquidity threshold, then excludes all tokens with honeypot contract characteristics, and then applies positive signal inclusion filters runs substantially faster and returns cleaner results than a workflow that applies all filters simultaneously without sequencing, because the early exclusion steps dramatically reduce the candidate set before expensive positive-signal computations execute.
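The sequencing described above can be sketched in a few lines of Python. This is a minimal illustration, not any platform's actual pipeline: the `Token` fields, the `smart_money_score` signal, the 0.7 cutoff, and the $50,000 liquidity floor are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass

@dataclass
class Token:
    symbol: str
    liquidity_usd: float
    is_honeypot: bool          # assumed to come from a prior contract check
    smart_money_score: float   # hypothetical precomputed signal in [0, 1]

def expensive_signal(token: Token) -> bool:
    """Stand-in for a costly positive-signal computation."""
    return token.smart_money_score >= 0.7

def screen(tokens, min_liquidity_usd=50_000):
    # Step 1: cheap exclusion, drop illiquid tokens first.
    liquid = [t for t in tokens if t.liquidity_usd >= min_liquidity_usd]
    # Step 2: cheap exclusion, drop flagged honeypots.
    safe = [t for t in liquid if not t.is_honeypot]
    # Step 3: the expensive inclusion filter runs only on the survivors.
    return [t for t in safe if expensive_signal(t)]

tokens = [
    Token("AAA", 120_000, False, 0.82),
    Token("BBB", 8_000, False, 0.95),    # removed in step 1
    Token("CCC", 300_000, True, 0.90),   # removed in step 2
    Token("DDD", 75_000, False, 0.40),   # fails step 3
]
result = [t.symbol for t in screen(tokens)]
print(result)
```

In this toy universe the expensive check runs on two tokens instead of four. On a real universe of thousands of tokens, the saving from running the cheap exclusions first is proportionally larger.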

Boolean filter composition allows multiple filter conditions to be combined using AND, OR, and NOT logic, enabling precise specification of complex screening objectives that single-metric filters cannot express. A filter requiring that a token meet condition A AND condition B returns only tokens satisfying both conditions simultaneously, which is appropriate when both criteria are necessary. A filter requiring condition A OR condition B returns tokens satisfying either criterion, which is appropriate when either condition is sufficient evidence of the target characteristic. NOT inverts a condition, which is how exclusion criteria are expressed. Boolean composition is most powerful in multi-signal confirmation workflows: requiring that a token show positive smart money accumulation AND sentiment velocity above threshold AND liquidity above a floor value produces a much higher signal-to-noise ratio than any single condition alone. If the three conditions are roughly independent, the probability of all of them aligning by noise is the product of the individual probabilities, which is far lower than the probability of any single condition arising by noise.
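One way to express this composition in code is with small predicate combinators. The sketch below is illustrative only: the field names (`smart_money_netflow_usd`, `sentiment_velocity`, `liquidity_usd`), the thresholds, and the 10% per-condition noise rate are assumptions, and the final multiplication holds only if the conditions are independent.

```python
from typing import Callable, Dict

Token = Dict[str, float]
Predicate = Callable[[Token], bool]

def all_of(*preds: Predicate) -> Predicate:   # AND
    return lambda t: all(p(t) for p in preds)

def any_of(*preds: Predicate) -> Predicate:   # OR
    return lambda t: any(p(t) for p in preds)

def negate(pred: Predicate) -> Predicate:     # NOT
    return lambda t: not pred(t)

# Hypothetical single-metric conditions.
accumulating = lambda t: t["smart_money_netflow_usd"] > 0
hot_sentiment = lambda t: t["sentiment_velocity"] > 1.5
liquid = lambda t: t["liquidity_usd"] >= 50_000

confirmed = all_of(accumulating, hot_sentiment, liquid)
either_signal = any_of(accumulating, hot_sentiment)

tokens = {
    "AAA": {"smart_money_netflow_usd": 40_000, "sentiment_velocity": 2.1, "liquidity_usd": 90_000},
    "BBB": {"smart_money_netflow_usd": -5_000, "sentiment_velocity": 3.0, "liquidity_usd": 200_000},
    "CCC": {"smart_money_netflow_usd": 12_000, "sentiment_velocity": 0.4, "liquidity_usd": 10_000},
}
strict = [s for s, t in tokens.items() if confirmed(t)]
loose = [s for s, t in tokens.items() if either_signal(t)]
illiquid = [s for s, t in tokens.items() if negate(liquid)(t)]

# If each condition fires by noise 10% of the time, independently,
# all three fire together by noise about 0.1% of the time.
p_noise = 0.10
joint = p_noise ** 3
print(strict, loose, illiquid, joint)
```

The AND filter keeps only the token where all three signals line up, while the OR filter keeps every token with at least one signal.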

Static threshold filtering applies fixed numeric cutoffs to screening criteria, such as requiring minimum 24-hour trading volume above a fixed dollar figure or minimum wallet count above a fixed number. Static thresholds are straightforward to implement and audit but suffer from a fundamental limitation: the appropriate threshold for a given filter criterion changes with market conditions. A volume threshold of $500,000 daily trading volume appropriately filters out low-liquidity tokens during normal market conditions but incorrectly excludes many legitimate early-stage tokens during broad market downturns when volume contracts across the entire token universe. Static thresholds calibrated during high-volume periods become overly restrictive during contractions, and thresholds calibrated during contractions become insufficiently selective during expansions.

Percentile-based dynamic thresholds address this by setting filter cutoffs relative to the current distribution of the metric across all tokens rather than at absolute values. Requiring that a token rank in the top 20% for 24-hour volume within its market cap tier (that is, at or above the 80th percentile) filters for relatively high-volume tokens regardless of the absolute volume levels prevailing at the time of the screen, because the threshold automatically adjusts as the overall market volume environment changes. Implementing percentile thresholds requires platforms that compute and expose distribution statistics across the full token universe in real time, which is a more demanding data infrastructure requirement than static threshold filtering but produces more consistent and meaningful results across varying market conditions.
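The contrast between a static cutoff and a tier-relative one can be shown with a toy example. Everything here is hypothetical: a single five-token tier, and a "downturn" modeled by scaling every volume to 30% of its normal level.

```python
from collections import defaultdict

def top_percent_by_tier(tokens, top_pct=20):
    """Keep tokens whose 24h volume ranks in the top `top_pct` percent of their tier."""
    tiers = defaultdict(list)
    for t in tokens:
        tiers[t["tier"]].append(t)
    kept = []
    for members in tiers.values():
        n = len(members)
        for t in members:
            # Count tier peers with strictly higher volume (integer arithmetic only).
            higher = sum(1 for o in members if o["volume_24h"] > t["volume_24h"])
            if higher * 100 < top_pct * n:
                kept.append(t["symbol"])
    return sorted(kept)

def static_filter(tokens, min_volume=500_000):
    return sorted(t["symbol"] for t in tokens if t["volume_24h"] >= min_volume)

normal = [
    {"symbol": "A", "tier": "small", "volume_24h": 900_000},
    {"symbol": "B", "tier": "small", "volume_24h": 400_000},
    {"symbol": "C", "tier": "small", "volume_24h": 250_000},
    {"symbol": "D", "tier": "small", "volume_24h": 100_000},
    {"symbol": "E", "tier": "small", "volume_24h": 50_000},
]
# Hypothetical downturn: every token trades at 30% of its normal volume.
downturn = [{**t, "volume_24h": t["volume_24h"] * 0.3} for t in normal]

print(static_filter(normal), static_filter(downturn))
print(top_percent_by_tier(normal), top_percent_by_tier(downturn))
```

The static $500,000 filter returns token A in the normal regime and nothing in the downturn, while the percentile filter returns A in both, because relative rank is unchanged when the whole tier contracts together.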

Sensitivity analysis for filter threshold selection tests how screening results change as individual threshold values are adjusted across a range around the initial selection, which reveals whether a chosen threshold is robust to small variations or whether minor adjustments dramatically change the result set. A volume threshold whose results are stable when the value is moved plus or minus 20% indicates a robust calibration in a region where genuine signal separates from noise. A threshold whose results change dramatically with small adjustments indicates a fragile calibration at a boundary where noise and signal are mixed, which should prompt recalibration at a more stable threshold value. Running sensitivity analysis on each major filter in a multi-condition screening workflow before using the results to inform trading decisions takes minimal additional effort and substantially reduces the risk of acting on results that are artifacts of threshold selection rather than genuine market signal.
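A simple version of this check sweeps the threshold across plus or minus 20% and measures how much the result set overlaps with the baseline. The sketch below uses Jaccard similarity as the overlap measure, which is one reasonable choice rather than a standard prescribed by any platform. The volume figures are made up.

```python
def passing(volumes, threshold):
    """Symbols whose volume meets the threshold."""
    return {s for s, v in volumes.items() if v >= threshold}

def jaccard(a, b):
    """Overlap of two sets: 1.0 means identical, 0.0 means disjoint."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def sensitivity(volumes, base, span=0.20, steps=5):
    """Similarity to the baseline result set at each swept threshold."""
    baseline = passing(volumes, base)
    rows = []
    for i in range(steps):
        factor = 1 - span + (2 * span) * i / (steps - 1)
        rows.append((round(factor, 2), jaccard(baseline, passing(volumes, base * factor))))
    return rows

volumes = {
    "A": 2_000_000, "B": 1_500_000,
    "C": 520_000, "D": 510_000, "E": 490_000, "F": 480_000,
    "G": 100_000,
}

fragile = sensitivity(volumes, base=500_000)    # cluster of tokens sits on the cutoff
robust = sensitivity(volumes, base=1_000_000)   # cutoff sits in an empty gap
print(fragile)
print(robust)
```

With the cutoff at $500,000, four tokens sit within a few percent of the threshold, so the result set changes at every step of the sweep. At $1,000,000 the cutoff falls in a gap in the distribution and the result set does not change at all.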

Multi-timeframe filter stacking applies the same filtering criteria across multiple time windows simultaneously, requiring that a signal be present consistently across timeframes rather than in a single period. This approach directly addresses one of the most common failure modes in token screening: a token satisfies a filter criterion in one time window due to a single anomalous event rather than a sustained pattern. Requiring that a volume filter condition be satisfied in both the 1-hour and 24-hour windows filters out tokens experiencing brief volume spikes that are not supported by sustained elevated activity. Requiring that a wallet accumulation signal be present in both the 4-hour and 48-hour windows distinguishes genuine progressive accumulation from a single large transaction that inflates the short-term signal without indicating sustained interest.

The number of timeframe layers to stack depends on the target holding period of the trading strategy. Very short-term strategies targeting 30-minute to 4-hour holding periods benefit from 2-layer stacking across the 5-minute and 1-hour timeframes, providing noise reduction without introducing the lag that longer timeframe confirmation would impose. Medium-term strategies targeting 1-day to 7-day holding periods benefit from 3-layer stacking across the 1-hour, 12-hour, and 48-hour timeframes, ensuring that signals are supported at the granularity that matters for entry timing as well as the duration relevant to the holding period. Long-term position building benefits from stacking across 7-day, 30-day, and 90-day windows, which identifies tokens showing sustained directional momentum rather than cyclical patterns that repeat and reverse within the holding horizon.
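The stacking rules above reduce to an AND across the windows assigned to each holding period. In this sketch the window sets mirror the ones named in the text, while the strategy labels and the per-window boolean signals are assumed inputs, for example the output of a volume filter evaluated separately on each window.

```python
# Window sets taken from the holding-period guidance above.
STACKS = {
    "short": ("5m", "1h"),            # 30-minute to 4-hour holds
    "medium": ("1h", "12h", "48h"),   # 1-day to 7-day holds
    "long": ("7d", "30d", "90d"),     # long-term position building
}

def confirmed(signal_by_window, strategy):
    """True only if the signal is present in every window of the stack."""
    return all(signal_by_window.get(w, False) for w in STACKS[strategy])

# Hypothetical filter results per window for two tokens.
brief_spike = {"1h": True, "12h": False, "48h": False}
sustained = {"1h": True, "12h": True, "48h": True}

print(confirmed(brief_spike, "medium"))
print(confirmed(sustained, "medium"))
```

A missing window is treated as a failed condition here, which is the conservative choice: a token without enough history for the longest window is not confirmed.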

Signal confirmation windows define the period over which a filter result is considered valid before requiring reconfirmation. Token conditions change continuously, and a filter result generated six hours ago may no longer reflect current conditions even when the underlying data has not been manually refreshed. Building confirmation window expiration into screening workflows ensures that trading decisions are based on filter results within a specified freshness threshold, and that stale results are automatically flagged for reconfirmation before capital allocation. For fast-moving meme tokens and newly launched assets, confirmation window expiration of 15 to 60 minutes is appropriate. For established tokens with slower-moving fundamentals, confirmation windows of 4 to 24 hours may be acceptable. Matching confirmation window length to the expected volatility of the target token category prevents the operational risk of acting on filter results that are technically current but practically stale relative to the speed of market movement in that segment.
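Expiration can be enforced by stamping each filter result with the time it was generated and comparing its age to a per-category limit. The 30-minute and 12-hour limits below are picks from within the ranges given above, and the category names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Illustrative limits chosen from the 15-60 minute and 4-24 hour ranges.
MAX_AGE = {
    "meme": timedelta(minutes=30),
    "established": timedelta(hours=12),
}

def needs_reconfirmation(generated_at, category, now):
    """True when a filter result is older than its category's confirmation window."""
    return now - generated_at > MAX_AGE[category]

now = datetime(2026, 3, 4, 12, 0, tzinfo=timezone.utc)
six_hours_ago = now - timedelta(hours=6)

meme_stale = needs_reconfirmation(six_hours_ago, "meme", now)
established_stale = needs_reconfirmation(six_hours_ago, "established", now)
print(meme_stale, established_stale)
```

The same six-hour-old result is stale for a meme token but still usable for an established one, which is the point of matching the window to the segment's volatility.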

When it comes to filtering tokens, picking the right tool can make a big difference. The best choice depends on your trading approach, as each tool has its own strengths. Here is a breakdown to help you decide.

Wallet Finder.ai specializes in wallet performance tracking across multiple DeFi networks. Its core filtering criteria cover profitability, win streaks, and consistency. It delivers Telegram alerts for wallet activities, supports blockchain data export, and allows users to connect and track personal wallets. Visual graphs and trading strategy analysis round out the feature set, along with custom watchlists and masked wallet exploration. It operates on a freemium model with Basic and Premium tiers, making it best suited for DeFi traders tracking profitable wallets.

Altfins is built around technical analysis and covers multiple cryptocurrencies. Its filtering draws on comprehensive technical indicators, with real-time alerts available via multiple channels. The platform supports portfolio tracking and uses AI-powered pattern recognition across multiple trading patterns and timeframes. Pricing is not publicly specified, and it is best suited for technical analysis enthusiasts.

Arkham Intelligence focuses on entity-based blockchain analysis across Ethereum and EVM-compatible networks. Its filtering surfaces entity relationships and whale tracking data, with frequent data updates. Transaction mapping and advanced analytics and visualization tools are core features, alongside an integrated intelligence exchange and chat-based analytics powered by its Oracle AI. Pricing is token-based using ARKM, making it the go-to for institutional researchers and whale watchers.

CoinGecko Screener provides general market data filtering with broad market coverage. Its filtering criteria center on market cap, volume, and price changes, delivered through standard market data updates. Export options are limited and portfolio features are basic, with a market overview analytics focus. It is available free with premium options and is best suited for general market screening and initial token discovery.

Wallet Finder.ai is built around wallet performance. It tracks profitability, win streaks, and consistency while delivering real-time alerts for wallet activities, making it the strongest choice for DeFi traders who want to monitor and replicate profitable wallets closely.

Altfins centers on technical analysis, offering AI-powered pattern recognition across a wide range of chart setups and indicators. It is the natural fit for traders who rely on technical signals rather than on-chain behavioral data to drive decisions.

Arkham Intelligence is designed for entity tracking and whale monitoring. Its AI engine and frequent data updates reveal large-scale market movements and fund flows that are invisible to standard on-chain tools, making it the preferred platform for institutional researchers.

CoinGecko Screener is the most accessible starting point for initial token research. It provides a broad market overview using market cap, volume, and price change metrics, offering a straightforward foundation before moving to more specialized tools.

Each platform occupies a distinct niche covering wallet performance, technical indicators, entity tracking, and market fundamentals respectively. Depending on your strategy, using one as a primary tool and layering another for confirmation can provide a more complete picture than any single platform alone.

Selecting the ideal token filtering tool depends on your trading style and what you aim to achieve. While we've covered an overview of tools, the right choice comes down to your specific needs. For example, Wallet Finder.ai stands out for its advanced wallet tracking features, which we discussed earlier.

Start by identifying your main goal. If detailed wallet tracking is your priority, Wallet Finder.ai might be a great fit. It filters wallets based on factors like profitability, consistency, and win streaks, and even sends real-time alerts when key wallets make moves. This targeted approach can be more precise than general market screening tools.

Next, think about the depth of analysis you require. Some tools focus on technical analysis, offering features like AI-driven pattern recognition for chart trends. Others prioritize broader market indicators or tracking large-scale activity. Decide whether you need in-depth technical signals or a wider market overview to guide your trades.

Consider your budget and how actively you plan to use the tool. Wallet Finder.ai, for instance, offers a freemium model, allowing you to analyze your wallet's performance and explore masked DeFi wallets without spending a dime upfront. Many tools provide free basic features, which are perfect for beginners or casual users who might later opt for premium upgrades.

You’ll also want to check if the tool supports the blockchain networks you’re trading on. For instance, Wallet Finder.ai focuses on popular DeFi networks like Ethereum and other EVM-compatible platforms, ensuring its insights align with your trading environment.

Look for tools with strong data export options. If you need to perform custom analyses or combine data from multiple sources, this feature is essential. Wallet Finder.ai, for example, allows you to export blockchain data, giving you the flexibility to dig deeper offline.

Sometimes, using multiple tools can provide a more complete picture. You might start with a general screening tool to spot market trends, then switch to a specialized platform like Wallet Finder.ai to track high-performing wallets. Combining these insights with other analytics can help refine your strategy even further.

The filters discussed so far work on market performance metrics such as volume, wallet activity, and price change. Experienced token traders apply a further layer, security and contract analysis, before any performance-based filtering begins. Contract-level security filtering is the pre-screening step that eliminates tokens whose smart contract code embeds programmatic mechanisms for theft, value extraction, or trading restriction, before any time is invested in analyzing market signals or wallet behavior. Applying performance filters to tokens that have not passed contract security screening produces results contaminated by honeypots, exit scam setups, and tokens with hidden administrative controls that can destroy position value regardless of how strong the market signal appears.

The practical importance of security pre-screening has increased substantially as token launch infrastructure has made it trivial to deploy new tokens with sophisticated-appearing contract code that contains hidden malicious functionality. Solidity and other EVM smart contract languages allow contract developers to implement fee-on-transfer mechanisms that can be set to any percentage including 100%, mint functions that allow unlimited token creation after launch, ownership transfer functions that enable replacement of the original deployer with a new malicious owner, and trading restriction functions that can disable all selling by non-whitelisted addresses. Each of these mechanisms can be implemented in ways that are not visible in the token's basic metadata and require actual contract bytecode analysis to detect. Token filtering platforms that do not surface contract security flags as first-order filtering criteria force traders to perform this analysis manually or accept the risk of encountering these mechanisms after entry.

Automated contract security analysis tools evaluate EVM-compatible smart contract bytecode for known patterns associated with malicious or high-risk functionality without requiring the analyst to manually read Solidity code. These tools operate by comparing contract bytecode and function signatures against databases of known malicious patterns, by simulating buy and sell transactions to detect asymmetric execution outcomes, and by analyzing ownership and administrative function structures to identify centralization risks.

Honeypot simulation is the most direct security check, executing a simulated buy and sell sequence against the contract to verify that tokens purchased can be subsequently sold without encountering programmatic restrictions. A contract that allows buying but blocks selling through any mechanism, whether an explicit trading restriction function or a 100% fee-on-transfer applied only to sell transactions, is classified as a honeypot regardless of how legitimate its presentation appears. Honeypot simulation catches the most common and most damaging form of token scam and should be the first filter applied to any token discovered through performance-based screening. Tools like Token Sniffer, Honeypot.is, and several DEX screeners including DEXTools and DEX Screener provide automated honeypot simulation as a free utility that takes seconds to execute.
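The classification step that follows a simulation can be sketched without touching a chain. The code below does not call any of the tools named above and does not reproduce their logic. `SimResult` is a hypothetical record of what a buy-then-sell simulation returned, and the 99% and 10% tax cutoffs are illustrative.

```python
from dataclasses import dataclass

@dataclass
class SimResult:
    buy_ok: bool        # simulated buy executed without reverting
    sell_ok: bool       # simulated sell executed without reverting
    quoted_out: float   # base asset the pool quote implies for the sell
    actual_out: float   # base asset actually received in the simulation

def classify(sim: SimResult, warn_tax: float = 0.10) -> str:
    if not sim.buy_ok or sim.quoted_out <= 0:
        return "untradeable"
    if not sim.sell_ok:
        return "honeypot"          # buying works, selling is blocked outright
    sell_tax = 1 - sim.actual_out / sim.quoted_out
    if sell_tax >= 0.99:
        return "honeypot"          # selling "works" but a near-100% fee takes everything
    if sell_tax > warn_tax:
        return "high_tax"
    return "ok"

blocked = classify(SimResult(True, False, 1.0, 0.0))
full_fee = classify(SimResult(True, True, 1.0, 0.0))
taxed = classify(SimResult(True, True, 1.0, 0.7))
clean = classify(SimResult(True, True, 1.0, 0.97))
print(blocked, full_fee, taxed, clean)
```

Comparing the quoted and received amounts catches the fee-on-transfer variant, where the sell transaction succeeds but returns almost nothing.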

Mint function analysis identifies whether the contract contains functions allowing post-deployment token minting, which represents an unlimited dilution risk for token holders. Contracts with unrestricted mint functions controlled by the deployer address can have their token supply increased arbitrarily at any time, which proportionally dilutes the value of existing holdings. Some mint functions are legitimately used for protocol mechanisms like yield rewards and should be evaluated in context rather than automatically disqualifying, but mint functions controlled by a single externally owned account with no governance restrictions are high-risk regardless of the stated purpose because they rely entirely on the deployer's continued honest behavior with no programmatic constraint.

Ownership renunciation and administrative control mapping determines whether the contract deployer retains privileged administrative capabilities that can alter contract behavior after deployment. Fully renounced contracts, where ownership has been transferred to the null address, cannot be modified by any party and provide the strongest immutability guarantee. Contracts with retained ownership require evaluating what specific capabilities the owner retains: an owner who can only update a treasury fee split from 2% to 3% represents meaningfully less risk than an owner who can pause all trading, modify fee-on-transfer percentages to any value, or add or remove addresses from whitelist restrictions. Privileged function enumeration systematically catalogs all functions accessible only to the owner address so the risk scope is clearly defined rather than assumed.
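One way to make the distinction between renounced, limited, and high-risk ownership concrete is sketched below. The capability labels (`pause_trading`, `set_fee_unbounded`, `edit_whitelist`, `set_fee_split`) are hypothetical tags an analyst would assign after enumerating owner-only functions; they are not identifiers from any real contract or tool.

```python
ZERO_ADDRESS = "0x" + "0" * 40  # the null address used for renunciation

# Hypothetical labels for the owner powers the text singles out as dangerous.
HIGH_RISK_CAPABILITIES = {"pause_trading", "set_fee_unbounded", "edit_whitelist"}


def ownership_risk(owner: str, capabilities: set[str]) -> str:
    """Classify retained owner control from an enumerated capability set."""
    if owner.lower() == ZERO_ADDRESS:
        return "renounced"  # no party can call owner-only functions
    if capabilities & HIGH_RISK_CAPABILITIES:
        return "high"       # owner can block exits or rewrite fees
    return "limited"        # e.g. only a bounded treasury fee split
```

For example, `ownership_risk("0x1234", {"set_fee_split"})` returns `"limited"`, while the same owner holding `pause_trading` returns `"high"`.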

Liquidity lock verification confirms whether the liquidity pool tokens representing the pairing of a new token with a base asset like ETH or USDC are locked in a time-locked contract that prevents removal by the deployer for a specified period. Unlocked liquidity is the most common vector for rug pull exits, where a deployer removes all liquidity from the trading pool at once, collapsing the token price to near zero while retaining the base assets. Verification queries lock contract addresses across major locking platforms including Unicrypt, Team Finance, and Mudra to confirm three things: that liquidity pool tokens are locked, that the lock expiration is sufficiently far in the future relative to the intended holding period, and that the locked amount represents a meaningful proportion of total pool liquidity. A small percentage locked while the majority remains unlocked creates only a false appearance of security.
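Once the lock data has been fetched, the three conditions reduce to a short check. The sketch below assumes the locked and total LP token amounts and the unlock time are already known; the `lock_is_adequate` name and the 80% minimum locked fraction are illustrative assumptions, not thresholds from any locking platform.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def lock_is_adequate(locked_lp: float, total_lp: float, unlock_time: datetime,
                     holding_period: timedelta, now: Optional[datetime] = None,
                     min_locked_fraction: float = 0.8) -> bool:
    """Check that enough LP tokens are locked for long enough.

    min_locked_fraction=0.8 is an assumed cutoff for "a meaningful
    proportion"; tune it to your own risk tolerance.
    """
    if now is None:
        now = datetime.now(timezone.utc)
    if total_lp <= 0 or locked_lp <= 0:
        return False  # nothing locked, or no pool to speak of
    locked_fraction = locked_lp / total_lp
    outlasts_holding = unlock_time >= now + holding_period
    return locked_fraction >= min_locked_fraction and outlasts_holding
```

Passing the intended holding period as a parameter, rather than hard-coding a minimum lock length, ties the check to how long the position is meant to be held.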

Lock duration adequacy requires contextual interpretation rather than a single pass-fail threshold. A 30-day liquidity lock provides minimal rug pull protection for a token that represents itself as a long-term project because the deployer can exit after 30 days with no consequence. A 30-day lock provides more meaningful protection for a meme token expected to complete its price cycle within days. Calibrating the minimum acceptable lock duration to the expected holding period and the project's stated timeline creates a more useful screening criterion than applying a uniform lock duration requirement across all token categories.

Wallet concentration analysis, used as a rug pull resistance indicator, examines how token supply is distributed across holder addresses. Tokens where the top 10 holder addresses collectively control more than 30 to 40 percent of circulating supply face elevated coordinated selling risk, whether the concentration sits in developer wallets, early investor allocations, or undisclosed insider positions. High concentration creates the conditions for cascading price collapse when concentrated holders reduce positions at the same time, either through coordination or simply through individually rational exit decisions during price weakness. Deployer wallet holding percentage is the most critical single concentration metric, because a large retained deployer allocation represents both direct price risk from selling and a signal that the token economics were structured to benefit the deployer disproportionately.
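The concentration metrics above are simple ratios. Here is a minimal sketch that assumes holder balances are already available as a mapping from address to balance; the function names and the 5% deployer threshold are assumptions for illustration, while the 30% top-10 threshold is the lower bound of the range mentioned in the text.

```python
def top_holder_share(balances: dict[str, float], circulating_supply: float,
                     top_n: int = 10) -> float:
    """Fraction of circulating supply held by the top_n largest addresses."""
    if circulating_supply <= 0:
        raise ValueError("circulating_supply must be positive")
    largest = sorted(balances.values(), reverse=True)[:top_n]
    return sum(largest) / circulating_supply


def concentration_flags(balances: dict[str, float], circulating_supply: float,
                        deployer: str, top_threshold: float = 0.30,
                        deployer_threshold: float = 0.05) -> dict[str, bool]:
    """Flag elevated top-10 concentration and a large deployer allocation.

    deployer_threshold=0.05 is an assumed value, not one given in the text.
    """
    deployer_share = balances.get(deployer, 0.0) / circulating_supply
    return {
        "top_holders_concentrated":
            top_holder_share(balances, circulating_supply) > top_threshold,
        "deployer_heavy": deployer_share > deployer_threshold,
    }
```

In practice, addresses such as the liquidity pool, burn address, and lock contracts would usually be removed from `balances` first, since they are not holders who can sell.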

Making these contract security and liquidity risk filters the mandatory first stage of any token screening workflow improves the quality of the performance-based analysis that follows. Only tokens that have cleared the baseline structural integrity requirements reach the signal evaluation stage, so any positive performance signal found there is one that can actually be acted on safely.

Wallet Finder.ai shines by offering real-time wallet tracking, detailed performance analytics, and personalized market alerts based on wallet activity. You can link your own wallets to keep an eye on performance, study trading patterns, and uncover insights that fit your specific goals.

The platform’s intuitive tools let you filter data, export important findings, and stay informed about major market shifts - all within a single, easy-to-use space designed to support smarter decisions in the blockchain world.

When choosing a token filtering tool, it's important to prioritize features that align with your trading goals. Look for tools that let you tailor filters to match your strategy. For example, options to sort tokens by trading volume, market cap, or wallet activity can help you zero in on assets that fit your approach.

Having access to real-time data analysis and the ability to set custom alerts for major market shifts is essential in fast-moving markets. Additionally, tools that integrate smoothly with your current trading platforms can save time and make it easier to carry out precise strategies while keeping a close eye on performance.

Real-time alerts from Wallet Finder.ai keep traders in the loop with instant updates about important market changes and wallet activities. These updates let you act fast when new opportunities pop up, helping you stay ahead in the ever-changing trading world.

The platform tracks major trading trends and wallet interactions, making sure you’re always up to speed on potential profit-making trades. This means you can make well-timed, data-backed choices to fine-tune your trading strategies.

High signal-to-noise filtering requires three structural decisions that most traders do not make explicitly. The first is filter sequencing: applying exclusion filters that eliminate disqualifying tokens before running positive-signal inclusion filters dramatically reduces the candidate set before expensive signal computations execute, which both speeds up the screening process and reduces false positives by ensuring that low-quality tokens are removed before any performance metrics are evaluated. Begin every screening workflow with contract security exclusions and minimum liquidity floors, then apply positive signal criteria to the cleaned candidate set.
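A sketch of this exclusion-first ordering is shown below. Tokens are represented as plain dictionaries, and the field names (`honeypot`, `liquidity_usd`, `volume_24h_usd`) and the dollar thresholds are hypothetical values chosen for the example.

```python
from typing import Callable, Iterable

Filter = Callable[[dict], bool]


def screen(tokens: Iterable[dict], exclusions: list[Filter],
           inclusions: list[Filter]) -> list[dict]:
    """Drop tokens hit by any exclusion rule, then keep those passing all
    inclusion rules. Inclusion rules never run on excluded tokens."""
    survivors = [t for t in tokens if not any(rule(t) for rule in exclusions)]
    return [t for t in survivors if all(rule(t) for rule in inclusions)]


# Hypothetical exclusion rules: return True when the token is disqualified.
def is_honeypot(token: dict) -> bool:
    return token["honeypot"]


def below_liquidity_floor(token: dict) -> bool:
    return token["liquidity_usd"] < 50_000  # assumed floor


# Hypothetical positive-signal rule: return True when the signal is present.
def strong_volume(token: dict) -> bool:
    return token["volume_24h_usd"] >= 250_000  # assumed threshold
```

Calling `screen(tokens, [is_honeypot, below_liquidity_floor], [strong_volume])` evaluates the volume signal only for tokens that survived both exclusions, which is where the speed and false-positive benefits come from.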

The second is dynamic threshold calibration: static absolute thresholds for metrics like daily trading volume become miscalibrated as overall market conditions change, filtering out too many tokens during contractions and too few during expansions. Percentile-based thresholds that set cutoffs relative to the current distribution across all tokens in a size tier automatically adjust to prevailing market conditions, producing more consistent selectivity across different environments.

The third is multi-timeframe confirmation: requiring that a signal criterion be satisfied across two or more time windows simultaneously, rather than in a single window, eliminates tokens experiencing brief anomalous spikes that do not represent sustained conditions. For medium-term strategies, requiring alignment across 1-hour, 12-hour, and 48-hour windows reduces the false positive rate substantially compared to single-window screening.

Combining these three structural choices with a sensitivity analysis pass, which tests how results change when each major threshold is adjusted by plus or minus 20%, confirms that the chosen calibration is robust rather than sitting on a fragile boundary between signal and noise.
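The threshold and confirmation ideas above can be sketched with the standard library alone. The helper names below are hypothetical, the window labels are just dictionary keys, and the percentile uses `statistics.quantiles` with the inclusive method, which is one of several reasonable percentile definitions.

```python
from statistics import quantiles


def percentile_cutoff(values: list[float], pct: int) -> float:
    """Cutoff at the pct-th percentile (1-99) of the current distribution."""
    if not 1 <= pct <= 99:
        raise ValueError("pct must be between 1 and 99")
    # quantiles(..., n=100) returns 99 cut points; index pct-1 is the pct-th.
    return quantiles(values, n=100, method="inclusive")[pct - 1]


def confirmed_across_windows(metric_by_window: dict[str, float],
                             cutoff_by_window: dict[str, float]) -> bool:
    """True only if the metric clears its cutoff in every required window."""
    return all(metric_by_window[w] >= cutoff_by_window[w]
               for w in cutoff_by_window)


def sensitivity_counts(values: list[float], cutoff: float,
                       swing: float = 0.20) -> dict[str, int]:
    """Count how many values pass when the cutoff moves by +/- swing."""
    return {
        "low": sum(v >= cutoff * (1 - swing) for v in values),
        "base": sum(v >= cutoff for v in values),
        "high": sum(v >= cutoff * (1 + swing) for v in values),
    }
```

If the "low" and "high" counts differ sharply from "base", the cutoff sits in a dense part of the distribution and small market shifts will change the candidate set a lot; a stable count suggests the calibration is less fragile.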

Contract security pre-screening should precede all market performance filtering because performance signals are irrelevant for tokens with programmatic mechanisms that prevent safe exit or enable unlimited supply dilution. Four checks cover the highest-risk failure modes. Honeypot simulation executes a simulated buy and sell sequence to verify that tokens can be sold without encountering programmatic selling restrictions or asymmetric fee structures that apply 100% fees to sells while allowing buys. Free utilities including Honeypot.is and DEX screener integrations make this check take only seconds, so it should be the very first filter applied to any newly discovered token.

Mint function analysis identifies whether post-deployment token creation is possible through functions controlled by the deployer or any other address, which represents dilution risk with no upper bound. Contracts with mint functions controlled by a single externally owned account with no governance restriction should be treated as high-risk regardless of stated purpose. Ownership and privileged function enumeration maps what capabilities the contract owner retains, distinguishing contracts where ownership has been fully renounced to the null address from contracts where the owner can pause trading, modify fee percentages, or restrict selling by address. Liquidity lock verification confirms that pool liquidity tokens are locked in a time-locked contract for a duration adequate to the intended holding period, with the locked amount representing a meaningful proportion of total pool liquidity. Tokens failing any of these four checks should be excluded from further analysis however compelling their market performance metrics appear, because the structural risks they carry can destroy position value instantly, whatever the signal quality.