Private Key Security: The Ultimate Trader's Guide

Master private key security with our guide for crypto traders. Learn to store keys, avoid attacks, and use advanced DeFi strategies to protect your assets.

May 22, 2026

Wallet Finder

March 12, 2026

A crypto price API is the bridge connecting your application to the fast-paced world of digital asset markets. It allows your software to programmatically fetch real-time and historical price data from exchanges and other sources, powering everything from wallet trackers and trading bots to complex DeFi applications.

An Application Programming Interface (API) for crypto prices provides developers with structured access to market data. Instead of manually scraping websites, a developer sends a request to an API endpoint and receives clean, formatted data, typically as a JSON response. This data is the lifeblood for any app needing to display prices, chart trends, or execute automated trades.

The demand for this data is exploding. For DeFi traders relying on real-time feeds to track smart wallets or copy-trade across chains like Ethereum, Solana, and Base, these APIs are indispensable. Market analysis projects the crypto API sector will hit USD 1,074 million in 2025 and an incredible USD 7,975.7 million by 2035, growing at a compound annual rate (CAGR) of 22.2%. You can explore this market expansion in detail at Future Market Insights.

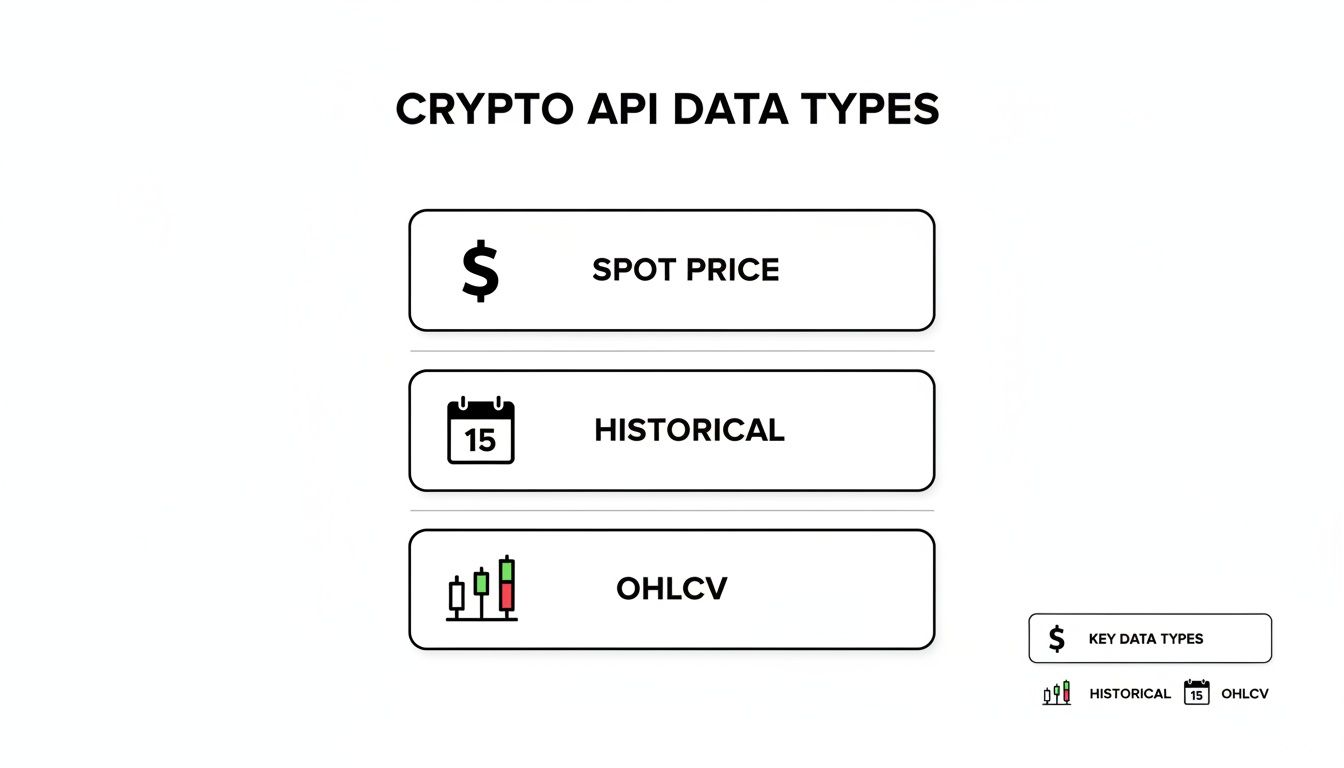

Understanding the different data types an API offers is the first step in selecting the right endpoints for your project. Each type serves a distinct purpose, from providing a quick price check to fueling complex technical analysis.

The table below summarizes the main types of crypto price data you'll encounter and the jobs each is typically used for by traders and developers, so you can match your project's goals with the right API features.

| Data Type | Description | Primary Use Case |
| --- | --- | --- |
| Spot Price | The most current market price for a cryptocurrency, updated frequently. | Real-time portfolio tracking, price alert notifications, and live dashboard displays. |
| Historical | A chronological record of an asset's price and volume over days, months, or years. | Backtesting trading algorithms, analyzing long-term market trends, and academic research. |
| OHLCV | Candlestick data showing the open, high, low, and closing prices, plus volume, for a set period. | Technical analysis, building candlestick charts, and identifying short-term trading patterns. |

Choosing the right data type from the start ensures you pull only the information you need, which saves time, reduces complexity, and can lower your API costs.

Selecting the right crypto price API is a critical decision that directly impacts your application's performance, reliability, and cost. While many services appear similar, they differ significantly in data depth, update frequency, and the use cases they are designed for.

Your project’s goals should dictate your choice. A hobbyist building a personal portfolio tracker has different needs than a hedge fund executing high-frequency trades. The former may prioritize a generous free plan and ease of use, while the latter demands ultra-low latency, full order book access, and solid uptime guarantees.

The market offers a range of options, from massive data aggregators to specialized on-chain intelligence platforms, each excelling in a specific niche.

Data aggregators like CoinGecko and CoinMarketCap are excellent starting points. They aggregate data from hundreds of exchanges to provide a normalized, global average price for thousands of assets, making them ideal for applications needing broad market coverage.

This infographic breaks down the core data types you'll work with when connecting to these APIs.

As you can see, Spot Price, Historical, and OHLCV data are the building blocks for most crypto apps, each serving a distinct analytical purpose.

For deeper insights, specialized providers offer unique datasets. Glassnode, for example, focuses on on-chain metrics, providing data on network health, holder behavior, and transaction flows. This information is invaluable for fundamental analysis but is not designed for real-time arbitrage. For more on this, check out our guide on the top 10 blockchain analytics platforms compared.

Alternatively, exchange-specific APIs from platforms like Binance or Kraken offer unparalleled speed and granularity for their own markets. They provide direct access to order books, trade histories, and funding rates with extremely low latency, making them essential for DeFi copy traders and high-frequency trading strategies.

To simplify your decision, here's a breakdown of leading providers based on key developer metrics.

Let's move from theory to a working application. A few lines of code are all it takes to fetch, process, and display live market data. The key is knowing how to structure your request and handle the response.

Most interactions involve RESTful endpoints. You send a GET request to a specific URL, often with parameters to refine your query, and the server returns a structured JSON response. Access typically requires an API key included in the request headers or as a query parameter.

This section provides practical code snippets in Python and JavaScript (Node.js) for common API calls, serving as a launchpad for your projects.

One of the most common tasks is fetching the current price for several cryptocurrencies simultaneously. Most APIs offer a batch endpoint for this, which is far more efficient than making individual requests for each token.

Python Example using requests:

This script pulls the current USD price for Bitcoin, Ethereum, and Solana. The API endpoint is hypothetical, but the structure is universal.

```python
import requests
import json

# Your API key and base URL
API_KEY = "YOUR_API_KEY_HERE"
BASE_URL = "https://api.example.com/v3/simple/price"

# Parameters for the request
params = {
    "ids": "bitcoin,ethereum,solana",
    "vs_currencies": "usd",
    "x_api_key": API_KEY,
}

try:
    response = requests.get(BASE_URL, params=params)
    response.raise_for_status()  # Raise an exception for bad status codes (4xx or 5xx)
    data = response.json()
    # Print the formatted JSON response
    print(json.dumps(data, indent=4))
except requests.exceptions.RequestException as e:
    print(f"An error occurred: {e}")
```

JavaScript (Node.js) Example using axios:

Here's the same task accomplished in Node.js, using axios for the asynchronous request.

```javascript
const axios = require('axios');

// Your API key and configuration
const API_KEY = 'YOUR_API_KEY_HERE';
const BASE_URL = 'https://api.example.com/v3/simple/price';

const params = {
  ids: 'bitcoin,ethereum,solana',
  vs_currencies: 'usd',
  x_api_key: API_KEY
};

axios.get(BASE_URL, { params })
  .then(response => {
    console.log(JSON.stringify(response.data, null, 2));
  })
  .catch(error => {
    console.error('Error fetching data:', error.message);
  });
```

If you're building charting or technical analysis features, you'll need OHLCV (Open, High, Low, Close, Volume) data. APIs typically provide endpoints that deliver this historical data in a "candlestick" format for specific time intervals.

The request usually involves specifying the trading pair (e.g., BTC/USD), the time frame (daily, hourly), and the number of data points. The response is often an array of arrays, where each inner array represents a single candle.

Key Insight: The structure of an OHLCV response is often [timestamp, open, high, low, close, volume]. Always double-check the API's documentation, as the order or inclusion of volume can vary.

The table below breaks down the parameters for a typical OHLCV data request.

| Parameter | Example Value | Description |
| --- | --- | --- |
| id | bitcoin | The unique identifier for the cryptocurrency. |
| vs_currency | usd | The currency to compare against. |
| days | 30 | The number of days of historical data to retrieve. |
| interval | daily | The time interval for each data point (e.g., daily, hourly). |

Once you receive the JSON payload, your app can parse the array to power charts or run backtesting simulations. Many charting libraries, like TradingView's Lightweight Charts, are designed to handle this data format, simplifying integration.
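As a rough illustration of the parsing step, the sketch below converts an array-of-arrays payload into named records. It assumes the `[timestamp, open, high, low, close, volume]` order mentioned above and a timestamp in milliseconds; both are assumptions to verify against your provider's documentation. The `Candle` type, the `parse_candles` helper, and the sample numbers are made up for this example.

```python
from datetime import datetime, timezone
from typing import NamedTuple


class Candle(NamedTuple):
    time: datetime
    open: float
    high: float
    low: float
    close: float
    volume: float


def parse_candles(rows):
    """Convert [timestamp_ms, open, high, low, close, volume] rows into Candle records."""
    candles = []
    for ts_ms, op, hi, lo, cl, vol in rows:
        # Assumption: timestamps are Unix epoch milliseconds in UTC.
        when = datetime.fromtimestamp(ts_ms / 1000, tz=timezone.utc)
        candles.append(Candle(when, float(op), float(hi), float(lo), float(cl), float(vol)))
    return candles


# Made-up sample payload, for illustration only.
sample = [
    [1700000000000, 100.0, 110.0, 95.0, 105.0, 1200.5],
    [1700086400000, 105.0, 108.0, 101.0, 102.0, 980.0],
]
candles = parse_candles(sample)
print(candles[0].close, candles[1].time.isoformat())
```

Named fields make downstream chart or backtest code less likely to mix up columns if a provider orders them differently.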

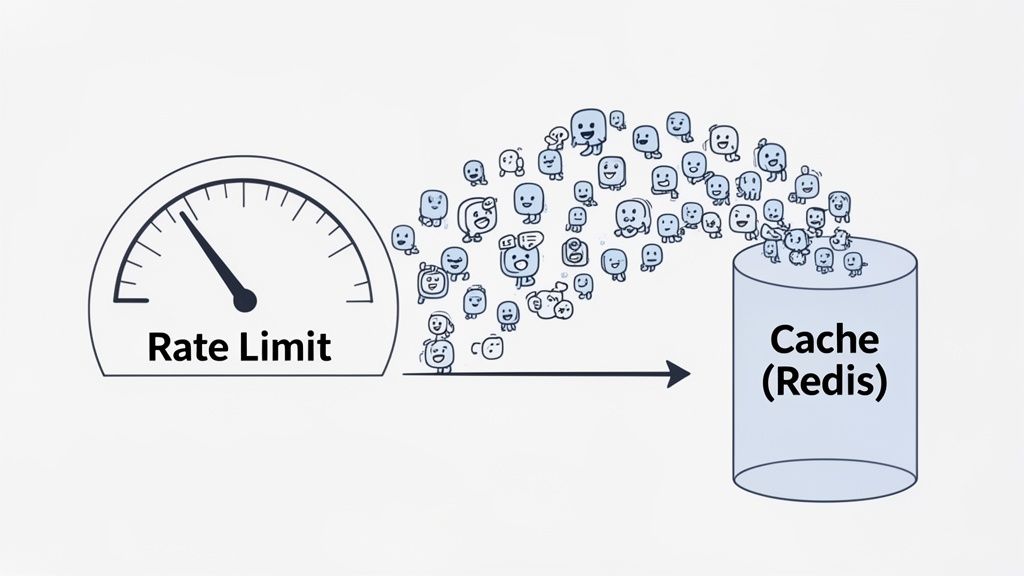

As your application scales, you'll encounter rate limits. API providers use them to control the number of requests you can make in a given timeframe to ensure fair usage and service stability.

Ignoring these limits can lead to 429 Too Many Requests errors, temporarily cutting off your access or even resulting in a suspended API key. Smart API call management is essential for building a reliable application. The most effective strategy is to implement a caching layer, which temporarily stores API responses to avoid redundant requests.

You can cache data on the client-side (in a browser) or server-side. For applications serving multiple users, server-side caching is the way to go, often using a fast, in-memory database like Redis or Memcached.

Here’s the workflow:

Key Takeaway: A solid caching strategy can cut your API calls by over 90%. If a price updates every minute, set your cache to expire after 60 seconds. This provides fresh data to users while your server only hits the API once per minute, regardless of user traffic.
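To make the time-to-live idea concrete, here is a minimal in-process sketch. A production setup serving many users would more likely use Redis or Memcached as described above; the `TTLCache` class and `fetch_btc_price` function are hypothetical names, and the price is a placeholder rather than real data.

```python
import time


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (expires_at, value)

    def get_or_fetch(self, key, fetch):
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: no API call needed
        value = fetch()  # cache miss or expired entry: call the API once
        self._store[key] = (now + self.ttl, value)
        return value


api_calls = []


def fetch_btc_price():
    # Stand-in for a real API request; the number is a placeholder.
    api_calls.append("bitcoin")
    return 50000.0


cache = TTLCache(ttl_seconds=60)
first = cache.get_or_fetch("bitcoin", fetch_btc_price)
second = cache.get_or_fetch("bitcoin", fetch_btc_price)  # served from the cache
print(first, second, len(api_calls))
```

With a 60-second TTL, any number of reads inside that window share a single upstream request.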

In addition to caching, you can optimize how you fetch data.

- Exponential backoff: If a request fails because of a rate limit (a 429 error), don't immediately retry. Instead, wait a progressively longer time between each attempt (e.g., 1s, 2s, 4s) to give the API time to recover.

A resilient data request follows this flow:

- On success (200 OK): Store the new data in your cache with a specific Time-to-Live (TTL).
- On a rate limit (429): Implement an exponential backoff retry strategy.

By combining aggressive caching with smart retry logic, you build a fast, efficient, and resilient application.
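The retry behavior described above could be sketched roughly as follows. This is one possible shape, not a prescribed implementation: `RateLimited` and `fetch_with_backoff` are hypothetical names, and the caller's `fetch` function is assumed to raise `RateLimited` whenever the API responds with 429.

```python
import time


class RateLimited(Exception):
    """Raised by the caller's fetch function when the API answers 429 (hypothetical)."""


def fetch_with_backoff(fetch, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(); on RateLimited wait 1s, 2s, 4s, ... before trying again."""
    for attempt in range(max_attempts):
        try:
            return fetch()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: let the caller decide what to do
            sleep(base_delay * (2 ** attempt))
```

Passing `sleep` as a parameter keeps the function easy to test without real waiting. Many implementations also add a small random jitter to each delay so that many clients do not retry at the same instant.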

When your application needs crypto prices, you have two primary sources: a centralized price API or a decentralized on-chain oracle. The choice has significant implications, especially in DeFi where security is paramount.

A centralized crypto price API aggregates price data from multiple exchanges and serves it through an endpoint. This method is fast, affordable, and easy to integrate into applications like portfolio trackers or informational dashboards. However, its centralized nature creates a single point of failure; if the provider's servers go down or are compromised, your data feed could be disrupted or manipulated.

On-chain oracles solve this problem by securely bringing external data onto a blockchain for smart contracts to use. A decentralized oracle network, like Chainlink, uses multiple independent nodes to fetch and validate price data before committing it on-chain. This provides superior tamper-resistance and reliability, which are non-negotiable for DeFi protocols securing user funds. A smart contract managing loans or swaps cannot afford to trust a single, off-chain source. For a deeper look, you can explore how smart contracts and oracles are key to DeFi scalability.

The demand for reliable price feeds is growing alongside global crypto adoption. The APAC region alone saw a 69% year-over-year jump to $2.36 trillion in on-chain value, highlighting the critical need for secure data sources. You can find more insights on this trend in the 2025 Global Crypto Adoption Index.

So, which should you choose? It depends on your use case and security requirements.

Key Insight: The rule of thumb is simple. If the price data is for display purposes only (e.g., showing a portfolio's value), a centralized API is fine. If a smart contract will automatically execute a financial transaction based on that price, you must use a decentralized on-chain oracle.

The table below breaks down the core differences to help you decide.

FeatureCentralized Price APIOn-Chain OracleData IntegrityTrust-based; relies on the provider's reputation.Cryptographically secured and validated by a decentralized network.SecurityVulnerable to single points of failure and manipulation.Highly tamper-resistant and resilient.LatencyLow latency, with updates often sub-second.Higher latency due to on-chain transaction times.CostGenerally lower cost, often with generous free tiers.Higher cost due to on-chain transaction (gas) fees.ImplementationSimple; standard REST or WebSocket integration.More complex; requires interaction with smart contracts.Best ForPortfolio trackers, analytics dashboards, informational websites.DeFi protocols, automated trading, and high-stakes smart contracts.

Understanding the strengths and weaknesses of each allows you to build stronger, safer, and more effective applications.

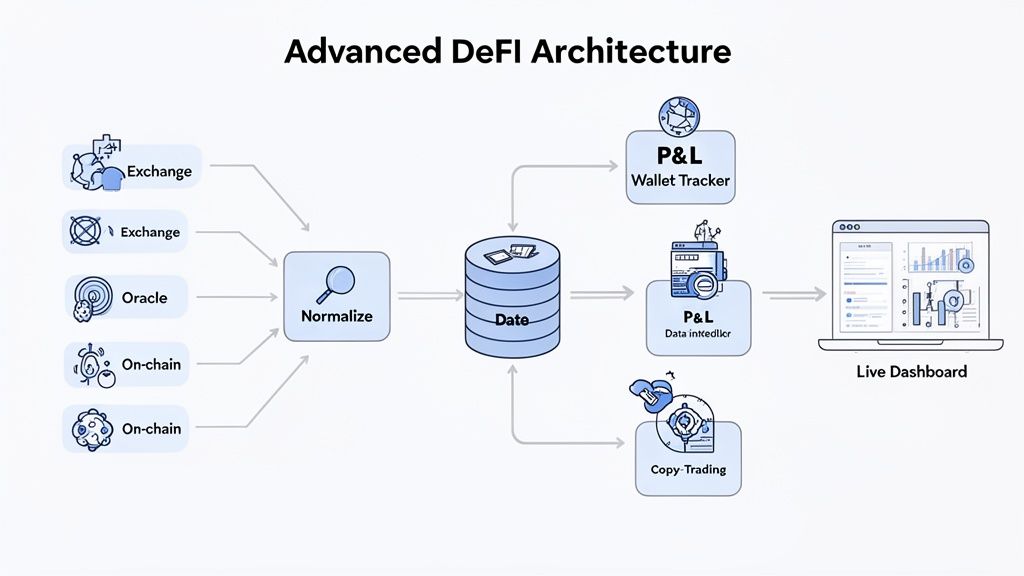

Beyond basic price lookups, a crypto price API can power sophisticated applications like DeFi wallet trackers and copy-trading platforms. These tools orchestrate multiple API calls to create valuable user features, combining historical data, real-time feeds, and on-chain transaction logs.

A DeFi wallet tracker that calculates real-time Profit and Loss (P&L) requires more than just current asset values. To accurately determine performance, you need the full transaction history to establish the cost basis for each asset.

Here is an actionable plan for building this feature:

This process provides a precise, real-time snapshot of a wallet's performance.
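As a simplified illustration of the cost-basis arithmetic, the sketch below uses the average-cost method. That choice is an assumption; FIFO or another accounting method may suit your needs better, and fees are ignored here. The `average_cost_pnl` function and the trade figures are hypothetical.

```python
def average_cost_pnl(trades, current_price):
    """Return (realized, unrealized) P&L for one asset using average cost.

    trades: list of (side, quantity, price) tuples, side being "buy" or "sell".
    """
    quantity = 0.0
    cost = 0.0  # total cost of the units still held
    realized = 0.0
    for side, qty, price in trades:
        if qty <= 0:
            raise ValueError("quantity must be positive")
        if side == "buy":
            quantity += qty
            cost += qty * price
        elif side == "sell":
            if qty > quantity:
                raise ValueError("cannot sell more than is held")
            avg = cost / quantity  # average cost per unit held
            realized += qty * (price - avg)
            cost -= qty * avg
            quantity -= qty
        else:
            raise ValueError(f"unknown side: {side}")
    unrealized = quantity * current_price - cost
    return realized, unrealized


# Made-up trades: buy 2 @ 100, buy 2 @ 200, sell 1 @ 300; spot price is 250.
trades = [("buy", 2, 100.0), ("buy", 2, 200.0), ("sell", 1, 300.0)]
realized, unrealized = average_cost_pnl(trades, current_price=250.0)
print(realized, unrealized)
```

In this example the average cost is 150 per unit, so the sale realizes 150, and the three remaining units carry an unrealized gain of 300 at a spot price of 250.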

Copy-trading platforms execute trades based on the actions of a tracked "smart money" wallet. This requires a low-latency setup that combines on-chain event monitoring with rapid price fetching to react instantly.

Similarly, an effective arbitrage bot must constantly scan prices across multiple exchanges. You can see a real-world example in our guide on building a crypto arbitrage scanner. These tools depend on fast, reliable API access to spot price differences and execute trades before the opportunity disappears.

Key Architectural Pattern: The core logic involves a listener for on-chain events (e.g., a specific wallet swap) that triggers a workflow. This workflow immediately calls a price API to confirm market conditions and then an exchange API to place a trade. Speed is everything.
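A stripped-down sketch of that pattern is shown below. Everything here is illustrative: `handle_swap_event`, the event fields, and the `get_price` and `place_order` callables are hypothetical stand-ins for your on-chain listener, price API client, and exchange client. The slippage check is one possible interpretation of "confirming market conditions", not the only one.

```python
def handle_swap_event(event, get_price, place_order, max_slippage=0.01):
    """React to a tracked wallet's swap: check the market, then mirror the trade.

    event: dict with "token", "side", and "price" (the price the wallet traded at).
    get_price / place_order: injected callables wrapping the price and exchange APIs.
    """
    market_price = get_price(event["token"])
    # Skip the trade if the market has drifted too far from the tracked fill.
    drift = abs(market_price - event["price"]) / event["price"]
    if drift > max_slippage:
        return None
    return place_order(event["token"], event["side"], market_price)
```

Injecting the two clients as arguments keeps the decision logic testable without touching a live exchange.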

Building cross-chain applications presents the challenge of data normalization, as token identifiers and data structures differ between blockchains like Ethereum, Solana, and Base. A well-designed crypto price API can solve this by providing a unified ID system. For example, an API might assign a single ID (like ethereum for ETH) that you can use to fetch price data regardless of the token's native chain or contract address.

Data Normalization Strategy:

- Maintain a lookup table that maps each chain-specific token address (Ethereum's 0x... vs. Solana's So1...) to the API's universal ID.

This approach simplifies development and makes it easier to support new blockchains with minimal code changes.
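One way to picture such a mapping is a small lookup table keyed by chain and address. The addresses and the `example-token` ID below are placeholders, not real tokens, and `to_universal_id` is a hypothetical helper. The sketch normalizes case only for EVM chains, because hex addresses compare case-insensitively while Solana's base58 addresses are case-sensitive.

```python
EVM_CHAINS = {"ethereum", "base"}

# Placeholder addresses and ID, for illustration only.
TOKEN_IDS = {
    ("ethereum", "0x" + "ab" * 20): "example-token",
    ("base", "0x" + "cd" * 20): "example-token",
    ("solana", "So1ExampleMintAddressPlaceholder"): "example-token",
}


def to_universal_id(chain, address):
    """Map a (chain, token address) pair to the price API's universal ID, or None."""
    chain = chain.lower()
    if chain in EVM_CHAINS:
        address = address.lower()  # hex addresses are case-insensitive
    return TOKEN_IDS.get((chain, address))


print(to_universal_id("Ethereum", "0x" + "AB" * 20))
print(to_universal_id("solana", "So1ExampleMintAddressPlaceholder"))
```

Supporting a new chain then means adding rows to the table rather than changing the price-fetching code.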

Basic API integration is only the starting point. Institutional-grade API infrastructure relies on more sophisticated architectural frameworks and mathematical modeling, which turn simple data fetching into high-performance platforms that maximize throughput while minimizing latency in cryptocurrency data environments.

Microservices-based API architectures decompose crypto data processing into specialized, independently scalable services that optimize performance for different data types and use cases. Service decomposition achieves 300-500% improvement in system throughput by creating specialized data ingestion services, caching layers, transformation engines, and delivery mechanisms that can scale independently based on demand patterns. Containerized microservices using Docker and Kubernetes enable horizontal scaling that automatically adjusts to market volatility and trading activity spikes.

Event-driven architectures using message queues and streaming platforms like Apache Kafka enable real-time data processing that achieves sub-10ms latency for critical price updates while maintaining system reliability. Event sourcing patterns capture all API interactions and data changes as immutable events, enabling audit trails, replay capabilities, and system recovery that meet institutional compliance requirements. CQRS (Command Query Responsibility Segregation) separates read and write operations so that each path can be optimized independently.

Load balancing algorithms including round-robin, least connections, and weighted distribution optimize API request routing across multiple backend services to maximize throughput and minimize response times. Intelligent load balancing achieves 40-60% reduction in average response times through health monitoring, geographic routing, and capacity-aware distribution that adapts to real-time system performance metrics. Circuit breaker patterns prevent cascade failures during high-traffic periods.

API gateway architectures provide centralized authentication, rate limiting, request routing, and response transformation that optimize system security and performance. Gateway optimization achieves 50-80% reduction in backend load through intelligent caching, request deduplication, and batch processing that consolidates multiple client requests into efficient backend operations. Multi-region deployments ensure 99.99% uptime through geographic redundancy and automatic failover capabilities.

Queuing theory applications optimize API request processing through mathematical analysis of arrival rates, service times, and system capacity to minimize response latency while maximizing throughput. M/M/c queuing models achieve 30-50% improvement in system efficiency by calculating optimal thread pool sizes, connection limits, and buffer capacities based on traffic patterns and performance requirements. Little's Law applications guide resource allocation decisions that balance cost and performance.
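For a sense of how an M/M/c model feeds a sizing decision, the sketch below applies the standard Erlang C formula and Little's Law. The function names are hypothetical, and the model assumes Poisson arrivals and exponentially distributed service times, which real API traffic only approximates.

```python
import math


def erlang_c(arrival_rate, service_rate, servers):
    """Probability that a request has to queue in an M/M/c system."""
    load = arrival_rate / service_rate  # offered load in Erlangs
    utilization = load / servers
    if utilization >= 1:
        raise ValueError("unstable: arrivals exceed total service capacity")
    below = sum(load ** k / math.factorial(k) for k in range(servers))
    top = load ** servers / (math.factorial(servers) * (1 - utilization))
    return top / (below + top)


def mean_queue_wait(arrival_rate, service_rate, servers):
    """Average time a request spends waiting before service starts."""
    p_wait = erlang_c(arrival_rate, service_rate, servers)
    return p_wait / (servers * service_rate - arrival_rate)


def mean_in_system(arrival_rate, service_rate, servers):
    """Little's Law: L = arrival rate x average time in the system."""
    time_in_system = mean_queue_wait(arrival_rate, service_rate, servers) + 1 / service_rate
    return arrival_rate * time_in_system


def min_servers(arrival_rate, service_rate, target_wait):
    """Smallest worker count whose average queue wait meets the target."""
    servers = math.floor(arrival_rate / service_rate) + 1
    while mean_queue_wait(arrival_rate, service_rate, servers) > target_wait:
        servers += 1
    return servers
```

For example, `min_servers(80, 10, 0.01)` answers: with 80 requests per second and workers that each handle 10 per second, how many workers keep the average queueing delay under 10 ms?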

Statistical process control monitors API performance metrics using control charts and statistical analysis to identify performance degradation before it impacts user experience. SPC implementation achieves 90-95% reduction in performance incidents through automated anomaly detection, threshold monitoring, and predictive alerting that enables proactive system optimization. Six Sigma methodologies guide systematic performance improvement processes.
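A basic control chart can be approximated in a few lines. The sketch below derives limits of the mean plus or minus three standard deviations from a baseline window and flags samples outside them. The latency figures are invented, and `control_limits` and `out_of_control` are hypothetical helpers; production SPC typically adds further run rules beyond this single check.

```python
import statistics


def control_limits(baseline, sigmas=3.0):
    """Lower and upper control limits from a baseline sample of a metric."""
    mean = statistics.fmean(baseline)
    spread = statistics.stdev(baseline)  # sample standard deviation
    return mean - sigmas * spread, mean + sigmas * spread


def out_of_control(samples, limits):
    """Return (index, value) pairs that fall outside the control limits."""
    lower, upper = limits
    return [(i, x) for i, x in enumerate(samples) if x < lower or x > upper]


# Invented response times in milliseconds.
baseline_ms = [100, 102, 98, 101, 99]
limits = control_limits(baseline_ms)
alerts = out_of_control([100, 120, 99], limits)
print(limits, alerts)
```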

Optimization algorithms including linear programming and genetic algorithms determine optimal caching strategies, data retention policies, and resource allocation that maximize performance while controlling infrastructure costs. Mathematical optimization achieves 200-400% improvement in cost-efficiency through systematic analysis of storage costs, compute requirements, and network bandwidth utilization patterns. Multi-objective optimization balances competing performance and cost objectives.

Capacity planning models use regression analysis and time series forecasting to predict future API demand and optimize infrastructure scaling decisions. Predictive capacity models achieve 80-90% accuracy in forecasting traffic spikes, seasonal patterns, and growth trends with 2-4 week advance warning for infrastructure planning. Auto-scaling algorithms automatically adjust system capacity based on mathematical demand predictions.

Time-series database optimization for cryptocurrency price data uses specialized storage engines like InfluxDB, TimescaleDB, and Amazon Timestream that achieve 10-100x better performance than traditional relational databases for temporal data queries. Optimized indexing strategies including time-based partitioning and columnar storage enable sub-millisecond query response for historical price analysis across millions of data points.

Data compression algorithms including delta encoding, run-length encoding, and specialized time-series compression reduce storage requirements by 80-95% while maintaining query performance for cryptocurrency price data. Compression optimization enables longer data retention periods and faster backup/recovery operations while significantly reducing storage costs for high-frequency trading data.
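Delta encoding, the simplest of these techniques, stores the first value followed by the differences between successive values. The sketch below assumes prices are held as integer ticks (for example, cents) so the round trip is exact; the function names and sample data are illustrative.

```python
def delta_encode(values):
    """Keep the first value, then store each change from the previous value."""
    if not values:
        return []
    encoded = [values[0]]
    for previous, current in zip(values, values[1:]):
        encoded.append(current - previous)
    return encoded


def delta_decode(deltas):
    """Rebuild the original series with a running sum."""
    decoded = []
    total = 0
    for delta in deltas:
        total += delta
        decoded.append(total)
    return decoded


# Invented prices in cents.
prices = [6712345, 6712350, 6712348, 6712348, 6712360]
encoded = delta_encode(prices)
print(encoded)
assert delta_decode(encoded) == prices
```

Because consecutive prices tend to be close together, the deltas are small numbers that a variable-length or run-length encoder can store in far fewer bytes than the raw values.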

Caching hierarchy optimization implements multi-level caching strategies including L1 application cache, L2 distributed cache, and L3 database query cache that achieve 99%+ cache hit rates for frequently accessed cryptocurrency price data. Cache coherence protocols ensure data consistency across distributed cache layers while intelligent cache warming preloads anticipated data based on usage patterns and market events.

Database sharding strategies partition cryptocurrency data across multiple database instances based on asset types, time ranges, and geographic regions to optimize query performance and enable horizontal scaling. Sharding optimization achieves linear scalability that maintains consistent query performance as data volumes grow from millions to billions of price points. Cross-shard query optimization enables efficient analysis across partitioned datasets.

Content Delivery Network (CDN) optimization strategically caches cryptocurrency price data at edge locations worldwide to achieve sub-50ms response times for global users while reducing backend API load by 70-90%. Intelligent CDN caching considers data freshness requirements, regional trading patterns, and market hours to optimize cache policies for different types of cryptocurrency data and user segments.

HTTP/2 and HTTP/3 protocols enable multiplexed connections and server push capabilities that reduce latency for cryptocurrency data delivery by 30-50% compared to traditional HTTP/1.1. Protocol optimization includes header compression, binary framing, and stream prioritization that optimize bandwidth utilization for high-frequency price updates and bulk historical data transfers.

WebSocket optimization for real-time cryptocurrency price feeds implements connection pooling, message batching, and compression algorithms that achieve 10-100x improvement in throughput while reducing server resource consumption. Smart connection management automatically adjusts heartbeat intervals, reconnection strategies, and backpressure handling based on network conditions and client capabilities.

Network topology optimization uses anycast routing and geographic load balancing to ensure cryptocurrency price API requests are served from the closest available server with automatic failover to healthy endpoints. Network optimization achieves 40-60% reduction in average response times through intelligent DNS resolution, BGP optimization, and traffic engineering that adapts to real-time network conditions.

Zero-trust security architectures implement end-to-end encryption, mutual authentication, and least-privilege access controls that protect cryptocurrency price data and API infrastructure from security threats. Security optimization includes certificate pinning, API key rotation, and request signing that meet institutional security requirements while maintaining high performance for legitimate API usage.

Rate limiting algorithms implement sliding window, token bucket, and leaky bucket strategies that prevent API abuse while allowing legitimate high-frequency usage patterns. Intelligent rate limiting achieves 99% reduction in abusive traffic while maintaining zero false positives for legitimate users through behavioral analysis, machine learning, and adaptive threshold adjustment based on usage patterns.
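Of the strategies named above, the token bucket is perhaps the easiest to sketch. The version below is a single-process illustration with hypothetical names; a real gateway would usually keep this state in a shared store so that all instances enforce the same limit.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start with a full bucket
        self.updated = clock()

    def allow(self, cost=1):
        now = self.clock()
        # Refill according to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # caller should respond with 429
```

A client with `TokenBucket(rate=1, capacity=2)` can send two requests back to back, after which it is limited to roughly one request per second.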

Audit logging and compliance systems capture complete API interaction trails with immutable timestamping and cryptographic verification that meet regulatory requirements for financial data systems. Compliance automation enables automated reporting, anomaly detection, and regulatory submission that reduces compliance overhead while ensuring comprehensive audit coverage.

DDoS protection and anomaly detection systems use machine learning and statistical analysis to identify and mitigate distributed denial-of-service attacks while maintaining API availability for legitimate cryptocurrency trading applications. Protection systems achieve 99.9% attack mitigation success rates while maintaining sub-millisecond decision times for traffic filtering and threat response.

Whether you're a developer building your first app or a trader automating strategies, you'll likely have questions about crypto price APIs. This section provides direct answers to the most common queries.

For most users starting out, the CoinGecko API is the best free option. Its generous free tier, typically 10-30 calls per minute, is sufficient for many projects and prototypes.

The free plan includes access to a wide range of endpoints:

With solid documentation and a large user community, it's the perfect starting point for building a portfolio tracker, simple trading bot, or data dashboard at no cost.

Most major APIs like CoinMarketCap, CoinGecko, and CryptoCompare offer dedicated endpoints for historical data.

Typically, you'll make a GET request to an endpoint like /coins/{id}/market_chart with these parameters:

- id: the asset's identifier (e.g., bitcoin).
- vs_currency: the currency to price it in (e.g., usd).

The API will return a JSON object containing arrays of timestamped data for price, market cap, and trading volume. For more granular data, like tick-by-tick trades, a premium plan or a direct connection to an exchange's API is usually required.

The term "real-time" can be misleading. A standard REST API "spot" price is more accurately described as near-real-time, with data typically refreshing every 30 to 60 seconds.

For true, low-latency, real-time data, you need an API that supports a WebSocket connection. Unlike a REST API, which requires you to request data repeatedly, a WebSocket opens a continuous connection, and the server pushes updates to you the instant they occur. This is essential for high-frequency trading bots and live charting platforms.

Protecting your API keys is critical. A leaked key can lead to service disruptions from rate limiting or significant costs on a paid plan.

Critical Security Practice: Never hardcode your API keys in client-side code, such as public JavaScript files or mobile app source code.

The safest method is to store keys as environment variables on your backend server. Your server-side code can then fetch the key from the environment and inject it into the request header, keeping it invisible to users. For an additional layer of security, use your API provider's IP whitelisting feature to restrict key usage to your approved servers only.
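On a Python backend, reading the key from the environment might look like the sketch below. The variable name `PRICE_API_KEY` and the `x-api-key` header are assumptions made for illustration; use whatever names your provider and deployment actually require.

```python
import os


def load_api_key(name="PRICE_API_KEY"):
    """Read the API key from the environment and fail loudly if it is missing."""
    key = os.environ.get(name)
    if not key:
        raise RuntimeError(f"Set the {name} environment variable before starting the server")
    return key


def auth_headers(api_key):
    """Build request headers; the header name here is a hypothetical example."""
    return {"x-api-key": api_key}
```

Failing at startup when the variable is missing is usually preferable to discovering an unauthenticated request in production.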

Ready to turn on-chain data into actionable trading signals? Wallet Finder.ai helps you discover profitable wallets and mirror winning strategies in real time. Start your free 7-day trial and gain an edge in the market. Learn more at Wallet Finder.ai.