Chinese Crypto Coins 2026: Insights & Top Projects

Discover top Chinese crypto coins like NEO, VeChain, & Conflux. Learn about regulations, key projects, and tracking smart money with Wallet Finder.ai.

May 25, 2026

Wallet Finder

April 10, 2026

You’re probably in one of two states right now. Either you have a wallet tracker half-working with a mix of RPC calls, token lists, and price endpoints from different providers, or you already know the hard part is not fetching data once, it’s keeping it correct across chains, contracts, pools, and refresh cycles.

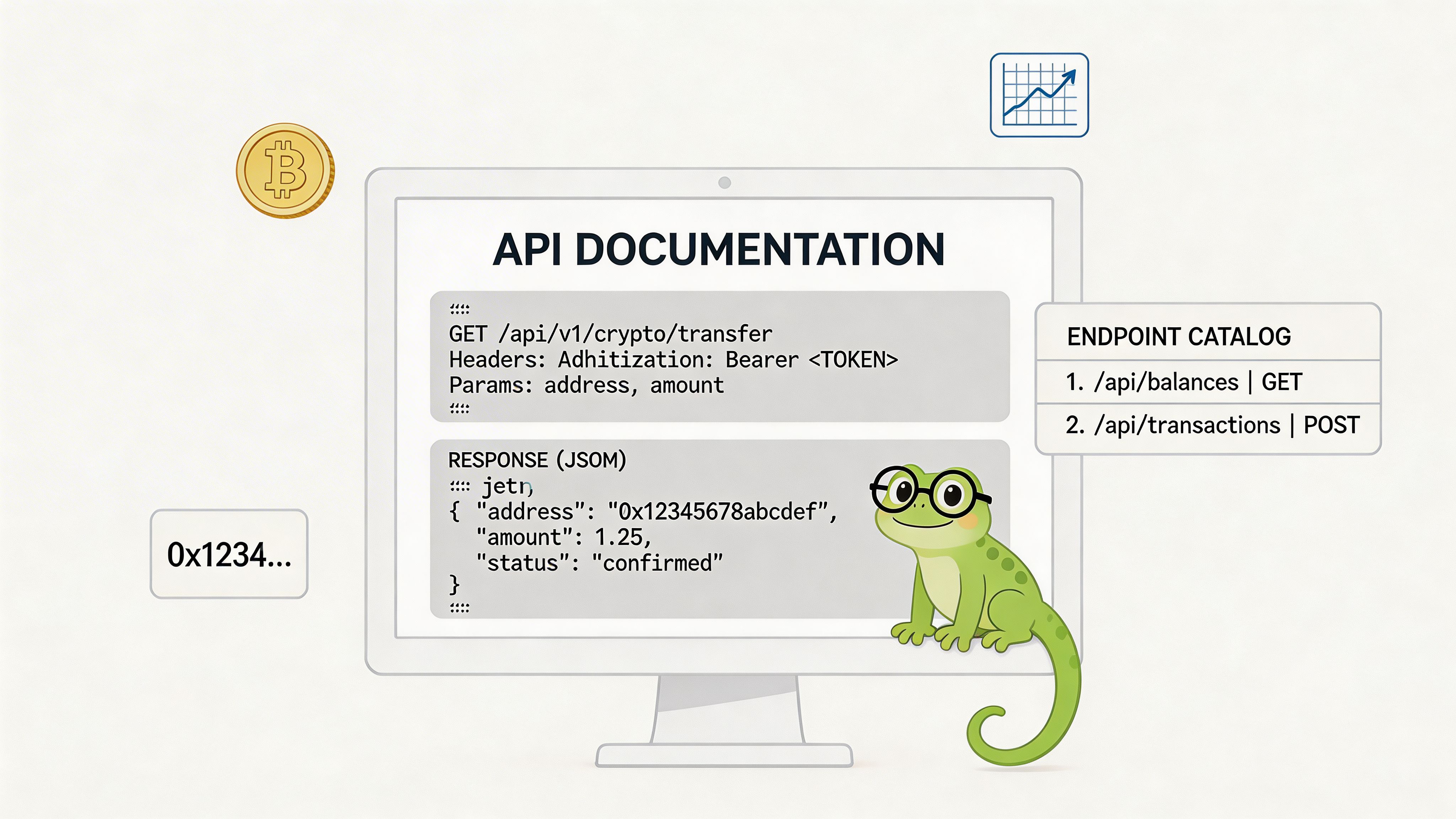

That is where coingecko api documentation matters. Not as marketing material, but as the operating manual for a system you will lean on every minute your app is live.

Most junior builds fail in the same places. Token identity drifts across chains. Contract addresses do not map cleanly to a canonical asset. Price refreshes burn through request budgets. On-chain pool data is fetched too late or too often. A dashboard looks fine in development and falls apart when you add active users, historical charts, or PnL views.

If you are building a DeFi wallet tracker, treat CoinGecko as a data layer, not just a price API. The useful part is how its market, metadata, historical, and on-chain endpoints fit together.

A user connects a wallet that holds bridged USDC on Arbitrum, a memecoin on Base, an LP position priced off a thin pool, and a governance token that migrated contracts six months ago. If your tracker cannot resolve those assets to stable identifiers and attach the right price source fast, every downstream metric is wrong. Balance views drift, PnL misfires, and wallet-level analytics stop being useful.

For high-frequency DeFi tracking, CoinGecko works best as a coordination layer between market data, token metadata, and on-chain DEX context. The official docs cover the endpoints. The practical work is deciding which endpoint owns each part of your pipeline, how often to refresh it, and what to cache so you do not waste request budget. If you are designing around request pressure already, this guide on handling API rate limits in production wallet trackers is worth reading alongside your schema design.

CoinGecko covers the mix most wallet trackers need in one integration surface: centralized market data, token metadata, historical pricing, and GeckoTerminal-backed on-chain DEX data. That matters less for a basic watchlist and more for a wallet analytics system that has to map contracts across chains, backfill charts, and reconcile spot prices against pool activity without stitching together four vendors.

The first version usually breaks on data modeling, not UI.

Teams building wallet trackers for the first time often key everything off token symbols, then patch exceptions later. That fails as soon as the same symbol exists on multiple chains, a token migrates contracts, or a wrapped asset needs different handling from its native counterpart. Treat symbols as display fields only.

A cleaner model uses a canonical asset record with a few fields that stay stable under load:

That structure pays off when you calculate wallet PnL every minute. You can price majors from simple market endpoints, fall back to contract resolution when users paste addresses, and route illiquid assets to on-chain pool data instead of pretending every token has a clean centralized market price.

The coingecko api documentation is the operating manual for this system. The value is not the endpoint list by itself. The value is knowing which endpoint should be authoritative for each job.

Use lightweight price endpoints for live portfolio views. Use metadata and contract lookup endpoints during indexing and token normalization. Use historical endpoints for chart rendering and backfills. Use on-chain DEX endpoints when wallet analytics depends on pool liquidity, pair activity, or token discovery before centralized listings appear.

Mix those roles carelessly and you create avoidable problems. A chart job starts competing with live refreshes. Contract mapping gets recomputed on every request. Thinly traded assets inherit stale prices from the wrong source. Good DeFi analytics starts with a boring rule: assign each endpoint a clear responsibility, cache by that responsibility, and keep your asset identity model stricter than your UI.

Authentication is simple. Capacity planning is not.

CoinGecko splits access into Demo and paid tiers, and this choice affects your architecture on day one. The Demo plan includes 10K call credits per month with attribution required, while paid tiers include Analyst at $129 per month with 500K call credits and up to 500 requests per minute, Lite at $499 per month, Pro at $999 per month, and Enterprise as custom, as listed on the CoinGecko API pricing page.

For high-frequency wallet tracking, the difference between 30 per minute on Demo and 500 per minute on paid plans changes what you can ship without aggressive throttling, as reflected in the Dart wrapper documentation for CoinGecko API plans and usage patterns.

Demo and Pro use different headers. Get this wrong and you burn time debugging the wrong thing.

Python with a Pro key

import requestsurl = "https://pro-api.coingecko.com/api/v3/simple/price"params = {"ids": "bitcoin,ethereum","vs_currencies": "usd","include_24hr_change": "true","include_market_cap": "true"}headers = {"x-cg-pro-api-key": "YOUR_PRO_KEY"}resp = requests.get(url, params=params, headers=headers, timeout=10)resp.raise_for_status()print(resp.json())JavaScript with fetch

const url = new URL("https://pro-api.coingecko.com/api/v3/simple/price");url.searchParams.set("ids", "bitcoin,ethereum");url.searchParams.set("vs_currencies", "usd");url.searchParams.set("include_24hr_change", "true");url.searchParams.set("include_market_cap", "true");const res = await fetch(url, {headers: {"x-cg-pro-api-key": process.env.COINGECKO_PRO_KEY}});if (!res.ok) {throw new Error(`CoinGecko error ${res.status}`);}const data = await res.json();console.log(data);Do not choose a plan based on “number of users.” Choose it based on query pattern.

| Plan | Best fit | Practical constraint |

|---|---|---|

| Demo | Local testing, endpoint exploration, simple prototypes | Throttles fast under polling-heavy workloads |

| Analyst | Early production analytics, charts, basic wallet tracking | You still need disciplined caching |

| Lite or Pro | Multi-view app with history, watchlists, and frequent refreshes | Better headroom, but poor batching still wastes credits |

| Enterprise | Broad on-chain analytics and custom workloads | Requires a serious usage model |

Three habits save pain early:

/key endpoint and surface usage in internal dashboards.If you are trying to model your own request envelope, this write-up on API rate limit planning for DeFi apps is a useful operational reference.

Key takeaway: Paid access is not about prestige. It is what lets you replace fragile polling logic with stable refresh schedules.

A wallet tracker does not need every endpoint. It needs a short list used with discipline.

The fastest way to get lost in coingecko api documentation is to jump between endpoints without deciding each one’s role. I prefer a compact model: one endpoint for quick prices, one for asset identity, one for contract resolution, one for richer market views, one for historical data, and one family for on-chain pool activity.

| Endpoint | Primary Use Case | Key Parameters |

|---|---|---|

/simple/price | Fast latest prices for known coin IDs | ids, vs_currencies, optional include flags |

/coins/list | Build your local asset index and map names to IDs | optional include platform-related fields depending on implementation |

/coins/{id} | Fetch canonical coin metadata and platform mappings | id path parameter |

/coins/{id}/contract/{contract_address} | Resolve a token contract to a known asset record | id, contract_address |

/coins/markets | Market data lists for ranking, filtering, and dashboards | vs_currency, ids, order, per_page, page |

/coins/{id}/market_chart | Historical price, market cap, and volume series | vs_currency, days |

/coins/{id}/history | Date-specific snapshots for backfills and audits | date |

/onchain/simple/networks/{network}/token_price/{addresses} | On-chain token pricing by network and token address | network, token addresses |

/onchain/networks/{network}/pools/{address} | Pool details for DEX tracking and liquidity-aware analysis | network, pool address |

/simple/price is for render speed. Use it when you already know the CoinGecko IDs and just need the latest values for cards, watchlists, or summary rows.

/coins/list is an indexing endpoint. Fetch it on a schedule, store it locally, and do lookups against your own database instead of calling it on every search.

/coins/{id} is your source of truth for metadata-heavy views. It is slower and heavier than the simple endpoints, which is exactly why you should not put it inside a hot refresh loop.

/coins/{id}/contract/{contract_address} is useful when a user or ingestion pipeline starts from an address. That happens constantly in DeFi.

A clean backend usually divides these jobs like this:

/simple/price/onchain/simple/.../token_price/.../coins/markets/coins/{id}/market_chart/coins/list/coins/{id}/coins/{id}/historyThis split keeps your UI fast and your indexing stable.

Use one thin wrapper with endpoint-aware caching instead of writing ad hoc calls everywhere.

import requestsfrom time import timeclass CoinGeckoClient:def __init__(self, api_key):self.base = "https://pro-api.coingecko.com/api/v3"self.headers = {"x-cg-pro-api-key": api_key}def get(self, path, params=None):url = f"{self.base}{path}"r = requests.get(url, headers=self.headers, params=params, timeout=10)r.raise_for_status()return r.json()client = CoinGeckoClient("YOUR_PRO_KEY")prices = client.get("/simple/price", {"ids": "bitcoin,ethereum","vs_currencies": "usd"})markets = client.get("/coins/markets", {"vs_currency": "usd","ids": "bitcoin,ethereum"})print(prices)print(markets)Tip: Do not let frontend code decide endpoint choice. Put endpoint selection in your backend so caching, retries, and fallback logic stay consistent.

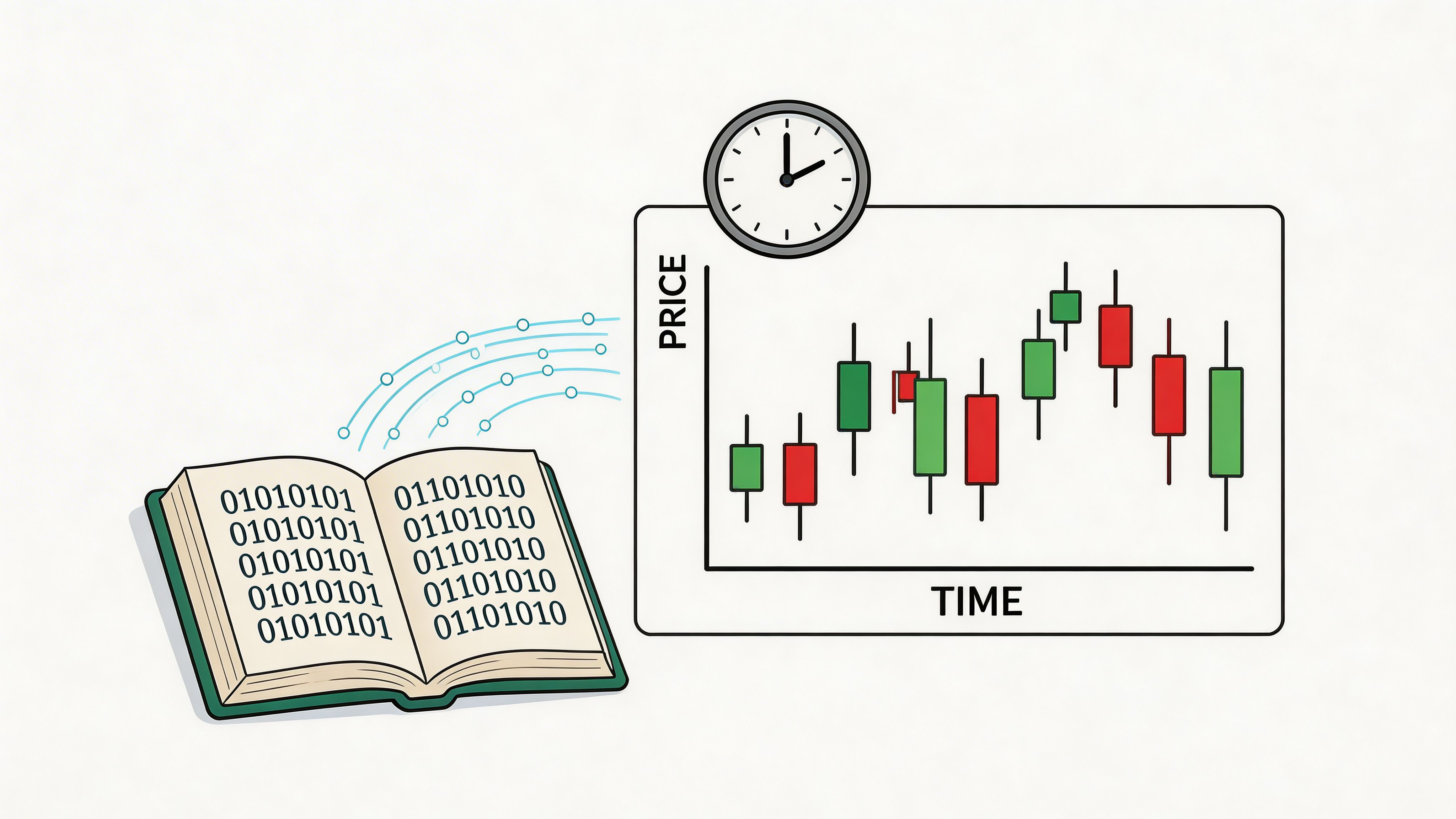

Historical data is where a wallet tracker stops being a balance checker and starts becoming an analytics product.

For CoinGecko, the workhorse endpoint is /coins/{id}/market_chart. It returns timestamped arrays for prices, market caps, and total volumes. CoinGecko states that it covers 18,000+ coins since 2014, with the last data point updated every 30 seconds, and shows example market_chart output such as prices: [1711929600000, 71246.95], market_caps up to 1401370211582.37, and total_volumes up to 30137418199.64 in the CoinGecko changelog documentation.

Use /market_chart when you need a time series for lines, overlays, drawdowns, or portfolio rollups.

Use /history when you need a specific day snapshot for auditing transactions or rebuilding a portfolio state at a past date.

Use OHLC when the UI or strategy needs candle structure rather than point-to-point series. For wallet products, line charts usually come first. Candles become important when users drill into token behavior around entries and exits.

import requestsimport pandas as pdAPI_KEY = "YOUR_PRO_KEY"coin_id = "bitcoin"url = f"https://pro-api.coingecko.com/api/v3/coins/{coin_id}/market_chart"params = {"vs_currency": "usd","days": "30"}headers = {"x-cg-pro-api-key": API_KEY}resp = requests.get(url, params=params, headers=headers, timeout=10)resp.raise_for_status()data = resp.json()prices_df = pd.DataFrame(data["prices"], columns=["timestamp", "price"])prices_df["timestamp"] = pd.to_datetime(prices_df["timestamp"], unit="ms")prices_df = prices_df.set_index("timestamp")market_caps_df = pd.DataFrame(data["market_caps"], columns=["timestamp", "market_cap"])market_caps_df["timestamp"] = pd.to_datetime(market_caps_df["timestamp"], unit="ms")market_caps_df = market_caps_df.set_index("timestamp")volumes_df = pd.DataFrame(data["total_volumes"], columns=["timestamp", "volume"])volumes_df["timestamp"] = pd.to_datetime(volumes_df["timestamp"], unit="ms")volumes_df = volumes_df.set_index("timestamp")merged = prices_df.join(market_caps_df).join(volumes_df)print(merged.tail())That structure is enough for most chart pages and enough for backtesting simple wallet-following ideas.

Keep the browser dumb. Parse and normalize series in your backend when possible.

async function getChartSeries(coinId, days = 30) {const url = new URL(`https://pro-api.coingecko.com/api/v3/coins/${coinId}/market_chart`);url.searchParams.set("vs_currency", "usd");url.searchParams.set("days", String(days));const res = await fetch(url, {headers: {"x-cg-pro-api-key": process.env.COINGECKO_PRO_KEY}});if (!res.ok) throw new Error(`CoinGecko error ${res.status}`);const json = await res.json();return json.prices.map(([ts, price]) => ({x: new Date(ts),y: price}));}For more examples on wiring price APIs into app features, this guide on crypto price API implementation patterns is worth reviewing.

A quick walkthrough is also useful before you wire the UI:

/simple/price for thatTip: Build standard chart windows such as 1D, 7D, 30D, and longer backfills on the server. Reusing normalized series saves more engineering time than it costs.

A wallet tracker breaks fast when it treats token symbols as identities. A user bridges USDC from Ethereum to Arbitrum, holds the native version on Polygon, then deposits a wrapped variant into a vault. If your pipeline keys on symbol = "USDC", you will merge unrelated assets in some cases and split the same economic exposure in others.

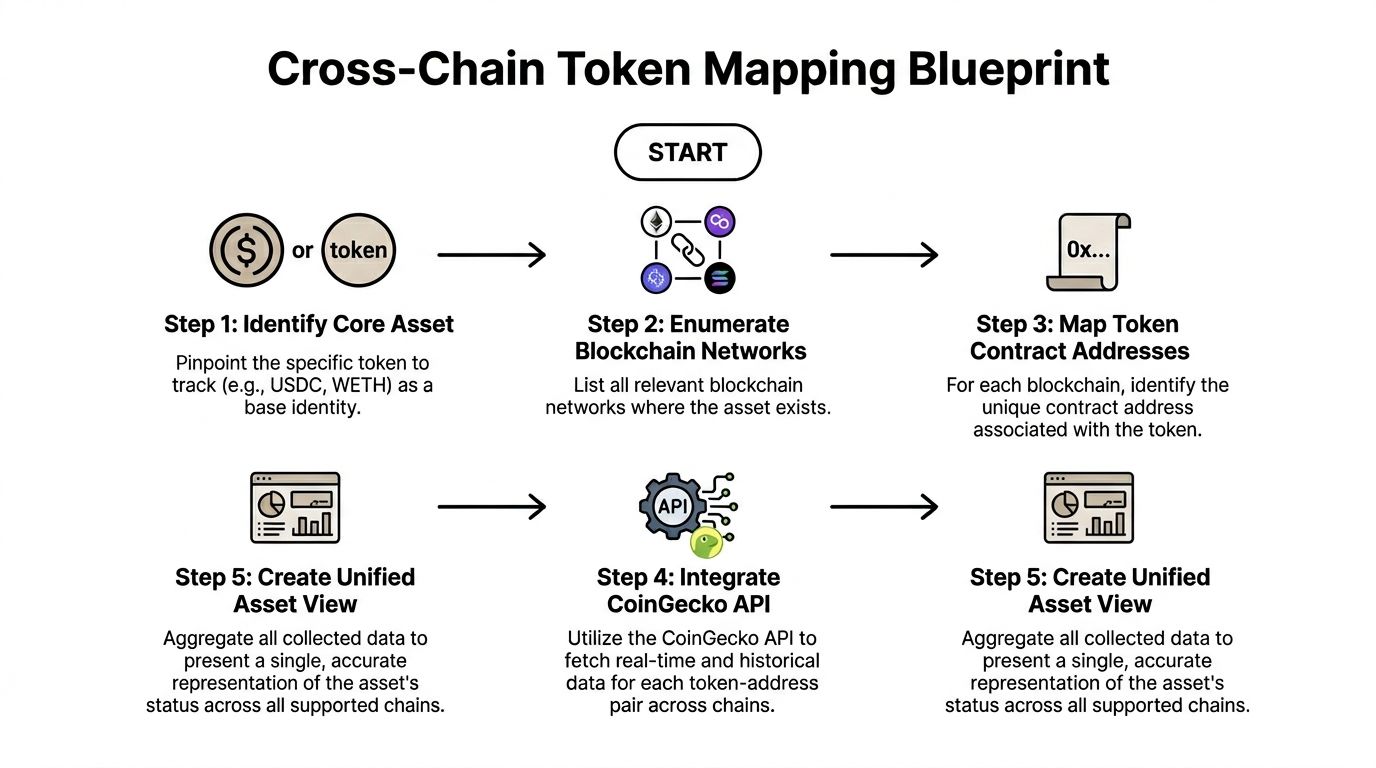

For high-frequency DeFi analytics, the mapping layer needs to answer one question quickly: given a (network, token_address) pair from an on-chain event, what canonical asset does it belong to, if any? CoinGecko gives you enough metadata to build that lookup, but the docs do not spell out the operational model. You need one.

Use a canonical asset record with chain-specific entries under it. In practice, that means your database stores CoinGecko's coin ID as the parent key, and stores one row per network contract beneath it.

A practical internal schema looks like this:

| Field | Purpose |

|---|---|

coin_gecko_id | Canonical asset identity |

symbol | UI label only |

platform_id | Network identifier |

contract_address | Chain-specific token identity |

decimals | Formatting and amount normalization |

is_native | Distinguish gas token vs contract token |

Add one more internal field if you care about speed: lookup_key = lower(platform_id) + ":" + lower(contract_address). That removes object juggling in hot paths and makes cache hits cheap.

Call /asset_platforms and map CoinGecko platform IDs to your chain registry. Do this once, store it locally, and never let raw chain labels from RPC providers flow straight into your asset mapper.

Chain naming drift causes more bugs than price math. polygon-pos, matic, and 137 may all refer to the same network in different parts of your stack.

Use /coins/{id} to read the platforms object. That object is the bridge between CoinGecko's coin ID and the contract addresses you see in wallet balances, transfers, and swap logs.

Do not fetch full coin payloads during every wallet sync. Pull them in a background job, then write the normalized mappings into your own store.

Your tracker will not search by coin ID first. It will usually start with a token address emitted by an event or returned by a balance API. Build a reverse index from (platform_id, contract_address) to coin_gecko_id, and keep it in Redis or another low-latency cache if refresh frequency is high.

This reverse index is the key product here.

New tokens, bridged wrappers, LP tokens, and vault receipts will regularly miss your first-pass mapping. Mark them as unresolved, save the raw network and contract data, and send them through an enrichment queue. Portfolio updates should continue without blocking on metadata.

def build_contract_index(client, coin_ids):contract_index = {}for coin_id in coin_ids:coin = client.get(f"/coins/{coin_id}", {"localization": "false"})platforms = coin.get("platforms", {}) or {}for platform_id, contract_address in platforms.items():if contract_address:contract_index[(platform_id.lower(), contract_address.lower())] = coin_idreturn contract_indexFor production, I would extend that pattern in three ways. First, persist the index instead of rebuilding from scratch. Second, track last_seen_at on each mapping so stale entries can be reviewed. Third, store unresolved contracts in a separate table so analysts can inspect repeated misses and decide whether they are wrappers, spam tokens, or assets CoinGecko has not linked yet.

USD Coin, USDC.e, and Bridged USDC may need different treatment depending on your product rules./coins/{id} inside the wallet refresh loop: that turns metadata into a latency bottleneck.One trade-off matters here. If you aggregate every chain representation under one canonical asset, portfolio totals look cleaner, but you lose some execution detail. If you keep each contract separate, analytics stay precise, but the UI gets noisy. The usual fix is to store both views. Keep contract-level holdings for attribution and PnL, then roll them up to the canonical asset in the presentation layer.

That approach stays boring, which is exactly what you want in a wallet tracker. Fast reads, deterministic mapping, and a clear unresolved path beat clever heuristics every time.

A wallet buys into a new pair at 09:14, adds size again six minutes later, and exits before your hourly price poll even runs. If your tracker only stores balances plus a reference price, that sequence disappears. Smart money analysis starts by treating on-chain activity as the primary record and market data as enrichment.

CoinGecko’s /onchain/* endpoints help with that, but the official guidance stays high level. The CoinGecko API best practices page explains authentication, caching, and rate-limit handling in general terms. It does not show how to build an event-first wallet pipeline, how to decide when a swap deserves pool hydration, or how to join pool-level context back to canonical asset pricing for PnL and alerting. Those are the parts that matter in a high-frequency DeFi tracker.

Start with two endpoint groups.

/onchain/simple/networks/{network}/token_price/{addresses}

Use this when your ingestion starts from raw transfer or swap events. You already have a contract address and a chain. Querying by those keys avoids symbol matching and cuts out a whole class of bad joins.

/onchain/networks/{network}/pools/{address}

Pool data gives the trade context your wallet tracker needs. The same token can trade in several pools with very different liquidity, routing behavior, and price quality. Without the pool layer, large buys in illiquid pairs look more important than they are.

For smart money work, a swap event should produce more than token_in, token_out, and usd_value.

Store at least:

wallet_addressnetworktx_hashblock_timestamppool_addresstoken_in_addresstoken_out_addressamount_in_rawamount_out_rawinitiatorcounterparty if your source provides itThen enrich in stages. First attach token prices. Next attach pool details for trades that pass your relevance rules, such as size threshold, known watched wallets, or first-entry behavior. Last, join your canonical asset mapping so portfolio valuation stays consistent with the rest of the app.

This pattern works well in production because it protects API budget and keeps latency predictable.

from dataclasses import dataclassfrom typing import Iterable, Dict, List, Optional@dataclassclass SwapEvent:network: strtx_hash: strblock_timestamp: intwallet_address: strpool_address: strtoken_in: strtoken_out: stramount_in_raw: stramount_out_raw: strdef normalize_address(addr: str) -> str:return addr.lower()def group_token_queries(events: Iterable[SwapEvent]) -> Dict[str, List[str]]:grouped = {}for e in events:network = e.networkgrouped.setdefault(network, set()).update([normalize_address(e.token_in),normalize_address(e.token_out),])return {k: sorted(v) for k, v in grouped.items()}def should_fetch_pool(event: SwapEvent, watched_wallets: set) -> bool:return event.wallet_address.lower() in watched_walletsdef enrich_events(events, cg_client, watched_wallets, canonical_map):token_queries = group_token_queries(events)token_prices = {}for network, addresses in token_queries.items():if not addresses:continuetoken_prices[network] = cg_client.get(f"/onchain/simple/networks/{network}/token_price/{','.join(addresses)}")pool_cache = {}enriched = []for event in events:network = event.networktoken_in = normalize_address(event.token_in)token_out = normalize_address(event.token_out)pool = normalize_address(event.pool_address)event_prices = {"token_in_price": token_prices.get(network, {}).get(token_in),"token_out_price": token_prices.get(network, {}).get(token_out),}pool_details = Noneif should_fetch_pool(event, watched_wallets):cache_key = f"{network}:{pool}"if cache_key not in pool_cache:pool_cache[cache_key] = cg_client.get(f"/onchain/networks/{network}/pools/{pool}")pool_details = pool_cache[cache_key]canonical_in = canonical_map.get((network, token_in))canonical_out = canonical_map.get((network, token_out))enriched.append({"event": event,"prices": event_prices,"pool": pool_details,"canonical_in": canonical_in,"canonical_out": canonical_out,})return enrichedThe important design choice is the order. Ingest the chain event first. Price it cheaply by network and contract. Fetch pool detail only after the event is worth the extra request. That keeps your queue focused on trades analysts care about.

A useful smart money signal is not “wallet bought token X.” It is “watched wallet accumulated token X through pool Y while liquidity was concentrated in that venue and before price discovery spread to deeper pools.”

That requires three separate views of the same event:

Execution view from the chain event itself

Which wallet traded, what contract moved, and through which pool.

Market context view from /onchain/networks/{network}/pools/{address}

Whether the pool is active enough to matter for your alerting rules.

Valuation view from your standard price path and canonical mapping

What the trade means for portfolio exposure, cost basis, and PnL across chains.

Keep those views separate in storage. Teams often collapse them into one “trade price” field, then later discover they cannot explain why a wallet alert fired or why portfolio value changed after a remap.

This is the fastest way to waste credits. Many swaps are routing noise, dust, or trades in pools that do not meet your product’s threshold for significance.

Pool context is useful for trade analysis. It is a poor default for account valuation if your app needs consistent cross-wallet PnL. Use your canonical pricing path for holdings. Attach pool data as event metadata.

If you only store the latest pool state, analysts cannot reconstruct why a trade looked important when it happened. Save the returned pool payload or the fields you depend on alongside the event.

A transfer into a wallet changes balances. A swap changes intent, cost basis, and market exposure. Your pipeline should classify them differently before enrichment.

One practical rule helps here. Use /onchain/* to explain execution and local market conditions. Use your canonical asset and historical price stack to calculate holdings, PnL, and portfolio-level analytics. That split keeps the tracker fast and keeps smart money signals interpretable.

Most API integrations do not fail because the provider is weak. They fail because the app asks for the wrong data too often.

That is especially true with coingecko api documentation because the docs make many things possible, but they do not force you into an efficient shape. You have to design that yourself.

Treat API data like a storage class problem.

/coins/list, asset platform data, and other mapping-heavy responses belong in a long-lived cache or database table. Refresh them on a schedule, not on demand.

Market lists, chart windows, and enriched token metadata should live in Redis or your app cache with endpoint-specific TTLs.

Fast prices and active wallet event enrichments need short refresh windows and strict batching.

The easiest win is /simple/price. It supports multiple coin IDs in one request, which means one batched call is better than many small ones when you render a portfolio or watchlist.

def get_prices(client, ids):return client.get("/simple/price", {"ids": ",".join(ids),"vs_currencies": "usd","include_market_cap": "true","include_24hr_change": "true"})If you pass around individual price requests inside loops, you are creating a scaling problem on purpose.

Endpoints like /coins/markets should always be paginated by explicit rules. Never “just fetch until done” in a request cycle serving users.

A better pattern:

CoinGecko exposes a /key endpoint for tracking rate limits and credits. Use it.

Log the remaining budget. Alert internally before you hit hard limits. If you do not instrument consumption, you will not know which feature is burning requests.

If your app grows, efficient API use becomes product quality, not backend polish.

Users experience inefficiency as:

A careful cache and batching strategy prevents those failures earlier than any retry loop will.

A good PnL tracker is a join problem. Transactions, token identity, current pricing, and historical snapshots all need to line up.

Assume you already have a user’s transaction history from your chain indexer. Each record includes network, token contract, amount, timestamp, and cost basis in your preferred quote currency.

Take each (network, contract_address) pair and look it up in your reverse contract index. If no mapping exists, send it to an enrichment queue and flag the holding as unresolved.

Aggregate transactions by canonical asset and chain-aware position rules. Your storage should separate a wallet’s token ledger from its display portfolio model.

Once you have the set of CoinGecko IDs, call /simple/price once for the active portfolio set.

coin_ids = sorted({pos["coin_gecko_id"] for pos in positions if pos.get("coin_gecko_id")})price_map = client.get("/simple/price", {"ids": ",".join(coin_ids),"vs_currencies": "usd"})portfolio_value = 0portfolio_cost = 0for pos in positions:coin_id = pos["coin_gecko_id"]amount = pos["net_amount"]cost_basis = pos["cost_basis_usd"]current_price = price_map.get(coin_id, {}).get("usd")if current_price is None:continuecurrent_value = amount * current_pricepos["current_value_usd"] = current_valuepos["pnl_usd"] = current_value - cost_basisportfolio_value += current_valueportfolio_cost += cost_basistotal_pnl = portfolio_value - portfolio_costIf you want a portfolio value-over-time view, pull /coins/{id}/market_chart for each major holding and combine the series against historical balances. That part is heavier, so precompute it in jobs rather than rebuilding it on every page load.

The best PnL trackers do three things well:

If you want a product-oriented view of how traders use these metrics, this guide on DeFi PnL tracking workflows gives useful context.

Tip: Users forgive delayed enrichment. They do not forgive silent mispricing.

Most CoinGecko errors are boring once you see the pattern. The fix is usually either identity, auth, or request pacing.

This usually means your parameters are malformed.

Common causes:

vs_currencyExample shape:

{"error": "bad_request"}Check your parameter builder first. Do not debug response parsing until the request is known-good.

This is usually an API key problem.

Common causes:

Example defensive pattern:

if resp.status_code in (401, 403):raise RuntimeError("Check CoinGecko API key, header name, and plan access")In practice, this often means the coin ID or path is wrong. It can also happen when you use the wrong network or address combination on on-chain routes.

{"error": "not_found"}Log the full request path and the exact normalized identifier you used.

This is the scaling error. Your app is sending requests faster than the current plan allows.

Use exponential backoff; fixing the caller is essential.

async function withBackoff(fn, retries = 4) {let delay = 500;for (let i = 0; i < retries; i++) {try {return await fn();} catch (err) {if (!String(err.message).includes("429")) throw err;await new Promise(r => setTimeout(r, delay));delay *= 2;}}throw new Error("Rate limit retries exhausted");}The durable fix is batching, caching, and endpoint budgeting. Retries only buy time.

If you want to turn these patterns into a working trading workflow instead of a pile of endpoints, Wallet Finder.ai is built for that job. It helps traders track smart money wallets across major ecosystems, study full trading histories, and act on wallet buys, swaps, and sells in real time without building the whole analytics stack from scratch.