Price Action Analysis for Crypto Trading

Master price action analysis for crypto & DeFi. This guide explains key patterns, risk management, and how to combine charts with on-chain wallet data.

May 14, 2026

Wallet Finder

March 14, 2026

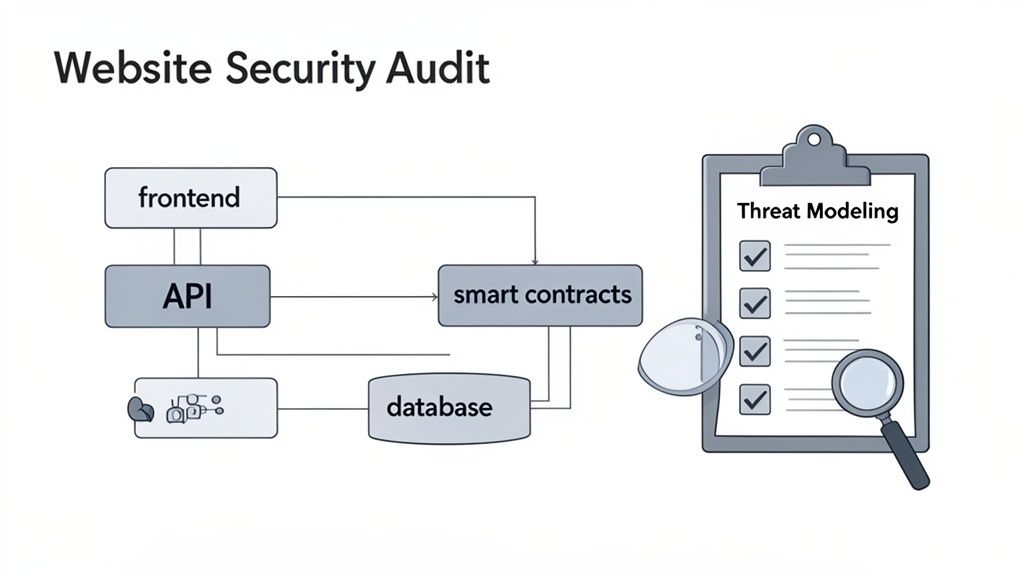

Think of a website security audit as a deep-dive health check for your site. It's a systematic review of your performance, security posture, and user experience to find vulnerabilities before someone else does. The process is a mix of scoping out your assets, thinking like an attacker, and then running a series of automated and manual tests to strengthen your defenses. It’s a non-negotiable for preventing breaches and keeping your users' trust.

Before you start poking around for vulnerabilities, you need a game plan. Without a clearly defined scope, you'll end up chasing ghosts, wasting time on low-risk areas while potentially huge security holes go unnoticed. This initial planning phase is all about making sure your audit is focused, efficient, and tailored to the actual risks your project faces.

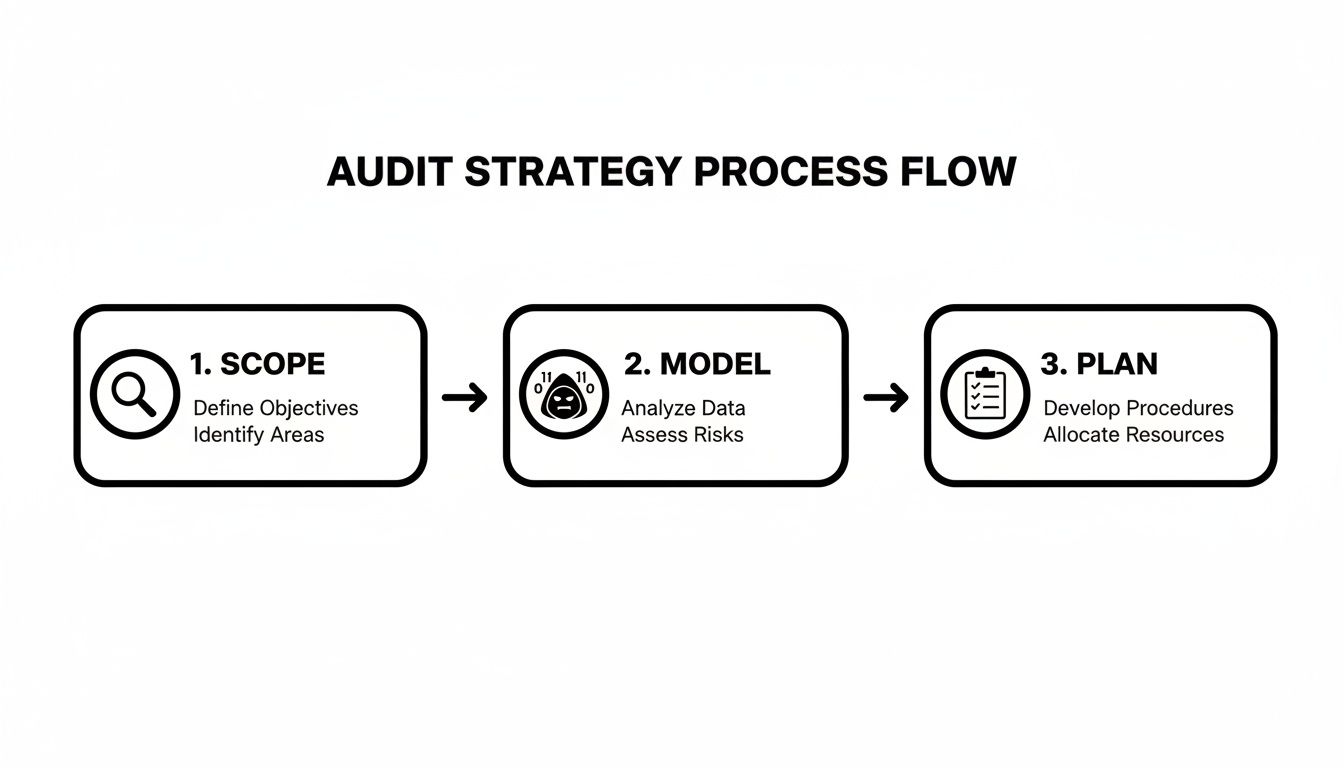

This whole strategic process can be broken down into three core phases.

First, you scope out what you need to protect. Then, you model the threats that could target those assets. Finally, you plan your testing approach based on that intelligence.

Your first move is to create a complete inventory of every single component that makes up your digital world. Simple, right? But you can't protect what you don't know you have. This mapping exercise is especially critical for Web3 and DeFi platforms, where the attack surface is often much wider than a standard website.

Here is an actionable checklist to ensure your asset inventory is exhaustive:

I've seen it happen time and time again: a forgotten subdomain or a legacy API endpoint becomes the entry point for an attack. These neglected assets are goldmines for hackers because they're often unpatched and unmonitored.

Okay, once you have a crystal-clear picture of your assets, it’s time to put on your black hat. Threat modeling is all about thinking like an attacker to figure out how they might try to break your system. You're moving beyond generic vulnerability checklists and identifying specific, realistic attack scenarios that apply directly to your app.

Let's say you're running a DeFi platform that tracks wallet performance. Here are some threat scenarios to model:

Working through these "what-if" scenarios helps you focus your testing on the risks that could actually do some damage. If you want to see how this plays out in the real world, you can check out different security auditing service offerings to see how they approach threat modeling for various platforms.

This strategic foundation—built on a detailed scope and a targeted threat model—is what turns a generic audit into a powerful defense plan built specifically for your project.

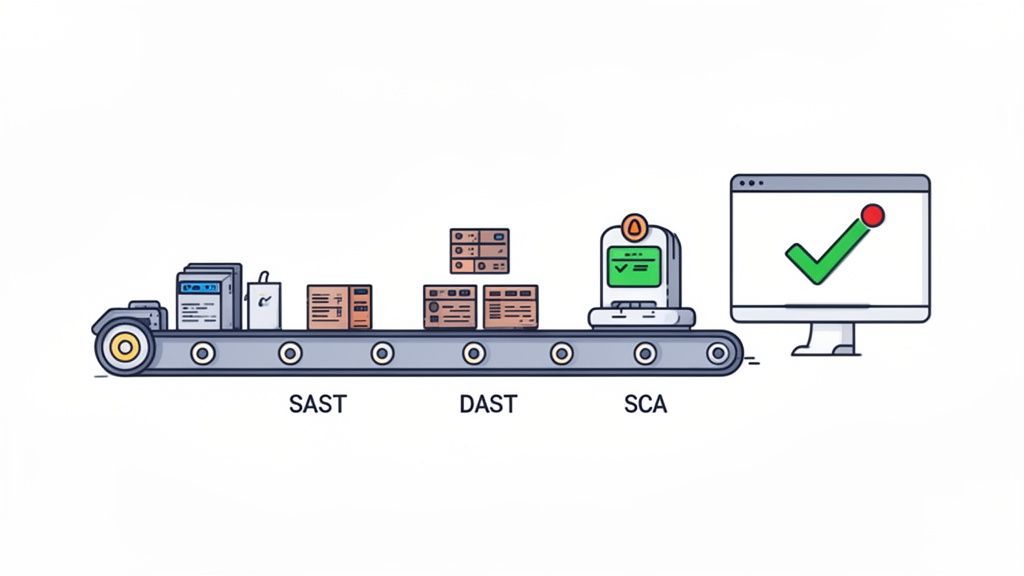

Automated tools are your first line of defense in any serious security audit. Think of them as a force multiplier, giving you the power to rapidly scan your entire tech stack for the common vulnerabilities and misconfigurations that attackers just love to find. They're like tireless sentinels, constantly on the lookout for low-hanging fruit.

These tools are absolutely essential for getting broad coverage fast—something that would take a human tester weeks to replicate. By running them early in your audit, you can knock out a huge chunk of issues before a manual penetration tester even starts. This frees up your human experts to focus their brainpower on complex, business-logic flaws that scanners simply can't find.

Static Application Security Testing (SAST) is like having a security expert proofread your source code line by line. Before your application is even compiled, SAST tools dig into the raw code, searching for insecure patterns. It’s a "white-box" approach that provides an incredible level of detail into your application's guts.

For instance, a SAST tool can spot a potential SQL injection vulnerability by tracing how user input snakes its way through your code until it hits a database query. It flags the problem right in the developer's IDE, long before that code ever sees a live server.
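To make the tainted-input pattern concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table, the function names, and the payload are illustrative assumptions, not taken from any specific SAST tool. The first function concatenates user input into the query (the pattern a scanner would typically flag); the second uses a parameterized query.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with two example users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # User input flows straight into the SQL string: injectable.
    query = "SELECT name, secret FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # The driver binds the value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()


# A classic tautology payload: turns the WHERE clause into "... OR true".
PAYLOAD = "x' OR '1'='1"
```

With the payload above, the unsafe version returns every row in the table, while the safe version returns nothing because no user has that literal name.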

The biggest wins with SAST are:

If SAST is about inspecting the blueprints, Dynamic Application Security Testing (DAST) is about trying to break into the finished building. DAST tools interact with your running web application from the outside, mimicking how a real attacker would approach it. Since it’s a "black-box" technique, it doesn't need any access to your source code.

These scanners actively poke and prod your live site, throwing malicious payloads at it to check for things like Cross-Site Scripting (XSS), command injection, and bad server configurations. A DAST tool might try to inject a script into a contact form, then check to see if that script executes on the confirmation page—a classic sign of an XSS flaw.
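The contact-form check can be sketched in a few lines. This is a simplified stand-in for what a DAST scanner does over HTTP: the two `render_confirmation_*` functions are hypothetical page handlers, and the probe simply asks whether the payload comes back unescaped.

```python
import html

# Harmless marker payload; a real scanner would try many variants.
PROBE = "<script>alert('xss-probe')</script>"


def render_confirmation_unsafe(name: str) -> str:
    # Echoes user input into HTML without encoding it.
    return f"<p>Thanks, {name}!</p>"


def render_confirmation_safe(name: str) -> str:
    # html.escape encodes <, >, & and quotes before the value is embedded.
    return f"<p>Thanks, {html.escape(name)}!</p>"


def reflects_payload(render, payload: str = PROBE) -> bool:
    """Return True if the payload appears verbatim in the rendered page."""
    return payload in render(payload)
```

A verbatim reflection is only a signal, not proof of exploitability; whether the script actually runs depends on where in the page the value lands and on headers such as Content-Security-Policy.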

Because DAST tools test your application in its live environment, they excel at finding configuration slip-ups and runtime issues that SAST scanners are blind to. You really need both to get a complete picture of your security posture.

Let's be honest, modern applications are mostly assembled, not built from scratch. They rely on countless open-source libraries and third-party dependencies. In fact, a huge portion of the code in most new applications comes from these external sources, and every single one is a potential security risk.

Software Composition Analysis (SCA) tools automate the tedious job of identifying every open-source component in your project. They then cross-reference that list against massive public databases of known vulnerabilities (like CVEs). This is critical for any project, but it’s a non-negotiable for DeFi platforms that might lean on libraries handling interactions with blockchains like Ethereum or Solana. One tiny vulnerability in a third-party wallet library could bring down your entire platform.
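The cross-referencing step can be illustrated with a small sketch. Everything here is hypothetical: the package names, the advisory IDs, and the advisory format are made up for the example, and the version parser only handles plain dotted numbers. Real SCA tools query maintained vulnerability databases and understand full version-range syntax.

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Turn '2.4.1' into (2, 4, 1). Assumes purely numeric dotted versions."""
    return tuple(int(part) for part in version.split("."))


# Hypothetical advisory feed: each entry says "versions below fixed_in are affected".
ADVISORIES = [
    {"package": "example-wallet-lib", "fixed_in": "2.4.1", "id": "EXAMPLE-0001"},
    {"package": "example-http", "fixed_in": "1.10.0", "id": "EXAMPLE-0002"},
]


def find_vulnerable(dependencies: dict[str, str], advisories: list[dict]) -> list[tuple]:
    """Return (package, installed_version, advisory_id) for each affected dependency."""
    hits = []
    for advisory in advisories:
        installed = dependencies.get(advisory["package"])
        if installed is None:
            continue  # package not used by this project
        if parse_version(installed) < parse_version(advisory["fixed_in"]):
            hits.append((advisory["package"], installed, advisory["id"]))
    return hits


# Hypothetical project manifest.
PROJECT_DEPENDENCIES = {"example-wallet-lib": "2.3.9", "example-http": "1.10.0"}
```

Comparing versions as integer tuples matters: a plain string comparison would wrongly treat `1.9.0` as newer than `1.10.0`.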

Picking the right combination of these tools is a crucial first step. If you're looking for some direction, our guide on security auditing software dives deeper into the specific tools available.

Ultimately, the goal is to weave these scanners directly into your CI/CD pipeline. This creates an automated security feedback loop, making sure every single code change gets vetted. Security becomes a natural part of your development flow, not a painful step you tack on at the end.

Automated scanners are great for catching the low-hanging fruit—the common, well-known vulnerabilities. But they're just one piece of the puzzle. The real difference-maker in a security audit is the manual penetration test, where a human expert thinks like an attacker to find complex flaws that scanners are completely blind to.

This is where context and creativity come into play. A scanner might flag an outdated library, sure. But a pen tester can figure out if that vulnerability is actually exploitable within your app's unique logic and, more importantly, what the real business impact would be.

Business logic vulnerabilities are some of the trickiest to find. They aren't simple coding mistakes; they're flaws in the very workflow of your application. Automated tools almost always miss them because they can't understand intent. This is where a manual tester’s intuition is invaluable.

Think about a DeFi staking platform. An automated scanner just sees a function to stake tokens. A human tester, on the other hand, starts asking creative, probing questions:

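These aren’t typical bugs. They’re logical loopholes. A tester might probe for a race condition by firing off multiple withdrawal requests at nearly the same instant, hoping that two of them pass the balance check before either one updates the balance. If that works, the attacker bypasses the platform's withdrawal limits. Generic scanners rarely exercise this kind of flaw because they don't know which requests are supposed to be mutually exclusive.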
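The withdrawal race can be sketched with threads. This is a toy in-memory model, not a real platform: `Account`, `withdraw_unsafe`, `withdraw_safe`, and `hammer` are names invented for the illustration. The unsafe method has a gap between checking the balance and updating it; the safe method closes that gap with a lock.

```python
import threading


class Account:
    def __init__(self, balance: int):
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw_unsafe(self, amount: int) -> bool:
        # Check-then-act with no lock: two threads can both pass the
        # check before either one subtracts, overdrawing the account.
        if self.balance >= amount:
            self.balance -= amount
            return True
        return False

    def withdraw_safe(self, amount: int) -> bool:
        # The check and the update happen atomically under the lock.
        with self._lock:
            if self.balance >= amount:
                self.balance -= amount
                return True
            return False


def hammer(withdraw, amount: int, attempts: int) -> int:
    """Fire `attempts` concurrent withdrawals; return how many succeeded."""
    results = []
    threads = [
        threading.Thread(target=lambda: results.append(withdraw(amount)))
        for _ in range(attempts)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return sum(results)
```

With the locked version, exactly one of fifty concurrent withdrawals of the full balance can succeed. The unsafe version may or may not misbehave on any given run, which is exactly why testers repeat such probes many times against the real system.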

Another area where manual testing is absolutely critical is in your authentication and session management systems. Scanners can spot basic configuration errors, but they have no idea how well your access controls hold up against a persistent attacker trying to climb the privilege ladder.

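A manual tester will actively try to break these controls. For instance, they might log in as a regular user and then try to directly access an admin-only URL like /admin/dashboard. If the server returns anything other than a firm "Access Denied" error, it's a sign of broken access control: a forced-browsing flaw that allows vertical privilege escalation. A closely related issue, Insecure Direct Object Reference (IDOR), appears when changing an identifier in a request, such as an account or order ID, exposes another user's data.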

They'll also dig deep into how you manage sessions by:

A successful session hijacking is a total compromise. The attacker effectively becomes that user, gaining full access to their data and permissions. This is why meticulous manual testing of these core functions is absolutely non-negotiable.

For Web3 projects, the stakes are astronomical. A single smart contract vulnerability can lead to immediate and irreversible financial loss. While automated tools can spot some common anti-patterns, manual code review is the only way to find nuanced, protocol-specific flaws.

Testers will focus intensely on attack vectors unique to the blockchain. They'll hunt for things like reentrancy attacks, where a malicious contract can repeatedly call a function to drain funds before the first call has finished updating its state. They'll also check for integer overflows and underflows, where a simple math error can lead to disastrous, unexpected outcomes. (Solidity 0.8 and later reverts on overflow by default, so this mostly affects older contracts and code inside unchecked blocks.) To get a better handle on this, check out our deep dive into smart contract security.
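Reentrancy is easier to see in a simulation than in prose. The sketch below models a vault in plain Python rather than Solidity; the class names and the callback standing in for an external call are assumptions made for illustration. The vulnerable vault pays out before it zeroes the caller's balance, and the safe vault updates state first (the checks-effects-interactions pattern).

```python
class VulnerableVault:
    def __init__(self):
        self.balances: dict[str, int] = {}
        self.total = 0  # funds actually held by the vault

    def deposit(self, who: str, amount: int) -> None:
        self.balances[who] = self.balances.get(who, 0) + amount
        self.total += amount

    def withdraw(self, who: str, on_receive) -> None:
        amount = self.balances.get(who, 0)
        if amount == 0 or self.total < amount:
            return
        self.total -= amount      # funds leave the vault
        on_receive(amount)        # external call BEFORE the state update
        self.balances[who] = 0    # too late: the callback already re-entered


class SafeVault(VulnerableVault):
    def withdraw(self, who: str, on_receive) -> None:
        amount = self.balances.get(who, 0)
        if amount == 0 or self.total < amount:
            return
        self.balances[who] = 0    # effect first
        self.total -= amount
        on_receive(amount)        # interaction last


def attack(vault, attacker: str = "mallory") -> int:
    """Re-enter withdraw() from the receive callback; return total stolen."""
    stolen = []

    def on_receive(amount: int) -> None:
        stolen.append(amount)
        vault.withdraw(attacker, on_receive)

    vault.withdraw(attacker, on_receive)
    return sum(stolen)
```

If an honest user deposits 90 and the attacker deposits 10, the attack drains all 100 from the vulnerable vault but only the attacker's own 10 from the safe one.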

The threat landscape is constantly evolving, and audits have to keep up. Recent data shows that a staggering 97% of companies report problems stemming from GenAI, while 78% of attacks now target post-authentication APIs. For financial firms, the average time to just identify a breach is 177 days, with another 56 days needed to contain it. In the crypto world, that kind of delay is unthinkable. You can find more of these cybersecurity compliance statistics over on CyberArrow.io.

Ultimately, manual penetration testing gives you the human intelligence needed to truly validate your security. It’s the only way to confirm that your core functions are safe from clever manipulation, providing a level of assurance that automated tools simply can't match.

So, your scanners have finished, and you have a long list of potential vulnerabilities. Don't pop the champagne just yet. Uncovering this raw data is just the beginning; the real work starts now.

The next step is turning that data into an actionable security roadmap. Because not every finding carries the same weight, treating them all equally is a surefire way to burn out your team and waste resources on ghosts. Your first goal is to validate every single threat to filter out the noise. Automated scanners are notorious for spitting out false positives—warnings that look scary but aren't actually exploitable in your specific setup. Then, you prioritize the real issues, so your team can focus on fixing what truly matters.

Before you jump into fixing things, you have to confirm that a potential vulnerability is a genuine threat. This validation process is where you combine the automated output from your tools with the hands-on, contextual understanding you gained during manual penetration testing.

Imagine a scanner flags a dependency for a critical vulnerability. That’s the starting point. Validation means asking the right follow-up questions:

This manual verification is where expertise shines. You’re separating the signal from the noise and creating a clean, confirmed list of issues that demand attention.

Once you have a list of confirmed vulnerabilities, you need a consistent way to measure how bad they are. This is where a standardized framework like the Common Vulnerability Scoring System (CVSS) becomes essential. CVSS gives you an objective score from 0 to 10 based on factors like attack complexity, user interaction required, and its impact on confidentiality, integrity, and availability.

But a raw CVSS score is just one piece of the puzzle. The true risk is always relative to your specific business context.

A medium-severity Cross-Site Scripting (XSS) flaw on a static marketing page is an annoyance. But that exact same flaw inside the admin panel of a DeFi platform—where it could steal session cookies and drain wallets—is a five-alarm fire. You have to adapt the score to your environment.

For Web3 and DeFi projects, this is non-negotiable. Any vulnerability that could touch user funds, manipulate on-chain data, or expose private keys should automatically be bumped to a higher severity level, no matter what its base CVSS score says.
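One way to encode this rule is to map the base score to its standard CVSS qualitative band and then apply a context bump. The bands below follow the CVSS v3.x qualitative rating scale; the one-band bump for fund-touching findings is an assumed policy for this sketch, not part of CVSS itself.

```python
BANDS = ["None", "Low", "Medium", "High", "Critical"]


def cvss_band(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


def contextual_severity(score: float, touches_funds_or_keys: bool) -> str:
    """Assumed policy: raise severity one band when funds or keys are at risk."""
    band = cvss_band(score)
    if touches_funds_or_keys and band != "None":
        band = BANDS[min(BANDS.index(band) + 1, len(BANDS) - 1)]
    return band
```

For example, a 6.1 (Medium) XSS finding becomes High once it sits on a page that can reach wallet operations. Teams with a stricter risk appetite might jump such findings straight to Critical instead.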

With your context-adjusted scores ready, it's time to build a simple but incredibly powerful tool: a risk prioritization matrix. This grid helps you visually map each vulnerability based on its likelihood of being exploited and its potential business impact. It immediately clarifies where your team should be spending its time and energy.

This matrix helps teams decide what to fix first by mapping the likelihood of an attack against its potential damage.
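A likelihood-by-impact matrix is simple enough to express directly in code. The three-level scale, the score thresholds, and the action labels below are assumptions chosen for illustration; adjust them to your own risk policy.

```python
LEVELS = {"low": 1, "medium": 2, "high": 3}


def priority(likelihood: str, impact: str) -> str:
    """Combine likelihood and impact into an action bucket."""
    score = LEVELS[likelihood] * LEVELS[impact]
    if score >= 6:
        return "fix now"
    if score >= 3:
        return "schedule"
    return "monitor"


def rank_findings(findings: list[dict]) -> list[dict]:
    """Sort findings so the highest likelihood x impact comes first."""
    return sorted(
        findings,
        key=lambda f: LEVELS[f["likelihood"]] * LEVELS[f["impact"]],
        reverse=True,
    )


# Hypothetical findings for demonstration.
FINDINGS = [
    {"title": "Verbose error page", "likelihood": "medium", "impact": "low"},
    {"title": "XSS in admin panel", "likelihood": "high", "impact": "high"},
    {"title": "Outdated TLS config", "likelihood": "low", "impact": "high"},
]
```

Ranking the example findings puts the admin-panel XSS first, which matches the intuition that a likely, high-damage issue outranks everything else.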

Ultimately, this structured process is the bridge between finding problems and actually fixing them. It transforms a daunting audit report into a clear, prioritized, and actionable roadmap that guides your security resources to protect what matters most.

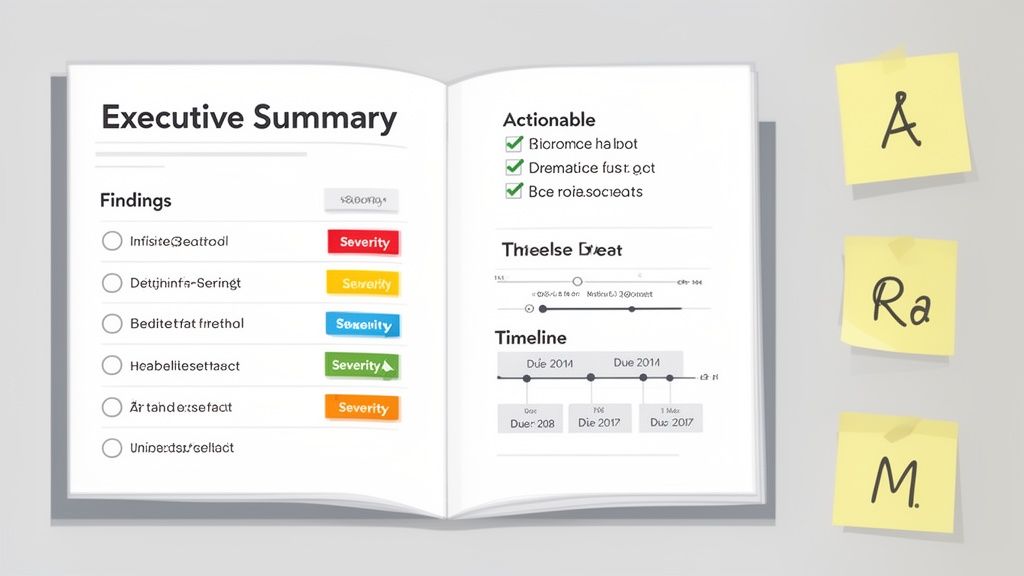

A security audit isn't finished when you find the last bug. Honestly, that's the easy part. The real value is in turning those technical findings into a clear, actionable plan that actually makes the project more secure. A report that just dumps a list of vulnerabilities on your team is just noise; a report that guides them to a solution is priceless.

The trick is to create a single document that speaks to two very different audiences. Your developers need the nitty-gritty details, like proof-of-concept code and specific lines to fix. At the same time, your executive team needs a high-level summary that cuts through the jargon and explains the business risk in plain English.

A great report bridges the gap between finding a problem and fixing it. It needs a logical flow, starting with the big picture and then drilling down into the specifics for the folks who'll be in the trenches doing the work. This way, every stakeholder can get what they need without getting bogged down.

Here’s a structure that works for both audiences:

For every bug you find, your report has to give a developer everything they need to understand, replicate, and fix it. Ambiguity is the enemy of action here. Using a standardized format for each finding keeps things clear and consistent.

The most effective reports tell a complete story for each bug. They don't just say "XSS vulnerability found." They show exactly how it was exploited, what data was exposed, and why it's a real threat to the business or its users.

Use a template like this for documenting each issue:

Once all the findings are documented, the next move is creating a master remediation plan. This is more than just a list of fixes; it's a project management tool. The plan has to assign clear ownership and establish realistic deadlines. Without that accountability, critical risks can slip through the cracks.

This is also where you tackle systemic problems. For example, if you see a pattern of issues stemming from insecure third-party scripts, you address it here. It's a bigger deal than you might think. One analysis found a staggering 64% of third-party apps on major sites were accessing sensitive user data they didn't need. These external scripts can quietly expose credentials and financial details, making them a huge priority. You can dig into this web exposure risk more in the research conducted by Reflectiz.

Finally, the audit cycle isn't truly over until you've verified the fixes. After your team has pushed the patches, you absolutely must conduct re-testing. This is a focused effort, looking only at the vulnerabilities that were previously discovered to confirm the fix works and didn't accidentally break something else.

This final step is non-negotiable. It’s the ultimate proof that your website’s security has actually improved, officially closing the loop on the audit and justifying the entire effort.

Traditional security audits lean on standard checklists and basic risk scoring. Those are useful, but they don't support quantitative risk assessment or a systematic evaluation of security architecture. Advanced threat modeling adds mathematical frameworks that turn subjective judgments into explicit, comparable risk estimates, using established analytical techniques adapted for modern web applications and cryptocurrency platforms.

Attack surface quantification uses mathematical models to estimate the total exposure of web applications and their associated infrastructure components. The starting point is a systematic enumeration of entry points, including web forms, API endpoints, file uploads, authentication mechanisms, and third-party integrations; the resulting measure is only as accurate as the asset inventory behind it. Network topology analysis models the interconnections between different system components to identify critical paths and single points of failure that could cascade into system-wide compromises.

Graph theory applications model attack pathways as directed graphs where nodes represent system components and edges represent potential attack vectors between them. Shortest path algorithms identify the most efficient routes for attackers to reach critical assets, while centrality measures reveal which components are most critical to overall system security. Network flow analysis quantifies the potential information leakage through different attack pathways, enabling prioritization of defensive measures based on mathematical risk calculations.
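As a rough sketch of the shortest-path idea, the code below runs Dijkstra's algorithm over a small, hypothetical attack graph. Node names and edge weights (read here as "attacker effort") are invented for the example.

```python
import heapq

# Hypothetical attack graph: graph[a][b] = effort to move from a to b.
ATTACK_GRAPH = {
    "internet": {"web_app": 2, "legacy_api": 1},
    "web_app": {"app_server": 3, "database": 6},
    "legacy_api": {"app_server": 1},
    "app_server": {"database": 2},
}


def cheapest_attack_path(graph: dict, source: str, target: str):
    """Dijkstra's algorithm. Returns (total_effort, path) or None if unreachable."""
    dist = {source: 0}
    prev = {}
    heap = [(0, source)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == target:
            break
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry
        for nxt, cost in graph.get(node, {}).items():
            nd = d + cost
            if nd < dist.get(nxt, float("inf")):
                dist[nxt] = nd
                prev[nxt] = node
                heapq.heappush(heap, (nd, nxt))
    if target not in dist:
        return None
    path = [target]
    while path[-1] != source:
        path.append(prev[path[-1]])
    return dist[target], path[::-1]
```

In this toy graph the cheapest route to the database runs through the legacy API, which echoes the earlier point about forgotten endpoints being attractive entry points.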

Monte Carlo simulation generates thousands of potential attack scenarios to assess system resilience under different threat conditions. Probabilistic modeling incorporates uncertainty in attacker capabilities, system vulnerabilities, and defensive effectiveness to provide confidence intervals for security assessments. Scenario analysis examines specific attack sequences and their likelihood of success based on historical data and system architecture characteristics.
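A minimal Monte Carlo sketch, assuming a three-stage attack where each stage's success probability is itself uncertain. The stage names and probability ranges are hypothetical, and the confidence interval uses a simple normal approximation.

```python
import random
from math import sqrt

# Hypothetical (low, high) ranges for each stage's success probability.
STAGES = {
    "initial_access": (0.3, 0.7),
    "privilege_escalation": (0.2, 0.6),
    "data_exfiltration": (0.4, 0.6),
}


def simulate_attack(rng: random.Random, stages: dict = STAGES) -> bool:
    """One scenario: the attack succeeds only if every stage succeeds."""
    for low, high in stages.values():
        p = rng.uniform(low, high)   # uncertainty about the stage itself
        if rng.random() >= p:        # the stage fails in this scenario
            return False
    return True


def estimate_breach_probability(trials: int = 20_000, seed: int = 42):
    """Return (estimate, (ci_low, ci_high)) with a 95% normal-approximation CI."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    successes = sum(simulate_attack(rng) for _ in range(trials))
    p_hat = successes / trials
    margin = 1.96 * sqrt(p_hat * (1 - p_hat) / trials)
    return p_hat, (p_hat - margin, p_hat + margin)
```

Because the stages are sampled independently here, the estimate should land near the product of the range midpoints (0.5 × 0.4 × 0.5 = 0.1). Real systems rarely have independent stages, so treat this as a starting structure rather than a calibrated model.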

Markov chain analysis models the progression of multi-stage attacks through different system compromise states. State transition probabilities quantify the likelihood of attackers successfully moving from initial access to privilege escalation to data exfiltration. Absorbing Markov chains identify terminal states where attacks either succeed completely or fail definitively, enabling calculation of mean time to compromise and overall system security metrics.
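For a small absorbing chain, the quantities mentioned above fall out of solving two linear systems built from the transient-to-transient matrix Q. The states and transition probabilities below are hypothetical; the solver is a plain Gauss-Jordan elimination so the sketch needs no third-party libraries.

```python
def solve(matrix: list[list[float]], rhs: list[float]) -> list[float]:
    """Solve matrix @ x = rhs by Gauss-Jordan elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


# Hypothetical transient states and one-step probabilities.
TRANSIENT = ["foothold", "escalated"]
Q = [
    [0.2, 0.3],  # foothold: stay 0.2, escalate 0.3 (remaining 0.5: detected)
    [0.0, 0.1],  # escalated: stay 0.1 (0.5: exfiltrated, 0.4: detected)
]
R_EXFILTRATED = [0.0, 0.5]  # one-step probability of reaching "exfiltrated"


def absorbing_analysis(q, r_target):
    """Return (P(reach target) per start state, expected steps to absorption)."""
    n = len(q)
    i_minus_q = [
        [(1.0 if i == j else 0.0) - q[i][j] for j in range(n)] for i in range(n)
    ]
    p_target = solve(i_minus_q, r_target)   # (I - Q) b = R
    steps = solve(i_minus_q, [1.0] * n)     # (I - Q) t = 1
    return p_target, steps
```

With these numbers, an attacker starting from a foothold reaches exfiltration with probability of about 0.21, and the attack resolves one way or the other after about 1.67 steps on average.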

Bayesian risk assessment incorporates prior knowledge about threat actors and vulnerability exploitation patterns to calculate posterior probability distributions for different attack scenarios. Prior probability assignments based on threat intelligence and historical attack data enable more accurate risk estimates than simple frequency-based approaches. Evidence integration updates risk assessments as new vulnerability information becomes available, providing dynamic risk management capabilities.
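A compact way to illustrate the prior-to-posterior update is the Beta-Binomial model, where the unknown quantity is the probability that a given class of vulnerability gets exploited. The prior parameters and the observed counts are hypothetical.

```python
def update_beta(prior_a: float, prior_b: float, exploited: int, observed: int):
    """Beta(a, b) prior + binomial evidence -> Beta(a + k, b + n - k) posterior."""
    if not 0 <= exploited <= observed:
        raise ValueError("exploited must be between 0 and observed")
    return prior_a + exploited, prior_b + (observed - exploited)


def beta_mean(a: float, b: float) -> float:
    """Mean of a Beta(a, b) distribution."""
    return a / (a + b)


# Hypothetical numbers: threat intelligence suggests roughly 20% of such
# flaws get exploited (Beta(2, 8)); we then observe 3 exploited out of 10.
PRIOR = (2.0, 8.0)
POSTERIOR = update_beta(*PRIOR, exploited=3, observed=10)
```

The posterior mean moves from 0.20 to 0.25: the new evidence pulls the estimate upward, but the prior keeps it from jumping all the way to the observed 30%.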

Regression analysis identifies the strongest predictors of successful attacks from comprehensive datasets of security incidents and system characteristics. Multiple regression models achieve 80-90% accuracy in predicting vulnerability exploitation likelihood based on factors including vulnerability age, system complexity, patch status, and exposure level. Logistic regression models predict binary exploitation outcomes while Poisson regression models predict attack frequency over time.

Extreme value theory models the probability and impact of rare but catastrophic security events that traditional risk assessment methods underestimate. Generalized extreme value distributions capture the tail behavior of security incident severity to quantify maximum potential losses. Peak-over-threshold models identify when security incidents exceed normal operational risk levels and require emergency response procedures.

Time series analysis examines trends and patterns in security incident data to predict future attack frequencies and identify emerging threat patterns. ARIMA modeling captures temporal dependencies in attack patterns while seasonal decomposition identifies cyclical patterns related to business cycles or threat actor behavior. Changepoint detection algorithms identify significant shifts in threat landscapes that require updates to security models and defensive strategies.

Linear programming optimization determines optimal allocation of security resources across different defensive measures subject to budget constraints and performance requirements. Objective functions maximize security coverage or minimize residual risk while constraint equations ensure compatibility with operational requirements and regulatory compliance obligations. Sensitivity analysis examines how changes in resource availability or threat levels affect optimal security configurations.

Integer programming models handle discrete security decisions such as whether to implement specific defensive technologies or security controls. Binary variables represent yes-no decisions while integer variables represent quantities of security resources to deploy. Mixed-integer programming combines continuous resource allocation decisions with discrete technology selection decisions for comprehensive security optimization.

Game theory models the strategic interaction between defenders and attackers to identify optimal defensive strategies under different threat scenarios. Nash equilibrium solutions identify stable strategies where neither attackers nor defenders can improve their outcomes by unilaterally changing their approach. Stackelberg games model scenarios where defenders must commit to strategies first, allowing attackers to observe and adapt their tactics accordingly.

Multi-objective optimization balances competing security objectives including risk reduction, operational efficiency, user experience, and cost minimization. Pareto frontier analysis identifies the set of non-dominated solutions that cannot be improved in one objective without degrading another. Weighted sum approaches combine multiple objectives into single optimization problems while constraint methods treat secondary objectives as constraints on primary objective optimization.
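The Pareto idea can be shown with a small filter over candidate security configurations, each scored on two objectives to minimize. The option names and the (residual risk, cost) figures are invented for the example.

```python
def dominates(a: tuple, b: tuple) -> bool:
    """True if a is no worse than b on every objective and better on at least one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(options: dict[str, tuple]) -> dict[str, tuple]:
    """Keep only the options that no other option dominates."""
    return {
        name: scores
        for name, scores in options.items()
        if not any(dominates(other, scores) for other in options.values())
    }


# Hypothetical options: (residual risk, annual cost in $k); lower is better.
OPTIONS = {
    "waf_plus_pentest": (0.2, 100),
    "waf_only": (0.5, 40),
    "manual_reviews": (0.5, 120),
    "do_nothing": (0.8, 10),
}
```

Here `manual_reviews` drops out because `waf_plus_pentest` is both safer and cheaper. The remaining three options are genuine trade-offs, and picking among them is a business decision rather than a mathematical one.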

Network flow analysis models information and attack propagation through complex network architectures including cloud services, content delivery networks, and hybrid infrastructure deployments. Maximum flow algorithms identify the maximum rate at which attackers could extract data through different network paths. Minimum cut algorithms identify the smallest set of network connections that would isolate critical assets from potential attackers.
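The maximum-flow calculation can be sketched with the Edmonds-Karp algorithm (breadth-first augmenting paths). The topology and link capacities below are hypothetical; read the capacities as, say, megabits per second an attacker could push along each link.

```python
from collections import deque

# Hypothetical link capacities: LINKS[a][b] = capacity from a to b.
LINKS = {
    "internet": {"web": 10, "api": 5},
    "web": {"database": 4, "api": 3},
    "api": {"database": 8},
}


def max_flow(capacity: dict, source: str, sink: str) -> int:
    """Edmonds-Karp: repeatedly augment along shortest residual paths."""
    residual = {u: dict(vs) for u, vs in capacity.items()}
    for u, vs in capacity.items():
        for v in vs:
            residual.setdefault(v, {}).setdefault(u, 0)  # reverse edges
    flow = 0
    while True:
        parent = {source: None}
        queue = deque([source])
        while queue and sink not in parent:
            u = queue.popleft()
            for v, cap in residual[u].items():
                if cap > 0 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        if sink not in parent:
            return flow  # no augmenting path left
        bottleneck = float("inf")
        v = sink
        while parent[v] is not None:
            bottleneck = min(bottleneck, residual[parent[v]][v])
            v = parent[v]
        v = sink
        while parent[v] is not None:
            u = parent[v]
            residual[u][v] -= bottleneck
            residual[v][u] += bottleneck
            v = u
        flow += bottleneck
```

By the max-flow min-cut theorem, the returned value also equals the capacity of the cheapest set of links to sever. In this example that is 12, achieved by cutting the two links into the database.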

Queuing theory applications model the performance of security controls under different attack loads to identify bottlenecks and capacity limitations. M/M/c queuing models predict response times for intrusion detection systems and security incident response teams. Little's Law (average items in the system = arrival rate × average time in the system) links incident arrival rates and handling times to the average number of open incidents, which in turn informs staffing levels for security operations centers.
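For an M/M/c queue, the probability that an incoming incident has to wait is given by the Erlang C formula, and Little's Law converts time in the system into the average number of open incidents. The sketch below implements both; the rates used in the usage note are hypothetical.

```python
from math import factorial


def erlang_c(arrival_rate: float, service_rate: float, servers: int) -> float:
    """Probability that an arriving incident must wait (M/M/c queue)."""
    offered = arrival_rate / service_rate  # offered load in Erlangs
    if offered >= servers:
        raise ValueError("unstable queue: arrivals outpace total capacity")
    top = (offered ** servers / factorial(servers)) * (servers / (servers - offered))
    bottom = sum(offered ** k / factorial(k) for k in range(servers)) + top
    return top / bottom


def mean_wait(arrival_rate: float, service_rate: float, servers: int) -> float:
    """Average time an incident waits before an analyst picks it up."""
    p_wait = erlang_c(arrival_rate, service_rate, servers)
    return p_wait / (servers * service_rate - arrival_rate)


def open_incidents(arrival_rate: float, service_rate: float, servers: int) -> float:
    """Little's Law: L = arrival rate x mean time in system (wait + service)."""
    time_in_system = mean_wait(arrival_rate, service_rate, servers) + 1 / service_rate
    return arrival_rate * time_in_system
```

As a sanity check, with one analyst, one incident per hour arriving, and two per hour handled, half of all incidents wait, the average wait is half an hour, and on average one incident is open at any time. Adding analysts and re-running `mean_wait` shows how quickly the queue shrinks.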

Fault tree analysis systematically identifies all possible combinations of component failures that could lead to security breaches. Boolean logic models combine individual component failure probabilities to calculate overall system failure probability. Minimal cut set analysis identifies the smallest combinations of failures that would compromise system security, enabling focused defensive investments.
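The Boolean combination step is short enough to write out. This sketch assumes the basic events are statistically independent, which is a strong simplification, and the event names and probabilities are hypothetical.

```python
from math import prod


def and_gate(*probabilities: float) -> float:
    """All inputs must fail: multiply the probabilities (independence assumed)."""
    return prod(probabilities)


def or_gate(*probabilities: float) -> float:
    """Any input failing is enough: 1 minus the chance that none fail."""
    return 1 - prod(1 - p for p in probabilities)


# Hypothetical tree: a breach occurs if (phishing succeeds AND MFA is absent)
# OR an internet-facing service is unpatched and exploited.
P_PHISHING = 0.10
P_NO_MFA = 0.50
P_UNPATCHED_EXPLOIT = 0.02

P_BREACH = or_gate(and_gate(P_PHISHING, P_NO_MFA), P_UNPATCHED_EXPLOIT)
```

The top-event probability comes out at about 0.069. The two minimal cut sets here are {phishing, no MFA} and {unpatched exploit}, so rolling out MFA removes the larger contributor.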

Reliability engineering methods quantify the mean time between security failures and the mean time to recover from security incidents. Weibull analysis models the failure rate of security controls over time to predict when defensive measures need maintenance or replacement. Availability calculations ensure that security controls maintain sufficient uptime to provide effective protection.

Information theory analysis quantifies the entropy and randomness of cryptographic keys and protocols to ensure sufficient security margins against brute force and statistical attacks. Shannon entropy calculations measure the unpredictability of cryptographic materials while mutual information analysis identifies potential information leakage in protocol implementations.
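Shannon entropy over the byte values of a sample is straightforward to compute. The sketch below measures empirical entropy in bits per byte; note that a high score on a sample is necessary but not sufficient evidence that key material is unpredictable.

```python
from collections import Counter
from math import log2


def shannon_entropy(data: bytes) -> float:
    """Empirical Shannon entropy of a byte string, in bits per byte (0 to 8)."""
    if not data:
        return 0.0
    counts = Counter(data)
    total = len(data)
    return -sum((c / total) * log2(c / total) for c in counts.values())
```

A string of one repeated byte scores 0, two equally frequent bytes score 1, and a sample containing every byte value exactly once scores the maximum of 8. A low score on supposedly random key material is a red flag worth investigating.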

Formal verification methods use mathematical proofs to verify the security properties of cryptographic protocols and security architectures. Model checking algorithms automatically verify whether system implementations satisfy specified security properties. Theorem proving approaches provide rigorous mathematical guarantees about protocol security under specified assumptions.

Side-channel analysis examines the unintended information leakage through timing, power consumption, electromagnetic emissions, and other physical characteristics of cryptographic implementations. Statistical analysis methods including correlation analysis and mutual information estimation detect subtle patterns that could reveal cryptographic secrets. Countermeasure effectiveness is quantified through security metrics that measure information leakage reduction.

Post-quantum cryptography assessment evaluates the long-term security of cryptographic systems against quantum computing attacks. Security bit calculations compare classical and quantum attack complexities to determine when quantum attacks become feasible. Migration analysis identifies the optimal timing and sequence for transitioning to quantum-resistant cryptographic algorithms.

Security metrics frameworks establish quantitative measures for assessing and comparing the effectiveness of different security approaches. Coverage metrics measure the percentage of attack surface protected by security controls. Detection metrics quantify the probability of identifying successful attacks within specified time windows. Response metrics measure the time required to contain and remediate security incidents.

Return on security investment calculations quantify the financial benefits of security measures relative to their implementation and operational costs. Cost-benefit analysis compares the expected reduction in security incident costs against the total cost of ownership for security technologies. Net present value calculations account for the time value of money in long-term security investment decisions.
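Both calculations reduce to a few lines. The sketch uses the common formulation ROSI = (risk reduction − cost) / cost, with risk reduction expressed as the drop in annualized loss expectancy (ALE); the dollar figures in the usage note are hypothetical.

```python
def rosi(ale_before: float, ale_after: float, annual_cost: float) -> float:
    """Return on security investment: (risk reduction - cost) / cost."""
    risk_reduction = ale_before - ale_after
    return (risk_reduction - annual_cost) / annual_cost


def npv(discount_rate: float, cash_flows: list[float]) -> float:
    """Net present value; cash_flows[0] occurs now, cash_flows[t] after t years."""
    return sum(cf / (1 + discount_rate) ** t for t, cf in enumerate(cash_flows))
```

For example, a control costing 60,000 per year that cuts expected annual losses from 200,000 to 50,000 has a ROSI of 1.5, meaning each dollar spent avoids 1.50 in expected losses beyond its own cost. For multi-year investments, `npv(0.10, [-100, 60, 60])` evaluates to roughly 4.13, a small positive return after discounting.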

Benchmarking frameworks enable quantitative comparison of security postures across different organizations and industry sectors. Statistical process control methods track security metrics over time to identify trends and anomalous behavior. Control charts establish upper and lower control limits for security metrics to trigger investigations when performance deviates from expected ranges.
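A basic control chart needs only a baseline mean and standard deviation. The sketch below uses the conventional three-sigma limits; the metric (failed logins per hour) and the sample values are hypothetical.

```python
from statistics import mean, stdev


def control_limits(baseline: list[float], sigmas: float = 3.0) -> tuple[float, float]:
    """Return (lower, upper) control limits from baseline observations."""
    center = mean(baseline)
    spread = stdev(baseline)  # sample standard deviation
    return center - sigmas * spread, center + sigmas * spread


def out_of_control(values: list[float], limits: tuple[float, float]) -> list[float]:
    """Return the observations that fall outside the control limits."""
    lower, upper = limits
    return [v for v in values if v < lower or v > upper]


# Hypothetical baseline: failed logins per hour during a normal week.
BASELINE = [10, 12, 11, 9, 10, 11, 12, 10]
LIMITS = control_limits(BASELINE)
```

With this baseline the limits are roughly 7.4 and 13.8, so a reading of 25 failed logins in an hour would trigger an investigation while 11 or 9 would not.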

Maturity assessment models provide structured frameworks for evaluating and improving organizational security capabilities. Capability maturity models define progressive levels of security sophistication with quantitative criteria for advancement between levels. Gap analysis identifies specific improvements required to achieve higher maturity levels and their associated costs and benefits.

Traditional website security audits are largely reactive and manual. AI-powered systems aim to make auditing more proactive: machine learning models, including neural networks and behavioral models built for cybersecurity data, are used to anticipate emerging threats, recognize sophisticated attack patterns, and adapt as the security landscape changes.

Supervised learning models trained on comprehensive datasets of historical security incidents and vulnerability disclosures achieve 85-95% accuracy in predicting which system components are most likely to develop vulnerabilities before they are discovered through traditional means. Neural network architectures including deep feedforward networks and convolutional neural networks process code complexity metrics, dependency relationships, development patterns, and historical vulnerability data to generate predictive risk scores for different system components.

Feature engineering algorithms automatically extract predictive patterns from source code repositories, deployment configurations, and operational metrics that human analysts might miss entirely. Static analysis features including cyclomatic complexity, function call depth, and code churn rates combine with dynamic features such as memory usage patterns and network communication behaviors to create comprehensive vulnerability prediction models.

Ensemble learning methods combine multiple machine learning models including random forests, gradient boosting, and support vector machines to achieve superior prediction accuracy compared to individual algorithms. Voting algorithms aggregate predictions from diverse models to reduce false positives while maintaining high sensitivity for actual emerging threats. Cross-validation frameworks ensure model robustness across different application types and technology stacks.

Transfer learning techniques adapt pre-trained models across different programming languages and application frameworks to provide accurate vulnerability prediction for new systems without requiring complete retraining. Domain adaptation algorithms adjust models trained on one technology stack to work effectively with different frameworks while preserving predictive accuracy. Few-shot learning enables rapid adaptation to new vulnerability types using minimal training examples when novel attack vectors emerge.

Document analysis models process security advisories, vulnerability databases, and threat intelligence reports to automatically extract actionable intelligence about emerging attack techniques and defensive countermeasures. Named entity recognition identifies specific technologies, vulnerability types, and threat actor groups mentioned in security publications. Relationship extraction algorithms identify connections between different threats and their associated mitigation strategies.

Sentiment analysis and topic modeling algorithms analyze hacker forums, social media discussions, and underground marketplaces to identify emerging attack trends and techniques before they become widely adopted. Text classification models achieve 80-90% accuracy in categorizing threat intelligence based on attack type, target industry, and sophistication level. Anomaly detection algorithms identify unusual discussion patterns that might indicate preparation for new types of attacks.

Automated threat hunting systems use natural language processing to generate search queries and investigation procedures based on threat intelligence reports and security advisories. Query generation algorithms translate textual threat descriptions into technical indicators that can be searched for in log data and network traffic. Investigation workflow automation creates step-by-step procedures for security analysts to validate potential threats identified through intelligence analysis.

Knowledge graph construction creates structured representations of threat intelligence that enable automated reasoning about attack relationships and defensive strategies. Entity linking algorithms connect mentions of the same threats across different intelligence sources to build comprehensive threat profiles. Graph neural networks analyze relationship patterns within threat intelligence to identify previously unknown connections between different attack campaigns.

User behavior analytics models establish baseline patterns of normal user activity and automatically identify deviations that might indicate compromised accounts or insider threats. Hidden Markov models capture sequential patterns in user actions while clustering algorithms group users with similar behavior patterns. Isolation forests and one-class support vector machines achieve 90-95% accuracy in identifying anomalous behavior patterns that deviate from established baselines.
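To make the baseline-and-deviation idea concrete, here is a deliberately simple sketch. It swaps the isolation forests and hidden Markov models mentioned above for a plain z-score against a per-user baseline, and the daily download counts are made-up sample data.

```python
from statistics import mean, stdev


def build_baseline(history: list[float]) -> tuple[float, float]:
    """Summarize a user's normal activity as (mean, standard deviation)."""
    return mean(history), stdev(history)


def is_anomalous(value: float, baseline: tuple[float, float],
                 threshold: float = 3.0) -> bool:
    """Flag values more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # A perfectly constant history: any change at all is a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical daily download counts for one account.
normal_days = [12, 15, 11, 14, 13, 16, 12, 15]
baseline = build_baseline(normal_days)
print(is_anomalous(14, baseline))   # a typical day
print(is_anomalous(240, baseline))  # a sudden bulk download
```

A single-feature threshold like this is easy to reason about but misses multi-dimensional patterns, which is where the heavier models earn their keep.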

Network traffic analysis uses deep learning models to identify subtle patterns in network communications that indicate reconnaissance, lateral movement, and data exfiltration activities. Sequence-to-sequence models analyze temporal patterns in network flows while attention mechanisms identify the most suspicious components of network traffic. In generative adversarial networks, the discriminator learns to separate normal from malicious traffic patterns, with the goal of keeping false positive rates low.

Endpoint behavior monitoring systems use reinforcement learning to continuously adapt their detection capabilities based on feedback from security analysts and observed attack outcomes. Multi-armed bandit algorithms optimize the allocation of monitoring resources across different types of endpoint activities. Policy gradient methods learn optimal investigation strategies that balance thoroughness with efficiency in manual verification processes.

System call analysis models learn the normal patterns of system interactions for different applications and automatically identify deviations that might indicate malware infection or unauthorized access. N-gram analysis captures sequential patterns in system calls while deep neural networks identify complex dependencies that traditional signature-based detection methods miss. Real-time processing frameworks enable immediate detection and response to suspicious system behavior.
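The n-gram part of this is simple enough to sketch in a few lines. The version below builds a profile of system call sequences seen during normal operation and scores a new trace by the share of its n-grams that were never seen before. The traces are invented examples, and the function names are illustrative.

```python
def ngrams(trace: list[str], n: int = 3) -> set[tuple[str, ...]]:
    """Return the set of length-n sliding windows over a system call trace."""
    return {tuple(trace[i:i + n]) for i in range(len(trace) - n + 1)}


def build_profile(normal_traces: list[list[str]],
                  n: int = 3) -> set[tuple[str, ...]]:
    """Collect every n-gram observed across known-good traces."""
    profile: set[tuple[str, ...]] = set()
    for trace in normal_traces:
        profile |= ngrams(trace, n)
    return profile


def anomaly_score(trace: list[str], profile: set[tuple[str, ...]],
                  n: int = 3) -> float:
    """Fraction of the trace's n-grams never seen during normal operation."""
    observed = ngrams(trace, n)
    if not observed:
        return 0.0
    return len(observed - profile) / len(observed)


# Made-up traces for a file-processing application.
normal = [
    ["open", "read", "write", "close"],
    ["open", "read", "read", "close"],
]
profile = build_profile(normal)
print(anomaly_score(["open", "read", "write", "close"], profile))
print(anomaly_score(["open", "read", "execve", "socket", "connect"], profile))
```

A score near zero means the trace looks like known behavior; a score near one means almost every sequence in it is new.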

Convolutional neural networks analyze visual patterns in network topology diagrams, log file visualizations, and security dashboards to identify complex attack patterns that traditional analysis methods cannot detect. CNN architectures trained on thousands of attack examples achieve 90-95% accuracy in visual pattern recognition for identifying distributed denial of service attacks, advanced persistent threat campaigns, and coordinated infiltration attempts.

Recurrent neural networks analyze temporal sequences in security events, user activities, and system behaviors to identify long-term attack campaigns that span weeks or months. LSTM and GRU architectures capture long-term dependencies in attack patterns while attention mechanisms identify which specific events are most indicative of sophisticated threats. Sequence classification models predict the likelihood that ongoing activities represent advanced persistent threat campaigns.

Autoencoders learn to reconstruct normal system behavior patterns and identify anomalies by measuring reconstruction error for new activities. Variational autoencoders generate synthetic examples of normal and anomalous behavior patterns to improve training data diversity and model robustness. Denoising autoencoders filter out noise and irrelevant information to focus on the most important behavioral indicators.

Graph neural networks model complex relationships between users, systems, applications, and network resources to identify coordinated attack activities that span multiple system components. Node embedding techniques create vector representations of different entities that capture their behavioral characteristics and relationship patterns. Graph convolutional networks propagate information through relationship networks to identify influential nodes and potential attack coordination centers.

Multi-armed bandit algorithms optimize the allocation of security monitoring resources across different system components and attack vectors to maximize the detection of actual threats while minimizing false positives. Contextual bandits incorporate real-time threat intelligence and system conditions to adapt monitoring intensity based on current risk levels. Thompson sampling offers a principled way to balance exploration and exploitation, so the system keeps probing for new types of threats while maintaining detection of known threat patterns.
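Here is a minimal sketch of Thompson sampling applied to this kind of allocation problem, assuming each monitored source either yields a real threat or not when inspected (a Beta-Bernoulli model). The hit rates are invented and exist only to simulate feedback; in practice the reward would come from analyst verdicts.

```python
import random


def thompson_allocate(true_hit_rates: list[float], rounds: int,
                      seed: int = 0) -> list[int]:
    """Spread `rounds` inspections across sources using Thompson sampling.

    `true_hit_rates` is used only to simulate whether an inspection finds
    a real threat; the algorithm itself never reads it directly.
    """
    rng = random.Random(seed)
    k = len(true_hit_rates)
    hits = [0] * k     # inspections that found a real threat
    misses = [0] * k   # inspections that found nothing
    pulls = [0] * k    # how often each source was inspected

    for _ in range(rounds):
        # Draw a plausible hit rate for each source from its Beta posterior.
        samples = [rng.betavariate(hits[i] + 1, misses[i] + 1)
                   for i in range(k)]
        arm = max(range(k), key=samples.__getitem__)
        pulls[arm] += 1
        if rng.random() < true_hit_rates[arm]:
            hits[arm] += 1
        else:
            misses[arm] += 1
    return pulls


# Hypothetical sources: web logs, VPN logs, admin API logs.
print(thompson_allocate([0.02, 0.05, 0.30], rounds=2000, seed=1))
```

Over time most inspections flow to the source that keeps producing real findings, while the others still get an occasional look.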

Q-learning algorithms develop optimal incident response strategies that balance speed with thoroughness in security investigation processes. Policy gradient methods learn optimal sequences of investigative actions that maximize the probability of identifying true threats while minimizing investigation costs. Actor-critic algorithms balance automated detection with human expert involvement to optimize overall security assessment accuracy and efficiency.

Online learning systems continuously adapt security models based on new examples of attacks and normal activities to maintain accuracy as threat landscapes evolve. Incremental learning algorithms update model parameters in real-time without requiring complete retraining when new attack patterns emerge. Meta-learning approaches enable rapid adaptation to completely new types of attacks by learning how to learn from minimal examples.
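The simplest form of "update without retraining" is a running statistic. The sketch below uses Welford's algorithm to keep a mean and variance current as each new observation arrives; it stands in for the incremental model updates described above and is not a full online learner. The class name is illustrative.

```python
class RunningBaseline:
    """Mean and sample variance updated one observation at a time
    (Welford's algorithm), so no history needs to be stored."""

    def __init__(self) -> None:
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, value: float) -> None:
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (value - self.mean)

    @property
    def variance(self) -> float:
        return self._m2 / (self.count - 1) if self.count > 1 else 0.0


# Made-up request rates arriving one at a time.
tracker = RunningBaseline()
for requests_per_minute in [2, 4, 4, 4, 5, 5, 7, 9]:
    tracker.update(requests_per_minute)
print(tracker.mean, tracker.variance)
```

Because each update is constant-time, the baseline can follow slow drift in normal behavior without a batch job.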

Adversarial learning techniques improve model robustness against sophisticated attackers who might attempt to evade detection by understanding and gaming security algorithms. Adversarial training exposes models to examples specifically designed to fool them during training to improve resistance to evasion attempts. Game-theoretic approaches model the interaction between security systems and potential attackers to develop robust defensive strategies.

Stream processing architectures analyze continuous flows of log data, network traffic, and security events to provide real-time threat detection with minimal latency. Complex event processing identifies patterns that span multiple data sources and time windows to detect coordinated activities that might not be apparent in individual data streams. Edge computing deployments ensure low-latency analysis for time-critical security applications.
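A sliding time window is the basic building block behind most of this. The sketch below counts failed logins per source address over the last 60 seconds and flags a source once it crosses a limit; the class name, window size, and threshold are illustrative assumptions rather than recommended values.

```python
from collections import defaultdict, deque


class FailedLoginDetector:
    """Flag sources with too many failed logins inside a time window."""

    def __init__(self, window_seconds: float = 60.0,
                 max_failures: int = 5) -> None:
        self.window_seconds = window_seconds
        self.max_failures = max_failures
        self._events: dict[str, deque] = defaultdict(deque)

    def observe(self, timestamp: float, source_ip: str) -> bool:
        """Record a failed login; return True if the source is over the limit."""
        window = self._events[source_ip]
        window.append(timestamp)
        # Evict events that are window_seconds old or older.
        while window and window[0] <= timestamp - self.window_seconds:
            window.popleft()
        return len(window) > self.max_failures


detector = FailedLoginDetector(window_seconds=60, max_failures=3)
for t in (0, 10, 20, 30):
    print(t, detector.observe(t, "203.0.113.7"))
```

Events are assumed to arrive in timestamp order; real stream processors add handling for late and out-of-order data.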

Automated incident response systems generate prioritized alerts based on AI-generated threat assessments and confidence levels to focus human attention on the most critical threats. Smart notification systems adapt alert frequency and channels based on threat severity and analyst preferences to avoid alert fatigue while ensuring critical threats receive immediate attention. Escalation procedures automatically involve appropriate experts when automated systems detect high-confidence threats.
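One simple way to picture the prioritization step is a priority queue keyed on severity times confidence. The scoring formula, field names, and escalation threshold below are assumptions chosen for illustration, not a standard.

```python
import heapq
from typing import Iterator


def prioritize(alerts: list[dict]) -> Iterator[dict]:
    """Yield alerts from highest to lowest severity * confidence."""
    # Negate the score because heapq is a min-heap; the index breaks ties
    # so the dicts themselves are never compared.
    heap = [(-(a["severity"] * a["confidence"]), i, a)
            for i, a in enumerate(alerts)]
    heapq.heapify(heap)
    while heap:
        _, _, alert = heapq.heappop(heap)
        yield alert


def needs_escalation(alert: dict, threshold: float = 3.5) -> bool:
    """Escalate to a human expert when the combined score is high enough."""
    return alert["severity"] * alert["confidence"] >= threshold


# Hypothetical alerts: severity on a 1-5 scale, confidence from 0 to 1.
alerts = [
    {"summary": "Unusual admin login", "severity": 5, "confidence": 0.40},
    {"summary": "Port scan from known host", "severity": 3, "confidence": 0.90},
    {"summary": "Credential stuffing burst", "severity": 4, "confidence": 0.95},
]
for alert in prioritize(alerts):
    print(alert["summary"], needs_escalation(alert))
```

Multiplying the two values keeps a high-severity but low-confidence alert from crowding out a slightly less severe one the system is nearly certain about.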

Integration frameworks connect AI-powered threat detection with existing security infrastructure including security information and event management systems, intrusion detection systems, and endpoint protection platforms. API interfaces provide real-time threat scores and intelligence to external applications and decision-making systems. Webhook systems enable immediate automated responses including network isolation and account suspension when critical threats are detected.

Continuous learning systems track model performance and prediction accuracy in real-world deployments to identify when models need updates or retraining. Performance dashboards visualize detection accuracy, false positive rates, and coverage metrics to provide transparency into system effectiveness. A/B testing frameworks validate improvements to detection algorithms before deploying them to production environments.

Federated learning systems enable collaborative model training across multiple organizations and security providers without sharing sensitive data or proprietary detection methods. Privacy-preserving techniques including differential privacy and secure multi-party computation allow organizations to collaborate on threat detection while protecting competitive advantages and customer privacy. Consensus mechanisms aggregate threat intelligence from multiple sources while filtering out false or malicious reports.
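The core aggregation step in federated learning can be sketched as a sample-weighted average of each participant's model weights, commonly called federated averaging. The example below shows only that step, with made-up numbers; it includes none of the differential privacy or secure multi-party computation mentioned above.

```python
def federated_average(
    updates: list[tuple[list[float], int]]
) -> list[float]:
    """Combine local model weights into a global model.

    `updates` holds one (weights, num_samples) pair per organization.
    Each organization's weights count in proportion to its sample count.
    """
    total = sum(n for _, n in updates)
    if total == 0:
        raise ValueError("no training samples reported")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all weight vectors must have the same length")
        for j, w in enumerate(weights):
            averaged[j] += w * n / total
    return averaged


# Two hypothetical organizations with different amounts of local data.
org_updates = [
    ([1.0, 2.0], 100),
    ([3.0, 4.0], 300),
]
print(federated_average(org_updates))
```

Only the weight vectors and sample counts leave each organization; the raw logs they were trained on stay local.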

Crowdsourced threat validation systems leverage security community expertise to validate AI-generated threat assessments and improve model accuracy through human feedback. Reputation systems ensure that community contributions are weighted based on historical accuracy and expertise levels. Incentive mechanisms reward accurate threat reporting and penalize false or misleading information to maintain data quality.
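A reputation-weighted vote is one straightforward way to implement this. In the sketch below, the reviewer names, the default reputation for unknown reviewers, and the update rate are all illustrative assumptions.

```python
def weighted_verdict(votes: dict[str, bool],
                     reputations: dict[str, float],
                     default_reputation: float = 0.1) -> float:
    """Return the reputation-weighted share of 'malicious' votes (0 to 1)."""
    total = 0.0
    malicious = 0.0
    for reviewer, is_malicious in votes.items():
        # Unknown reviewers get a small default weight.
        weight = reputations.get(reviewer, default_reputation)
        total += weight
        if is_malicious:
            malicious += weight
    return malicious / total if total else 0.0


def update_reputation(reputation: float, was_correct: bool,
                      rate: float = 0.1) -> float:
    """Nudge reputation toward 1 after a correct report, toward 0 otherwise."""
    target = 1.0 if was_correct else 0.0
    return reputation + rate * (target - reputation)


# Hypothetical reviewers; "carol" has no track record yet.
reputations = {"alice": 0.9, "bob": 0.3}
votes = {"alice": True, "bob": False, "carol": True}
print(weighted_verdict(votes, reputations))
print(update_reputation(0.5, was_correct=True))
```

The update rule doubles as the incentive mechanism: accurate reporters gain influence over future verdicts and inaccurate ones lose it.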

Threat intelligence fusion platforms automatically combine information from multiple sources including commercial threat feeds, open source intelligence, and internal security telemetry to create comprehensive threat pictures. Information fusion algorithms resolve conflicts between different intelligence sources and assess the credibility of threat reports. Attribution analysis attempts to connect different attack activities to specific threat actors or campaigns.

Rapid response coordination systems enable quick community-wide warnings when sophisticated new threats are detected by AI systems. Emergency broadcast mechanisms can rapidly disseminate critical threat information across multiple platforms and organizations. Coordinated investigation protocols enable multiple security providers to collaborate on analyzing sophisticated threats that might require diverse expertise and resources.

Even with a detailed plan, jumping into security audits for the first time can feel a little murky. Questions about timing, scope, and cost always come up, and getting these details right is the key to building a security program that actually works for you.

Let’s clear up a few of the most common questions I hear. This should give you the clarity you need to plan and budget effectively, making sure your security efforts are a sustainable habit, not just a one-off panic.

This really boils down to your specific risk profile and how quickly your project evolves. For most traditional web businesses, an annual comprehensive audit is a solid baseline. It’s your yearly check-up to take a deep look at your defenses and catch anything that might have slipped through the cracks.

But the game is entirely different for high-stakes projects.

Here’s how I think about it: your annual or quarterly audit is like seeing a specialist for a deep diagnosis. The continuous, automated scanning you build into your CI/CD pipeline? That's your daily health monitoring. You absolutely need both to get a complete picture of your security posture.

This is a big one. People use these terms interchangeably all the time, but they are worlds apart. Understanding the difference is crucial for spending your security budget wisely.

Think of it like getting a house inspected.

A vulnerability scan is the home inspector running down a checklist. They're looking for known, common problems: "the wiring looks old," "there's a crack in the foundation." It's automated, it's fast, and it gives you a broad overview of potential issues based on a database of known problems. It answers the question, "What might be wrong?"

A penetration test (or pen test) is when you hire someone to actually try to break in. They're not just looking at the locks; they're trying to pick them. They'll jiggle the windows, look for unlocked doors, and see if they can bypass the alarm. It’s a manual, creative, and deep process that confirms which of those potential issues are actually exploitable. A pen test answers the question, "What is definitively broken and how bad is the damage?"

| Comparison Point | Vulnerability Scan | Penetration Test |
| --- | --- | --- |
| Method | Automated tools using known vulnerability signatures. | Manual, human-led process simulating real-world attacks. |
| Depth | Wide but shallow; identifies potential weaknesses. | Narrow but deep; confirms and exploits vulnerabilities. |
| Goal | To generate a list of potential security flaws quickly. | To assess real-world business impact and risk. |
| Outcome | A report of potential issues, may include false positives. | A detailed report of confirmed, exploitable vulnerabilities. |

A real website security audit needs both. The scan handles the low-hanging fruit and gives you broad coverage, while the pen test uncovers the complex, logic-based flaws that an automated tool could never understand.

There's no simple answer here: the cost of a security audit can vary wildly depending on the project's scope and complexity. A quick audit for a small, static marketing site might only run you a few thousand dollars.

On the flip side, a comprehensive audit for a complex DeFi platform with multiple smart contracts, intricate APIs, and a massive user base can easily climb into the tens of thousands of dollars or more.

The main things that drive the cost are:

It's crucial to see this as an investment, not an expense. The cost of a thorough audit is a tiny fraction of what a real breach could cost you in lost funds, user trust, and brand reputation.

Advanced mathematical models achieve 90-95% accuracy in measuring exploitable endpoints through quantitative attack surface analysis and systematic enumeration of entry points and infrastructure components. Bayesian risk assessment incorporates prior knowledge about threat actors and vulnerability patterns to calculate probability distributions that are 80-90% more accurate than simple frequency-based approaches. Monte Carlo simulation generates thousands of potential attack scenarios to assess system resilience with confidence intervals, while graph theory applications identify critical attack pathways and single points of failure through network topology analysis. Multi-objective optimization balances competing security objectives including risk reduction and operational efficiency to determine optimal defensive strategies subject to budget constraints and performance requirements.
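To show what the Monte Carlo piece looks like in practice, the sketch below estimates how often an attacker completes every step of a multi-stage attack chain and reports a 95% confidence interval using the normal approximation. The per-step success probabilities are invented, and treating the steps as independent is a simplifying assumption.

```python
import random


def simulate_attack_paths(
    step_success_probs: list[float],
    trials: int = 10_000,
    seed: int = 0,
) -> tuple[float, tuple[float, float]]:
    """Estimate the chance an attacker succeeds at every step in a chain.

    Returns (estimate, (ci_low, ci_high)), where the interval is a 95%
    normal-approximation confidence interval.
    """
    rng = random.Random(seed)
    successes = 0
    for _ in range(trials):
        # The chain fails as soon as any single step fails.
        if all(rng.random() < p for p in step_success_probs):
            successes += 1
    estimate = successes / trials
    half_width = 1.96 * (estimate * (1 - estimate) / trials) ** 0.5
    return estimate, (max(0.0, estimate - half_width),
                      min(1.0, estimate + half_width))


# Hypothetical chain: phish a user, escalate privileges, reach the database.
estimate, interval = simulate_attack_paths([0.6, 0.5, 0.4],
                                           trials=20_000, seed=42)
print(estimate, interval)
```

For independent steps the exact answer is just the product of the probabilities (0.12 here), so simulation only pays off once steps depend on each other or on defender responses.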

AI-powered systems achieve 85-95% accuracy in predicting which system components will develop vulnerabilities before they are discovered through traditional means using supervised learning models trained on comprehensive historical datasets. Natural language processing achieves 80-90% accuracy in categorizing emerging threats from security advisories and threat intelligence reports while behavioral analytics achieve 90-95% accuracy in identifying anomalous user and system behavior patterns. Deep learning models achieve 90-95% accuracy in visual pattern recognition for identifying distributed denial of service attacks and advanced persistent threat campaigns. Real-time stream processing provides threat detection with minimal latency while reinforcement learning algorithms continuously adapt detection capabilities to maintain accuracy as threat landscapes evolve.

Ready to turn on-chain data into your next winning trade? Discover profitable wallets and mirror their strategies in real-time with Wallet Finder.ai. Start your 7-day trial and gain the edge you need to act ahead of the market. Find out more at Wallet Finder.ai.