Bullish Abandoned Baby: Candlestick Pattern Mastery

Master the powerful bullish abandoned baby pattern. Learn identification, backtested reliability, and trading rules with on-chain examples.

April 12, 2026

Wallet Finder

Most traders don’t need more alerts. They need fewer false positives, faster context, and a way to spot intent before the move becomes obvious.

This is where swarm node ai becomes interesting. Not because it’s magical, and not because every “AI agent” pitch in crypto deserves attention. It matters because on-chain trading has become too fragmented for one dashboard, one analyst, or one model to keep up with in real time.

A good trader already watches wallet flows, liquidity changes, token launches, bridge activity, and narrative rotation. The problem is coordination. One signal means nothing without the others. By the time you connect them manually, the trade is crowded.

Swarm systems try to solve that by turning research into a team sport. One agent watches new contracts. Another checks wallet relationships. Another scores behavior that looks like rotation, distribution, or insider accumulation. A fourth writes the summary and flags what deserves action. In theory, that’s the jump from reactive tracking to active discovery.

In practice, it’s powerful, expensive in the wrong setup, and still unreliable in the places that matter most.

You're already running the standard workflow. Telegram alerts. A few wallet watchlists. DEX activity on one screen, charting on another, and a notebook full of half-finished theses on why a wallet bought one low-cap token before the rest of the cluster moved.

That setup works until it doesn’t. The wall most traders hit isn’t lack of data. It’s lack of synthesis.

The trader who wins early usually isn’t reading more dashboards. They’re combining signals faster. They see a deployer wallet fund a fresh address. They notice related wallets touch the same ecosystem. They catch liquidity placement before the social posts start. Then they act while everyone else is still trying to check on-chain activity.

Classic analytics stacks are good at showing activity. They’re weaker at coordinating interpretation.

A single rules-based alert can tell you:

It usually can’t tell you whether those events belong together, whether they fit a known wallet pattern, or whether the setup looks more like accumulation than noise.

That’s the promise behind swarm node ai. Instead of one model trying to do everything, you split the work across specialized agents. The result isn’t just another alert feed. It’s a research layer that can keep context alive across many moving parts.

Practical rule: If your workflow still depends on you manually cross-checking every alert against wallets, pools, and transaction history, you don’t have an intelligence system. You have an inbox.

The attraction isn’t automation by itself. Plenty of automated systems just create automated garbage.

What matters is whether the swarm can produce a tradable output such as:

That’s where swarm node ai starts to move from marketing phrase to useful trading primitive. But only if the architecture, costs, and failure modes are understood first.

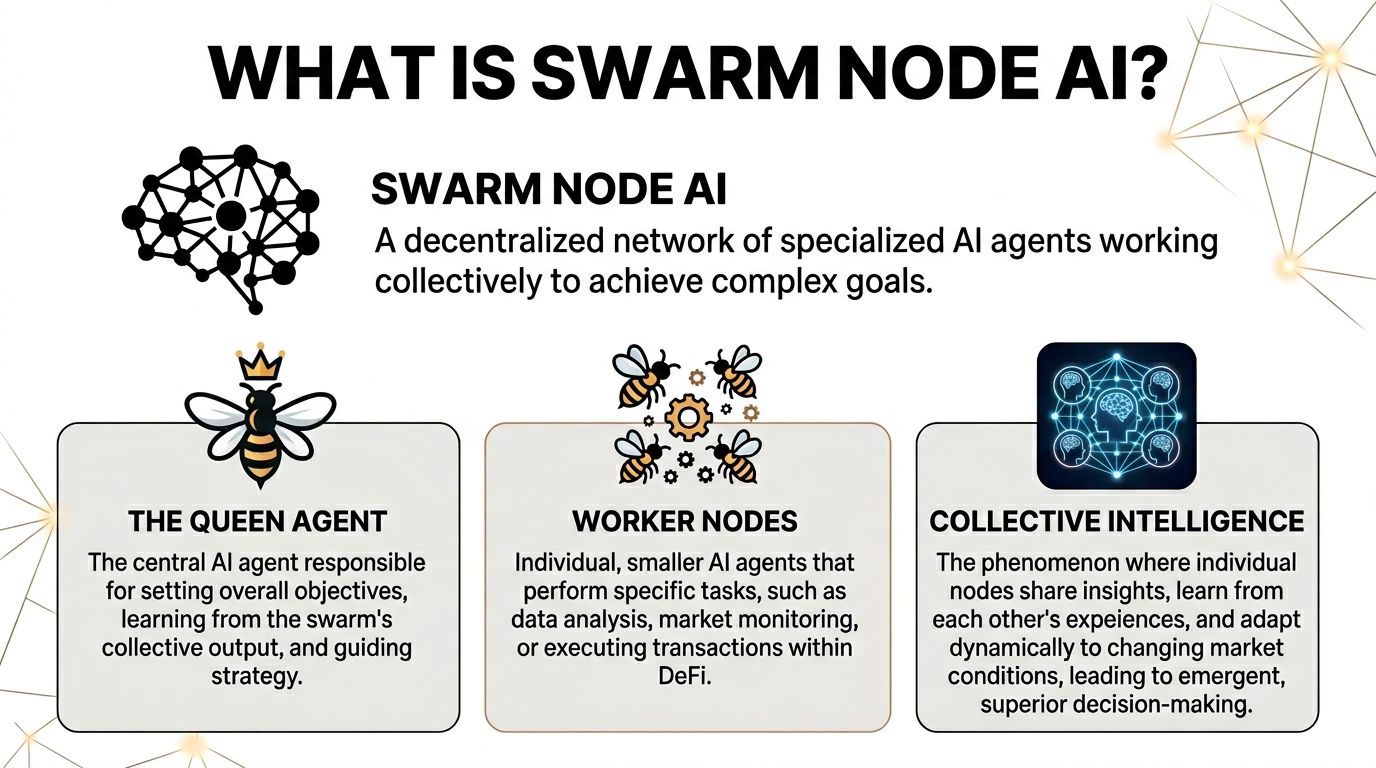

The cleanest way to understand swarm node ai is to stop thinking about one super-bot and start thinking about a colony.

A colony doesn’t have one worker doing everything. It has roles. One part scouts. Another transports. Another defends. The colony works because each unit handles a narrow job, then the combined behavior produces something bigger.

In trading terms, that colony model fits on-chain research well.

Think of a swarm as three layers.

| Layer | What it does in plain English | DeFi example |
|---|---|---|
| Queen agent | Sets the objective and assigns tasks | “Find early signs of a token launch worth watching” |
| Worker agents | Handle narrow sub-tasks | One scans contracts, one checks wallet ties, one reviews liquidity behavior |
| Shared memory | Holds what the swarm learns | Wallet labels, suspicious addresses, prior token interactions, thesis notes |

The queen agent doesn’t need to know everything. It needs to know who should do what next.

That’s different from a single monolithic AI prompt. With one model, you often throw a giant request at it and hope it reasons through every dependency. In a swarm, you break the problem apart.
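The three layers can be sketched in a few lines of Python. This is a minimal illustration, not SwarmNode's API: the class names, the worker functions, and their placeholder return values are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SharedMemory:
    """Holds what the swarm has learned so far (hypothetical structure)."""
    notes: Dict[str, dict] = field(default_factory=dict)


# A worker is a plain function: it can read shared memory and returns findings.
Worker = Callable[[str, SharedMemory], dict]


def scan_contract(token: str, memory: SharedMemory) -> dict:
    # Placeholder: a real worker would inspect the contract on-chain.
    return {"contract_flags": []}


def check_wallet_ties(token: str, memory: SharedMemory) -> dict:
    # Placeholder: a real worker would look up deployer relationships.
    return {"linked_wallets": 0}


def review_liquidity(token: str, memory: SharedMemory) -> dict:
    # Placeholder: a real worker would read pool state over time.
    return {"liquidity_locked": False}


class QueenAgent:
    """Assigns sub-tasks to workers; it does not analyze anything itself."""

    def __init__(self, workers: List[Worker]):
        self.workers = workers

    def run(self, token: str, memory: SharedMemory) -> dict:
        findings = memory.notes.setdefault(token, {})
        for worker in self.workers:
            # Each worker can see what earlier workers already stored.
            findings.update(worker(token, memory))
        return findings


memory = SharedMemory()
queen = QueenAgent([scan_contract, check_wallet_ties, review_liquidity])
report = queen.run("0xTOKEN", memory)
print(report)
```

The queen only routes work. Swapping a worker in or out changes what the swarm investigates without touching the orchestration logic.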

Say the mission is: find high-conviction early entries in a crowded memecoin market.

A single generalist model might give you a broad answer. A swarm can split the task:

That’s a better fit for crypto because on-chain edge usually comes from connecting weak signals, not from one obvious metric.

“Collective intelligence” sounds fluffy until you make it operational.

In a useful swarm, agents should do three things well:

That last part matters. If one agent claims a wallet cluster looks coordinated, another agent should test whether the pattern is real or just accidental overlap.

A swarm is useful when agents reduce uncertainty for each other. It’s useless when they multiply the same bad assumption.
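One way to picture that cross-check: a second agent accepts a "coordinated cluster" claim only if every pair of wallets in it repeatedly bought the same tokens close together in time. The sketch below is a simplified heuristic under assumed inputs; the data shape, the 10-minute window, and the threshold of two shared buys are illustrative choices, not values from any real system.

```python
from itertools import combinations
from typing import Dict, List, Tuple

# Hypothetical input: wallet -> list of (token, unix_timestamp) buys.
Buys = Dict[str, List[Tuple[str, int]]]


def first_buys(buys: List[Tuple[str, int]]) -> Dict[str, int]:
    """Earliest buy timestamp per token for one wallet."""
    first: Dict[str, int] = {}
    for token, ts in buys:
        first[token] = min(ts, first.get(token, ts))
    return first


def shared_timed_buys(a: List[Tuple[str, int]], b: List[Tuple[str, int]],
                      window_s: int) -> int:
    """Count tokens both wallets first bought within window_s seconds of each other."""
    fa, fb = first_buys(a), first_buys(b)
    return sum(1 for t in fa.keys() & fb.keys() if abs(fa[t] - fb[t]) <= window_s)


def challenge_cluster_claim(buys: Buys, wallets: List[str],
                            window_s: int = 600, min_shared: int = 2) -> bool:
    """Accept the claim only if every wallet pair has enough shared timed buys."""
    for w1, w2 in combinations(wallets, 2):
        if shared_timed_buys(buys[w1], buys[w2], window_s) < min_shared:
            return False
    return True


buys = {
    "A": [("T1", 1000), ("T2", 5000)],
    "B": [("T1", 1200), ("T2", 5300)],
    "C": [("T1", 1100), ("T3", 9000)],
}
print(challenge_cluster_claim(buys, ["A", "B"]))
print(challenge_cluster_claim(buys, ["A", "B", "C"]))
```

Here wallets A and B survive the challenge, while adding C fails it because C overlaps with the others on only one token. A single shared buy is treated as possible coincidence.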

If you remember one thing, keep this.

Swarm node ai is not one smarter brain. It’s a network of smaller specialists that can decompose, investigate, and recombine a research task.

That’s why traders care. The opportunity isn’t “AI replaces research.” The opportunity is that the machine can keep many narrow investigations running at once, then highlight only the setups that deserve human judgment.

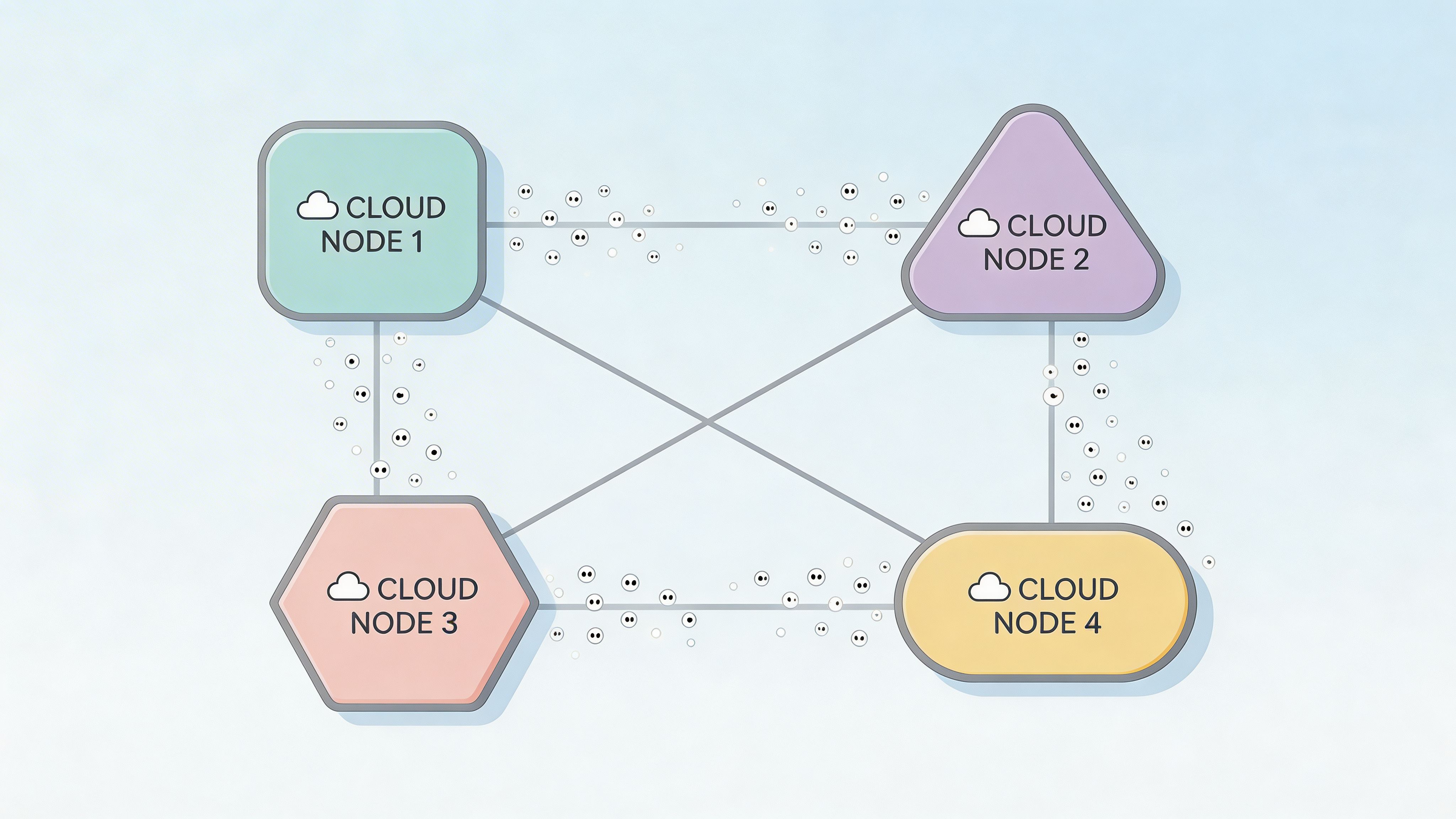

Under the hood, the interesting part of swarm node ai isn’t just the agents. It’s the execution model.

SwarmNode operates on a serverless architecture optimized for AI agent execution, where the platform handles automatic scaling, resource allocation, and database management. Users are charged for actual execution time rather than provisioned capacity, agents can hibernate after completing tasks, and a persistent storage layer lets agents process, store, and hand off data sequentially, according to the SwarmNode technical overview on IQ.wiki.

That sounds abstract, but for traders it changes the economics of running persistent research workflows.

Traditional bot infrastructure has a familiar problem. You pay to keep the machine alive, even when it’s waiting.

With a serverless setup, the ideal flow looks different:

That matters for on-chain monitoring because most of the day is inactivity punctuated by short bursts of importance. A deployer wallet funds a new address. A tracked wallet rotates sectors. A bridge transfer lands. You don’t want to pay for full-time infrastructure just to catch intermittent events.
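The cost argument is easy to check with rough arithmetic. The comparison below uses made-up rates purely for illustration; none of the numbers are SwarmNode pricing, and the function names are hypothetical.

```python
def always_on_cost(hourly_rate: float, hours: float) -> float:
    """Cost of a server that is billed whether or not it does any work."""
    return hourly_rate * hours


def pay_per_run_cost(rate_per_second: float, runs: int, seconds_per_run: float) -> float:
    """Cost when billing covers only the seconds an agent actually executes."""
    return rate_per_second * runs * seconds_per_run


# All rates below are made-up illustration values, not SwarmNode pricing.
HOURS_PER_MONTH = 30 * 24
fixed = always_on_cost(hourly_rate=0.05, hours=HOURS_PER_MONTH)
bursty = pay_per_run_cost(rate_per_second=0.0001, runs=2_000, seconds_per_run=20)
noisy = pay_per_run_cost(rate_per_second=0.0001, runs=20_000, seconds_per_run=20)

print(f"always-on: {fixed:.2f}  bursty: {bursty:.2f}  noisy triggers: {noisy:.2f}")
```

Under these assumed numbers the event-driven setup is far cheaper for bursty workloads, but a tenfold increase in trigger volume makes it more expensive than the always-on server. Pay-per-execution only saves money if triggers are selective.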

The “hibernation” feature is one of the most practical architectural choices.

An agent doesn’t need to stay hot after finishing a task. It can sleep until another trigger arrives through the UI, REST API, or Python SDK, as described in the same IQ.wiki overview. That reduces idle waste and makes the platform better suited to event-driven research.

For a trader, that opens up several useful patterns:

A lot of “AI for trading” tools miss this. They optimize the prompt and ignore the compute model. In production, bad compute design ruins good ideas.

The persistent storage layer is what lets a swarm act like a system instead of a pile of isolated prompts.

One agent can gather transaction data. Another can enrich it with wallet labels. Another can append pattern notes. Another can produce a recommendation or risk flag. Because that state lives across the workflow, you get continuity.

That’s valuable for:

Without state, every agent call starts from scratch. With state, the swarm can build a chain of reasoning.
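A minimal sketch of that handoff, assuming a simple key-value store stands in for the persistent storage layer. The store, the step functions, and the sample data are hypothetical; each `run_step` call represents a separate agent invocation that loads state, adds to it, and saves it again.

```python
import json
from typing import Callable, Dict

# case_id -> JSON text; a stand-in for a persistent storage layer.
Store = Dict[str, str]


def load(store: Store, case_id: str) -> dict:
    return json.loads(store.get(case_id, '{"steps": []}'))


def save(store: Store, case_id: str, state: dict) -> None:
    store[case_id] = json.dumps(state)


def gather_transactions(state: dict) -> None:
    # Placeholder data: a real agent would fetch transfers from a node or indexer.
    state["transfers"] = [{"from": "0xDEPLOYER", "to": "0xFRESH"}]


def add_wallet_labels(state: dict) -> None:
    # Depends on the previous agent's output being present in state.
    state["labels"] = {t["to"]: "fresh address" for t in state["transfers"]}


def write_risk_flag(state: dict) -> None:
    state["risk_flag"] = any(
        label == "fresh address" for label in state["labels"].values()
    )


def run_step(store: Store, case_id: str, step: Callable[[dict], None]) -> None:
    """One agent invocation: load state, do the work, record the step, persist."""
    state = load(store, case_id)
    step(state)
    state["steps"].append(step.__name__)
    save(store, case_id, state)


store: Store = {}
for step in (gather_transactions, add_wallet_labels, write_risk_flag):
    run_step(store, "case-1", step)

final_state = load(store, "case-1")
print(final_state)
```

Because state is serialized between steps, each agent could run at a different time and still pick up where the last one stopped. The recorded `steps` list also gives a basic audit trail.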

Operational insight: In DeFi, the hard part usually isn’t detecting one event. It’s preserving context across a series of related events without forcing a human to reassemble the story manually.

A normal cloud bot often feels like renting an office for a courier. The courier drops off one package, then the office sits there costing money.

Swarm-style serverless infrastructure is closer to hiring the courier only when a package needs delivery. That doesn’t make the courier smarter. It just aligns cost with use.

For advanced users building monitoring workflows, that’s the key distinction between toy agents and systems you might keep running.

A lot of crypto traders exploring agent frameworks should also study broader AI agents in crypto infrastructure, because the architecture determines whether the strategy scales cleanly or becomes an expensive science project.

If you were deploying a swarm for on-chain work, the stack would usually look like this:

| Component | Practical role | What to watch for |
|---|---|---|
| Trigger layer | Detects on-chain events worth waking the swarm for | Too many triggers create noise and cost |
| Orchestration layer | Decides which agent runs next | Bad routing creates contradictory outputs |
| Execution layer | Runs each specialized task | Latency matters when timing matters |
| Storage layer | Keeps shared state between agents | Dirty state leads to bad downstream analysis |
| Review layer | Produces a trader-readable output | Summaries must stay audit-friendly |

This architecture can work very well for monitoring and enrichment. It gets much shakier when traders expect perfect autonomous decision-making. That’s where the next set of trade-offs appears.
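The trigger layer is where noise and cost are easiest to control. As a rough sketch, a filter might drop small events and apply a cooldown per wallet and event type before waking the swarm. The `Event` fields, the dollar threshold, and the cooldown length are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class Event:
    wallet: str
    kind: str         # e.g. "transfer" or "swap"
    usd_value: float
    timestamp: int    # unix seconds


def filter_triggers(events: Iterable[Event], min_usd: float = 10_000.0,
                    cooldown_s: int = 300) -> List[Event]:
    """Keep only events large enough to matter, at most one per
    (wallet, kind) pair inside each cooldown window."""
    last_fired: Dict[Tuple[str, str], int] = {}
    kept: List[Event] = []
    for ev in sorted(events, key=lambda e: e.timestamp):
        if ev.usd_value < min_usd:
            continue  # too small to justify waking the swarm
        key = (ev.wallet, ev.kind)
        prev = last_fired.get(key)
        if prev is not None and ev.timestamp - prev < cooldown_s:
            continue  # same wallet and kind fired recently
        last_fired[key] = ev.timestamp
        kept.append(ev)
    return kept


events = [
    Event("0xA", "swap", 50_000, 0),
    Event("0xA", "swap", 60_000, 100),
    Event("0xA", "swap", 500, 400),
    Event("0xA", "swap", 20_000, 400),
    Event("0xB", "transfer", 15_000, 50),
]
kept = filter_triggers(events)
print([(e.wallet, e.timestamp) for e in kept])
```

Five raw events become three swarm invocations. Tightening or loosening the two parameters is a direct trade between missed signals and wasted executions.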

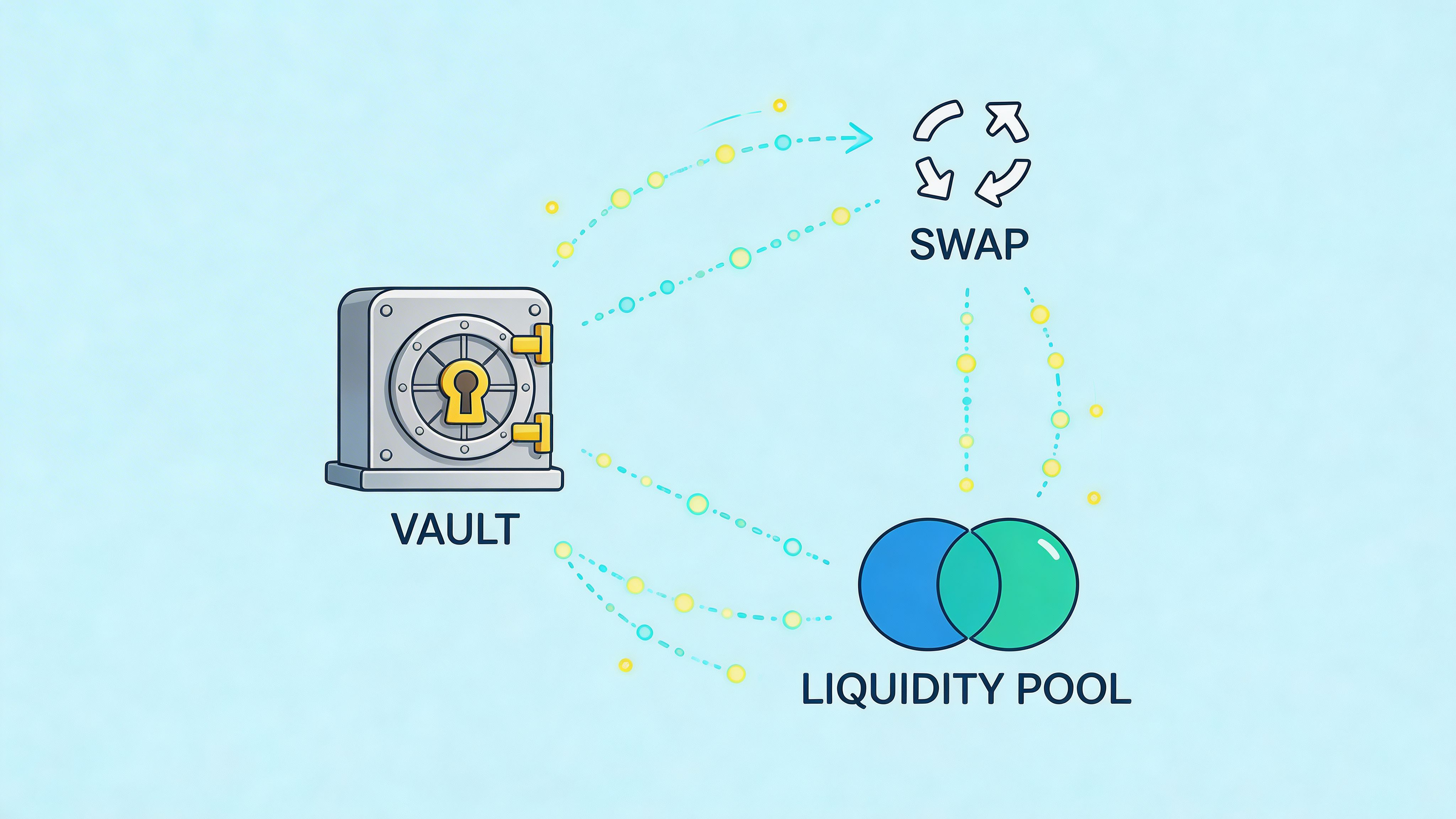

The best use cases for swarm node ai aren’t fully automated trading bots. They’re research accelerators.

That distinction matters. Traders lose money when they ask immature systems to make final decisions. They gain an advantage when those systems narrow the search space, enrich raw activity, and rank what deserves attention.

A swarm can shine here.

One agent watches contract deployments. Another checks who funded the deployer and whether the source wallet links to known trading clusters. A third reviews first-wave buyers. A fourth checks whether liquidity behavior looks organic or staged.

That gives you something far better than “new token launched.”

You get a structured question: Is this a random launch, a recycled team, a farm setup, or the beginning of a real speculative push?

A practical output might include:

Most wallet tracking setups stop at notifications. They tell you that a wallet bought or sold.

A swarm can push further by modeling style.

Instead of tracking one wallet in isolation, a set of agents can compare behavior across a cluster. One checks average hold style. Another scores aggressiveness on entries. Another studies whether exits happen into strength or after narrative decay. Another maps which sectors tend to get touched first.

That creates a much more useful result than copy trading by reflex. You start seeing how the wallet thinks, not just what it clicked.
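A style profile of this kind can start from a handful of simple statistics over closed trades. The sketch below assumes a hypothetical `ClosedTrade` record and treats an exit at 80 percent or more of the peak price as "into strength"; both are illustrative choices rather than an established standard.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass
class ClosedTrade:
    entry_ts: int       # unix seconds
    exit_ts: int        # unix seconds
    entry_price: float
    exit_price: float
    peak_price: float   # highest price seen between entry and exit


def style_profile(trades: List[ClosedTrade], strength_ratio: float = 0.8) -> Dict[str, float]:
    """Summarize how a wallet tends to hold and exit, not just what it bought."""
    if not trades:
        raise ValueError("need at least one closed trade")
    hold_hours = [(t.exit_ts - t.entry_ts) / 3600 for t in trades]
    into_strength = sum(
        1 for t in trades if t.exit_price >= strength_ratio * t.peak_price
    )
    wins = sum(1 for t in trades if t.exit_price > t.entry_price)
    return {
        "median_hold_hours": median(hold_hours),
        "exit_into_strength_rate": into_strength / len(trades),
        "win_rate": wins / len(trades),
    }


trades = [
    ClosedTrade(0, 7200, 1.0, 1.9, 2.0),
    ClosedTrade(0, 3600, 1.0, 0.5, 1.2),
    ClosedTrade(0, 10800, 2.0, 3.0, 5.0),
]
profile = style_profile(trades)
print(profile)
```

Comparing these profiles across a cluster is what separates a wallet with a repeatable approach from one that simply got lucky on a few entries.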

Some of the highest-value research in DeFi happens after something weird already occurred.

A swarm can help when:

One agent traces the funding path. Another groups addresses by behavior. Another checks whether those addresses have interacted before. Another creates a readable incident timeline.

Here, a swarm often beats a solo analyst on speed. Not on judgment. On speed.

In forensic work, the edge comes from compressing investigation time. If the system gives you a cleaner map in minutes instead of hours, that alone can protect capital.
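Tracing a funding path is essentially a graph search. The sketch below runs a breadth-first search backward from a suspicious address until it reaches a known source, with a hop limit to keep the search bounded. The graph format and the address labels are hypothetical.

```python
from collections import deque
from typing import Dict, List, Optional, Set

# Hypothetical funding graph: funded address -> addresses that sent it funds.
FundedBy = Dict[str, List[str]]


def trace_funding_path(funded_by: FundedBy, start: str, known_sources: Set[str],
                       max_hops: int = 5) -> Optional[List[str]]:
    """Breadth-first search from `start` back through its funders.

    Returns the shortest path ending at a known source, or None if no
    known source is reachable within max_hops.
    """
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        current = path[-1]
        if current in known_sources:
            return path
        if len(path) - 1 >= max_hops:
            continue  # hop budget spent on this branch
        for funder in funded_by.get(current, []):
            if funder not in seen:
                seen.add(funder)
                queue.append(path + [funder])
    return None


graph = {
    "0xFRESH": ["0xMID1", "0xNOISE"],
    "0xMID1": ["0xDEPLOYER"],
    "0xNOISE": [],
}
path = trace_funding_path(graph, "0xFRESH", {"0xDEPLOYER"})
print(path)
```

Breadth-first order means the first path found is also the shortest, which is usually the one worth showing in an incident timeline.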

Low-cap trading kills people through skipped process.

A swarm can force discipline by giving separate agents distinct review jobs:

| Review lane | What the agent checks | Why it matters |
|---|---|---|
| Contract lane | Basic contract behavior and suspicious patterns | Filters obvious technical red flags |
| Wallet lane | Deployer and insider relationships | Finds recycled or linked actors |
| Liquidity lane | Pool setup and changes over time | Spots fragility or odd incentives |
| Narrative lane | Messaging consistency and claimed purpose | Filters obvious nonsense |
| Community lane | Whether activity looks coordinated or natural | Helps separate traction from theater |

This doesn’t replace human due diligence. It gives you a tighter first pass.
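The review lanes map naturally onto independent checks whose results are combined into one first-pass report. The sketch below implements three of the five lanes for brevity. The token fields (`owner_can_mint`, `deployer_flagged`, `liquidity_locked`) and the flag wording are hypothetical.

```python
from typing import Callable, Dict, List

# A lane check returns a list of red flags; an empty list means clean.
LaneCheck = Callable[[dict], List[str]]


def contract_lane(token: dict) -> List[str]:
    return ["owner can still mint"] if token.get("owner_can_mint") else []


def wallet_lane(token: dict) -> List[str]:
    return ["deployer linked to a flagged launch"] if token.get("deployer_flagged") else []


def liquidity_lane(token: dict) -> List[str]:
    return [] if token.get("liquidity_locked", False) else ["liquidity not locked"]


LANES: Dict[str, LaneCheck] = {
    "contract": contract_lane,
    "wallet": wallet_lane,
    "liquidity": liquidity_lane,
}


def first_pass(token: dict, lanes: Dict[str, LaneCheck] = LANES) -> dict:
    """Run every lane, even if an earlier one already raised a flag."""
    flags = {name: check(token) for name, check in lanes.items()}
    flagged = [name for name, found in flags.items() if found]
    return {"flags": flags, "flagged_lanes": flagged, "passed": not flagged}


risky = first_pass({"owner_can_mint": True, "deployer_flagged": False,
                    "liquidity_locked": True})
clean = first_pass({"owner_can_mint": False, "deployer_flagged": False,
                    "liquidity_locked": True})
print(risky["flagged_lanes"], clean["passed"])
```

Running every lane regardless of earlier results is the point: the report shows everything that was checked, so a skipped step is visible instead of silent.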

Most traders underuse AI on the after-action side.

Swarm systems are well suited to reviewing your own trades because the tasks can be split cleanly. One agent reconstructs the timeline. Another measures whether your entry aligned with wallet activity. Another compares your exit to the later move. Another identifies where your thesis changed.

That’s a much better use of current agent systems than pretending they can trade unattended across every market regime.

The sweet spot for swarm node ai in DeFi is narrow but real.

It works best when the job is:

It works worst when traders expect clean autonomous execution in live, messy conditions where ambiguity matters more than speed.

A wallet finder tells you who moved. Swarm intelligence can help answer why this move matters.

That difference is the integration opportunity.

Most traders already have a list of wallets they respect. The bottleneck isn’t discovering another wallet. It’s interpreting patterns across many wallets without drowning in noise. A swarm layer can take a wallet-tracking workflow from reactive notification to active pattern analysis.

Say you follow a strong wallet that often rotates early into new sectors. A normal tracker can show buys and sells. A swarm can create a deeper profile around that behavior.

One agent can inspect timing. Another can compare position sizing across prior trades. Another can check whether the wallet tends to follow a funding source, front-run a narrative, or shadow a cluster. Another can look for nearby wallets with similar but not identical behavior.

That gives the trader a stronger question than “Should I copy this buy?”

The better question is: Is this wallet expressing a repeatable strategy, and are there adjacent wallets showing the same tell before the crowd notices?
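Finding wallets with "similar but not identical" behavior can be approximated with set overlap. The sketch below scores wallets by Jaccard similarity over the tokens they entered early and keeps only those inside a similarity band. The band limits and the sample data are assumptions for illustration.

```python
from typing import Dict, List, Set, Tuple


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Overlap of two sets: size of intersection divided by size of union."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def adjacent_wallets(target: str, early_tokens: Dict[str, Set[str]],
                     low: float = 0.3, high: float = 0.9) -> List[Tuple[str, float]]:
    """Wallets whose early entries overlap with the target's, excluding
    near-identical ones (which are more likely mirrors or copy bots)."""
    base = early_tokens[target]
    scored = [
        (wallet, jaccard(base, tokens))
        for wallet, tokens in early_tokens.items()
        if wallet != target
    ]
    in_band = [(w, s) for w, s in scored if low <= s < high]
    return sorted(in_band, key=lambda pair: -pair[1])


early = {
    "lead": {"A", "B", "C", "D"},
    "w1": {"A", "B", "C", "D"},   # identical: excluded by the upper bound
    "w2": {"A", "B", "E"},        # partial overlap: kept
    "w3": {"X"},                  # no overlap: excluded
}
neighbors = adjacent_wallets("lead", early)
print(neighbors)
```

The upper bound is a deliberate design choice. A wallet that matches the leader exactly adds no new information, while a partial match may be an independent actor reading the same signal.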

For custom research, integration becomes particularly interesting.

Inside a wallet analysis stack, swarm-style jobs could be used for tasks like:

That’s close to what traders need. Not generic AI chat. Focused research jobs attached to concrete on-chain entities.

A broader view of this trend appears in work around agent tooling such as DeepSeek AI agent workflows in crypto research, where the key shift is from static dashboards to systems that can investigate a thesis on request.

A serious implementation would need guardrails.

The swarm shouldn’t be allowed to invent conclusions just because several agents agree with each other. It should expose reasoning steps clearly enough that a human can audit them.
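One possible guardrail is to count distinct pieces of evidence rather than the number of agents that agree. In the sketch below, a conclusion is surfaced only if it is backed by at least two different evidence items such as transaction hashes. The `Claim` structure and the threshold are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Claim:
    agent: str
    conclusion: str
    evidence: List[str] = field(default_factory=list)  # e.g. transaction hashes


def auditable_conclusions(claims: List[Claim],
                          min_distinct_evidence: int = 2) -> Dict[str, List[str]]:
    """Keep conclusions backed by enough distinct evidence items.

    Agents repeating the same evidence count once, and agents that cite
    nothing add no weight at all.
    """
    by_conclusion: Dict[str, Set[str]] = {}
    for claim in claims:
        by_conclusion.setdefault(claim.conclusion, set()).update(claim.evidence)
    return {
        conclusion: sorted(evidence)
        for conclusion, evidence in by_conclusion.items()
        if len(evidence) >= min_distinct_evidence
    }


claims = [
    Claim("agent-1", "coordinated accumulation", ["0xaaa"]),
    Claim("agent-2", "coordinated accumulation", ["0xaaa"]),
    Claim("agent-3", "coordinated accumulation"),
    Claim("agent-4", "random overlap", ["0xbbb", "0xccc"]),
]
accepted = auditable_conclusions(claims)
print(accepted)
```

Three agents agree on "coordinated accumulation" here, but they share a single transaction between them, so the claim is held back. The returned evidence lists are what a human reviewer would audit.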

The strongest product direction would be something like a research marketplace inside the analytics workflow:

The best integrations won’t feel like “AI added to a dashboard.” They’ll feel like hiring a junior analyst who never gets tired, but still has to show their work.

That’s the level where swarm node ai can become useful to real traders instead of staying a demo.

This is the part most project pages avoid.

The core issue with swarm node ai today isn’t whether it can produce impressive demos. It can. The issue is whether it stays reliable, cost-aware, and profitable when you run it against live DeFi conditions.

Existing coverage of Swarm Node AI is thin on node operator profitability and token economics, and it leaves major reliability questions open. A Gate Learn analysis notes a lack of specifics on APY and risk-adjusted returns. For this category of multi-agent setups, it also cites 30 to 50 percent inconsistency in architectural choices across runs, and reports that complex financial analysis fails 20 percent more often than in single-agent systems because of handoff latency, as summarized in Gate Learn’s discussion of Swarm Node and multi-agent limitations.

That should change how you evaluate the entire stack.

| Aspect | The Promise (Marketing) | Challenges in Implementation |
|---|---|---|
| Cost efficiency | Pay only when agents run, so costs stay lean | Variable execution pricing helps, but poorly scoped tasks and repeated handoffs can still create messy cost creep |
| Passive rewards | Node operators earn attractive income from participation | Public detail on ROI, token economics, and risk-adjusted returns is still thin |
| Better intelligence | More agents means better decisions | More agents can also mean more disagreement, duplicated effort, and drift |
| Reliability | Distributed systems are resilient by design | Handoffs can fail, context can degrade, and outputs can become inconsistent |
| DeFi readiness | Perfect for always-on trading analysis | Live financial workflows punish latency, ambiguity, and edge-case errors hard |
| Scalability | Easy to expand with more specialized agents | Scaling complexity often arrives faster than scaling quality |

A lot of traders assume adding more agents creates more certainty. It doesn’t.

It can create the opposite. If one agent interprets a wallet cluster as coordinated accumulation, another reads it as random overlap, and a third agent summarizes both badly, the system doesn’t become smarter. It becomes more confident-looking while staying wrong.

The handoff problem is especially important in DeFi because trading research is often sequential:

If one link in that chain loses context, the whole conclusion becomes unstable.
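The compounding effect is easy to quantify under a simplifying assumption: if each handoff preserves context with the same probability and failures are independent, the reliability of the whole chain is that probability raised to the number of handoffs. The 95 percent figure below is an illustrative input, not a measured value.

```python
def chain_reliability(step_success: float, steps: int) -> float:
    """Probability that every step in a sequential chain succeeds,
    assuming independent steps with equal success probability."""
    if not 0.0 <= step_success <= 1.0:
        raise ValueError("step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_success ** steps


for steps in (1, 3, 5, 10):
    print(steps, round(chain_reliability(0.95, steps), 4))
```

With five handoffs at 95 percent each, the full chain holds together only about 77 percent of the time. Every extra agent in a sequential workflow has to earn its place against that decay.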

People hear “serverless” and assume cheap.

That’s directionally true compared with wasteful always-on infrastructure. But total system cost includes more than compute. It includes retries, duplicate analysis, false alerts, and on-chain execution overhead where applicable.

The ugly version of swarm economics looks like this:

That’s not alpha. That’s a cost center.

There’s also a security angle that gets less attention than it should.

The more tools and permissions you give a swarm, the more careful you need to be about:

For most traders, the safe answer is simple. Keep swarm systems on the analysis side, not the execution side, until the workflow proves itself under stress.

Don’t give a multi-agent system the keys to capital just because it writes convincing summaries.

This deserves blunt treatment.

Projects in this category often attract users who want passive rewards or node income. But the public material is usually much stronger on architecture than on operator economics. Without clear disclosure on emissions, demand drivers, reward sustainability, and how much usage is organic versus incentivized, “passive rewards” is just a narrative.

A trader should ask:

If those answers are soft, the node thesis is soft too.

For practitioners, the best current posture is conservative.

Use swarm node ai for:

Avoid over-reliance in:

That may sound less exciting than the marketing. It’s also how you stay solvent.

The market already gave one important lesson about this category.

SwarmNode.ai’s token SNAI reached an all-time high of $0.13 on January 4, 2025, then fell to roughly $0.00105 by April 2026, which placed it 99.33% below its all-time high according to CryptoSlate’s April 2026 reporting. The same source lists an all-time low of $0.00026 on January 9, 2026, with a rebound of 223.70% from that low by early April. CryptoSlate also reported a $1.05 million market capitalization, $111.92K in 24-hour trading volume, a total supply of 999.99 million SNAI, fully circulating supply, and 1.56K holders. It also noted planned product directions such as agent scheduling and a community agent library. Those details appear in CryptoSlate’s SwarmNode.ai market page.

That’s a full-cycle reminder in one chart. Early excitement around AI infrastructure can reprice hard in both directions. The technology can stay interesting while the token gets crushed.

The product side is more interesting than the speculative side right now.

Three developments matter:

| Trend | Why it matters to traders | What to watch |
|---|---|---|
| Agent scheduling | Better timing for recurring research jobs | Whether scheduling reduces noisy always-on workflows |
| Community agent libraries | Reusable agents could lower setup friction | Whether useful agents emerge beyond demos |
| Human-AI verification loops | Better guardrails for trading research | Whether outputs become easier to audit before action |

The community library idea is especially notable. If it matures, it could act like an AI app store for narrow on-chain tasks. That would make swarm node ai more accessible to traders who can define a workflow but don’t want to engineer every component from scratch.

A good next move is small and testable.

Don’t start by buying the token and assuming usage will follow. Start by evaluating the workflow itself.

Use this checklist:

That process will tell you more than any promo thread.

Treat swarm node ai like a new research instrument, not a shortcut to effortless edge.

If the systems improve, the upside is real. A mature stack with strong scheduling, reusable agents, and clean human verification could become a serious layer in on-chain analysis. But today, the gap between demo quality and production reliability is still wide enough to matter.

The traders who benefit first won’t be the ones chasing every AI token. They’ll be the ones who use these tools to tighten process, reduce blind spots, and make fewer bad decisions in noisy markets.

If you want a practical way to turn wallet activity into tradeable research today, Wallet Finder.ai gives you a cleaner starting point. You can track profitable wallets, study complete trading histories, monitor entry and exit behavior, and build watchlists around real on-chain moves instead of guesswork. That makes it easier to test your own swarm-style research workflows on top of solid wallet intelligence rather than hype.